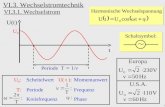


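Algebra

$U = \sum_t a(t)\,Y(t)$, $V = \sum_t b(t)\,Y(t)$

$E\{U\} = c_Y \sum_t a(t)$, where $c_Y = E\{Y(t)\}$

$\operatorname{cov}\{U,V\} = \sum_s \sum_t a(s)\,b(t)\,c_{YY}(s-t)$

U is Gaussian if {Y(t)} is Gaussian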
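A minimal sketch of the covariance formula for two linear combinations of a stationary series. The function names `cov_linear` and `white_noise_acov`, the weights, and the variance value are illustrative assumptions, not part of the slides.

```python
def cov_linear(a, b, c_yy):
    """cov{U, V} for U = sum_s a(s) Y(s), V = sum_t b(t) Y(t),
    given the autocovariance function c_yy(u) of the stationary series Y."""
    return sum(a[s] * b[t] * c_yy(s - t)
               for s in range(len(a))
               for t in range(len(b)))


def white_noise_acov(u, sigma2=2.0):
    """Autocovariance of white noise with variance sigma2 (assumed value)."""
    return sigma2 if u == 0 else 0.0


a = [1.0, 2.0, 3.0]   # hypothetical weights a(t)
b = [0.5, -1.0, 4.0]  # hypothetical weights b(t)

cov_uv = cov_linear(a, b, white_noise_acov)  # sigma2 * sum a(t) b(t)
var_u = cov_linear(a, a, white_noise_acov)   # sigma2 * sum a(t)^2
```

With white noise only the s = t terms survive, so the double sum collapses to σ² ∑ a(t) b(t).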

Some useful stochastic models

Purely random / white noise (i.i.d.)

(often mean assumed 0)

$c_{YY}(u) = \operatorname{cov}\{Y(t+u), Y(t)\} = \sigma_Y^2$ if $u = 0$, and $= 0$ if $u \neq 0$

$\rho_{YY}(u) = 1$ if $u = 0$, and $= 0$ if $u \neq 0$

A building block
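As a rough check of the white-noise autocovariance, the sketch below simulates Gaussian white noise and computes sample autocorrelations. The helper `sample_acov`, the seed, the sample size, and σ = 2 are assumptions chosen for illustration.

```python
import random


def sample_acov(y, u):
    """Sample autocovariance at lag u >= 0 (divisor n, mean-corrected)."""
    n = len(y)
    ybar = sum(y) / n
    return sum((y[t + u] - ybar) * (y[t] - ybar) for t in range(n - u)) / n


rng = random.Random(0)  # fixed seed so the run is reproducible
sigma = 2.0             # assumed standard deviation
y = [rng.gauss(0.0, sigma) for _ in range(20000)]

c0 = sample_acov(y, 0)                             # should be near sigma**2
rho = [sample_acov(y, u) / c0 for u in range(4)]   # near 1, 0, 0, 0
```

The sample autocorrelations at nonzero lags are not exactly zero; they fluctuate around zero with standard deviation of roughly 1/√n.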

Random walk

Not stationary, but the differenced series is:

$\Delta Y(t) = Y(t) - Y(t-1) = Z(t)$

$Y(t) = Y(t-1) + Z(t)$, $Y(0) = 0$

$Y(t) = \sum_{i=1}^{t} Z(i)$

$E\{Y(t)\} = t\,\mu_Z$

$\operatorname{var}\{Y(t)\} = t\,\sigma_Z^2$
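The growth of the random-walk mean and variance with t can be illustrated by simulation. This is a sketch under assumed values (Gaussian steps, μ_Z = 0.5, σ_Z = 1, t = 50, 4000 paths); `random_walk` is a hypothetical helper.

```python
import random


def random_walk(t_max, mu, sigma, rng):
    """Return Y(1), ..., Y(t_max) with Y(0) = 0 and i.i.d. Gaussian steps."""
    y = 0.0
    path = []
    for _ in range(t_max):
        y += rng.gauss(mu, sigma)  # Y(t) = Y(t-1) + Z(t)
        path.append(y)
    return path


rng = random.Random(1)
mu_z, sigma_z, t_max, n_paths = 0.5, 1.0, 50, 4000

# Value of each simulated path at time t_max.
finals = [random_walk(t_max, mu_z, sigma_z, rng)[-1] for _ in range(n_paths)]

mean_final = sum(finals) / n_paths
var_final = sum((x - mean_final) ** 2 for x in finals) / (n_paths - 1)
# Theory: E{Y(t)} = t * mu_z = 25 and var{Y(t)} = t * sigma_z**2 = 50.
```

Because both moments depend on t, the series cannot be stationary, while its first difference is just the white-noise step sequence.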

Moving average, MA(q)

Y(t) = β(0)Z(t) + β(1)Z(t-1) +…+ β(q)Z(t-q)

If E{Z(t)} = 0, E{Y(t)} = 0

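$c_{YY}(u) = \sigma_Z^2 \sum_{t=0}^{q-u} \beta(t)\,\beta(t+u)$, $u = 0, 1, \dots, q$

$c_{YY}(u) = 0$, $u > q$

$c_{YY}(-u) = c_{YY}(u)$, so the process is stationary

MA(1), taking $\beta(0) = 1$:

$\rho_{YY}(u) = 1$, $u = 0$

$= \beta(1)/(1 + \beta(1)^2)$, $u = \pm 1$

$= 0$ otherwise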
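The MA(q) autocovariance formula translates directly into code. The sketch below is illustrative: `ma_acov` and `ma_acf` are hypothetical names, and the coefficients β(0) = 1, β(1) = 0.6 are assumed values.

```python
def ma_acov(beta, u, sigma2_z=1.0):
    """Autocovariance at lag u of Y(t) = sum_{j=0}^{q} beta[j] Z(t-j)."""
    q = len(beta) - 1
    u = abs(u)                 # c_YY(-u) = c_YY(u)
    if u > q:
        return 0.0             # cuts off after lag q
    return sigma2_z * sum(beta[t] * beta[t + u] for t in range(q - u + 1))


def ma_acf(beta, u):
    """Autocorrelation: autocovariance scaled by the lag-0 value."""
    return ma_acov(beta, u) / ma_acov(beta, 0)


beta = [1.0, 0.6]          # MA(1) with beta(0) = 1, beta(1) = 0.6 (assumed)
rho1 = ma_acf(beta, 1)     # beta(1) / (1 + beta(1)**2)
rho2 = ma_acf(beta, 2)     # zero, since 2 > q
```

The cutoff of the autocorrelation after lag q is the usual diagnostic signature of an MA(q) model.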

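Backward shift operator B

$B^j Y(t) = Y(t-j)$, so $B^j X_t = X_{t-j}$ (remember the translation operator, $T^u Y(t) = Y(t+u)$)

Linear process, MA(∞)

$X_t = \sum_{i=0}^{\infty} \psi_i Z_{t-i}$

Need convergence condition, e.g. $\sum_i |\psi_i| < \infty$ or $\sum_i |\psi_i|^2 < \infty$

MA(q)

$X_t = (\beta_0 + \beta_1 B + \dots + \beta_q B^q) Z_t = \theta(B) Z_t$

$\theta(B) = \beta_0 + \beta_1 B + \dots + \beta_q B^q$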
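To make the operator notation concrete, the sketch below applies a polynomial in the backward shift operator to a short sequence. The function name `apply_shift_polynomial` and the input values are assumptions for illustration.

```python
def apply_shift_polynomial(coeffs, z):
    """Apply (coeffs[0] + coeffs[1] B + ... + coeffs[q] B^q) to the sequence z.

    Returns X_t = sum_j coeffs[j] * z[t - j] for t = q, ..., len(z) - 1,
    i.e. only for those t where every lagged value exists.
    """
    q = len(coeffs) - 1
    return [sum(coeffs[j] * z[t - j] for j in range(q + 1))
            for t in range(q, len(z))]


z = [1.0, 0.0, 0.0, 2.0, 0.0]                      # hypothetical input series
x = apply_shift_polynomial([1.0, 0.5, 0.25], z)    # an MA(2) filter
```

Here `x` holds the filtered values for t = 2, 3, 4; the first two time points are dropped because Z at negative times is unavailable.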

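autoregressive process, AR(p)

$X_t = \phi_1 X_{t-1} + \dots + \phi_p X_{t-p} + Z_t$

$\phi(B) X_t = Z_t$

first-order, AR(1), Markov

$X_t = \phi X_{t-1} + Z_t$

$X_t = Z_t + \phi Z_{t-1} + \phi^2 X_{t-2}$

$\quad = Z_t + \phi Z_{t-1} + \phi^2 Z_{t-2} + \dots$ (**)

Linear process, invertible

For convergence in probability/stationarity, $|\phi| < 1$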

a.c.f. of AR(1) with coefficient $\phi$, from (**) on the previous slide

$c_{XX}(k) = \sigma_Z^2\,\phi^{|k|}/(1-\phi^2)$, $k = 0, \pm 1, \pm 2, \dots$

$\rho_{XX}(k) = \phi^{|k|}$
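The AR(1) autocovariance can be checked numerically by truncating the infinite moving-average representation, whose weights are powers of the AR coefficient. In this sketch the symbol `phi` for that coefficient, the value 0.7, and the truncation at 200 terms are assumptions.

```python
def ar1_acov_from_ma(phi, k, sigma2_z=1.0, n_terms=200):
    """Autocovariance from the truncated linear-process form
    X_t = sum_j phi**j Z_{t-j}:  sigma2_z * sum_j phi**j * phi**(j + |k|)."""
    k = abs(k)
    return sigma2_z * sum(phi ** j * phi ** (j + k) for j in range(n_terms))


def ar1_acov_closed(phi, k, sigma2_z=1.0):
    """Closed form sigma2_z * phi**|k| / (1 - phi**2), valid for |phi| < 1."""
    return sigma2_z * phi ** abs(k) / (1.0 - phi ** 2)


phi = 0.7  # assumed AR(1) coefficient, inside the stationary region
pairs = [(ar1_acov_from_ma(phi, k), ar1_acov_closed(phi, k)) for k in range(5)]
acf = [ar1_acov_closed(phi, k) / ar1_acov_closed(phi, 0) for k in range(5)]
```

The geometric series converges only when |phi| < 1, which is the same condition required for stationarity.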

p.a.c.f., using normal or linear definitions

corr{Y(t), Y(t-m) | Y(t-1), ..., Y(t-m+1)}

= 0 for m > p when Y is AR(p)

Proof: via multiple regression

Useful for prediction

In the general case,

$X_t = \phi_1 X_{t-1} + \dots + \phi_p X_{t-p} + Z_t$, i.e. $\phi(B) X_t = Z_t$ with $\phi(z) = 1 - \phi_1 z - \dots - \phi_p z^p$

need roots of $\phi(z) = 0$ in $|z| > 1$ for stationarity
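The cutoff of the partial autocorrelation after lag p, and the root condition for stationarity, can both be illustrated for an AR(2). The sketch uses the Durbin-Levinson recursion, which is one standard way to compute the p.a.c.f. and is not named on the slides; the coefficients 0.5 and 0.3 and all function names are assumptions.

```python
import cmath


def pacf_durbin_levinson(rho, max_lag):
    """Partial autocorrelations at lags 1..max_lag from autocorrelations rho,
    where rho[0] == 1, using the Durbin-Levinson recursion."""
    pacf = []
    prev = []  # prev[j-1] holds the lag-j coefficient of the order-(m-1) fit
    for m in range(1, max_lag + 1):
        num = rho[m] - sum(prev[j - 1] * rho[m - j] for j in range(1, m))
        den = 1.0 - sum(prev[j - 1] * rho[j] for j in range(1, m))
        phi_mm = num / den
        prev = [prev[j - 1] - phi_mm * prev[m - j - 1]
                for j in range(1, m)] + [phi_mm]
        pacf.append(phi_mm)
    return pacf


def ar2_acf(phi1, phi2, max_lag):
    """Autocorrelations of a stationary AR(2) via its Yule-Walker recursion."""
    rho = [1.0, phi1 / (1.0 - phi2)]
    for k in range(2, max_lag + 1):
        rho.append(phi1 * rho[k - 1] + phi2 * rho[k - 2])
    return rho


def ar2_roots(phi1, phi2):
    """Roots of 1 - phi1 z - phi2 z^2 = 0 (requires phi2 != 0)."""
    disc = cmath.sqrt(phi1 ** 2 + 4.0 * phi2)
    return ((-phi1 + disc) / (2.0 * phi2), (-phi1 - disc) / (2.0 * phi2))


phi1, phi2 = 0.5, 0.3  # assumed AR(2) coefficients
pacf = pacf_durbin_levinson(ar2_acf(phi1, phi2, 5), 5)
is_stationary = all(abs(z) > 1.0 for z in ar2_roots(phi1, phi2))
```

For these values the partial autocorrelation at lag 2 equals the second AR coefficient and vanishes at lags 3, 4 and 5, and both roots lie outside the unit circle.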

Yule-Walker equations for AR(p).

Sometimes used for estimation

Correlate, with $X_{t-k}$, each side of

$X_t = \phi_1 X_{t-1} + \dots + \phi_p X_{t-p} + Z_t$

$\rho_{XX}(k) = \phi_1\,\rho_{XX}(k-1) + \dots + \phi_p\,\rho_{XX}(k-p)$, $k > 0$
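A minimal sketch of Yule-Walker estimation for an AR(2): simulate a series, compute sample autocorrelations at lags 1 and 2, and solve the two Yule-Walker equations for the coefficients. The true coefficients, seed, series length, burn-in, and function names are all assumptions made for the example.

```python
import random


def sample_acf(x, max_lag):
    """Sample autocorrelations at lags 0..max_lag (divisor n, mean-corrected)."""
    n = len(x)
    xbar = sum(x) / n
    c0 = sum((v - xbar) ** 2 for v in x) / n
    return [sum((x[t + k] - xbar) * (x[t] - xbar) for t in range(n - k)) / n / c0
            for k in range(max_lag + 1)]


def yule_walker_ar2(rho1, rho2):
    """Solve rho1 = phi1 + phi2*rho1 and rho2 = phi1*rho1 + phi2 for (phi1, phi2)."""
    denom = 1.0 - rho1 ** 2
    phi1 = rho1 * (1.0 - rho2) / denom
    phi2 = (rho2 - rho1 ** 2) / denom
    return phi1, phi2


rng = random.Random(2)
true_phi1, true_phi2 = 0.5, 0.3  # assumed stationary AR(2)
x = [0.0, 0.0]
for _ in range(20500):
    x.append(true_phi1 * x[-1] + true_phi2 * x[-2] + rng.gauss(0.0, 1.0))
x = x[500:]                      # drop burn-in so start-up effects fade

rho = sample_acf(x, 2)
phi1_hat, phi2_hat = yule_walker_ar2(rho[1], rho[2])
```

The estimates are method-of-moments values; a Gaussian likelihood fit would generally differ slightly.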

ARMA(p,q)

$X_t = \phi_1 X_{t-1} + \dots + \phi_p X_{t-p} + \beta_0 Z_t + \beta_1 Z_{t-1} + \dots + \beta_q Z_{t-q}$

$\phi(B) X_t = \theta(B) Z_t$

ARIMA(p,d,q).

$\nabla^d X_t$ is a stationary ARMA(p,q)

$\nabla X_t = X_t - X_{t-1}$, $\nabla^2 X_t = X_t - 2X_{t-1} + X_{t-2}$

Random walk: $\nabla X_t = X_t - X_{t-1} = (1 - B) X_t = Z_t$, i.e. ARIMA(0,1,0)
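The differencing operator is easy to sketch in code. The helper `difference` and the quadratic test sequence below are assumptions chosen so that the second difference is constant.

```python
def difference(x, d=1):
    """Apply the first-difference operator (1 - B) to the sequence x, d times."""
    for _ in range(d):
        x = [x[t] - x[t - 1] for t in range(1, len(x))]
    return x


x = [t ** 2 for t in range(6)]   # 0, 1, 4, 9, 16, 25 (quadratic trend)
d1 = difference(x)               # first differences
d2 = difference(x, 2)            # second differences

# Direct form of the second difference: X_t - 2 X_{t-1} + X_{t-2}.
d2_direct = [x[t] - 2 * x[t - 1] + x[t - 2] for t in range(2, len(x))]
```

Each application shortens the series by one point, and d differences remove a polynomial trend of degree d.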

arima.mle() fits by maximum likelihood, assuming Gaussian noise

ARMAX.

$\phi(B) Y_t = \beta(B) X_t + \theta(B) Z_t$

arima.mle(…,xreg,…)

State space.

$s_t = F_t(s_{t-1}, z_t)$, $Y_t = H_t(s_t, Z_t)$; could include X
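One way to see the state-space form at work is to write an AR(2) as a two-component state recursion. This sketch assumes time-invariant maps F and H, whereas the slide allows them to depend on t; the coefficients, the seed, and the name `simulate_state_space` are also assumptions.

```python
import random


def simulate_state_space(F, H, s0, state_noise, obs_noise):
    """Iterate s_t = F(s_{t-1}, z_t) and record Y_t = H(s_t, w_t)."""
    s, ys = s0, []
    for z, w in zip(state_noise, obs_noise):
        s = F(s, z)
        ys.append(H(s, w))
    return ys


phi1, phi2 = 0.5, 0.3  # assumed AR(2) coefficients


def F(s, z):
    """State s = (X_t, X_{t-1}); advance the AR(2) one step."""
    return (phi1 * s[0] + phi2 * s[1] + z, s[0])


def H(s, w):
    """Observe the first state component plus measurement noise w."""
    return s[0] + w


rng = random.Random(3)
z = [rng.gauss(0.0, 1.0) for _ in range(100)]
y = simulate_state_space(F, H, (0.0, 0.0), z, [0.0] * len(z))

# Direct AR(2) recursion with the same innovations, for comparison.
x1, x2, direct = 0.0, 0.0, []
for zt in z:
    xt = phi1 * x1 + phi2 * x2 + zt
    direct.append(xt)
    x1, x2 = xt, x1
```

With zero measurement noise the two constructions coincide; a nonzero `obs_noise` would give an AR(2) observed with error.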

Next i.i.d. → mixing stationary process

Mixing has a variety of definitions

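e.g. in the normal case, $\sum_u |c_{YY}(u)| < \infty$; see e.g. Cryer and Chan (2008)

CLT

$m_Y = c_Y^T = \bar{Y} = \sum_{t=1}^{T} Y(t)/T$

Asymptotically normal with

$E\{m_Y\} = c_Y$

$\operatorname{var}\{m_Y\} = T^{-2} \sum_{s=1}^{T} \sum_{t=1}^{T} c_{YY}(s-t)$

$\approx T^{-1} \sum_u c_{YY}(u)$, which is $\sigma_Y^2/T$ if white noise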
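The variance of the sample mean is the double sum of autocovariances divided by T², which for large T is close to the sum of autocovariances over all lags divided by T. The sketch below compares the two for an MA(1); the coefficient 0.6, T = 200, and the function names are assumptions.

```python
def mean_variance_exact(c, T):
    """var of the sample mean: (1/T^2) * sum_{s,t=1}^{T} c(s - t)."""
    return sum(c(s - t)
               for s in range(1, T + 1)
               for t in range(1, T + 1)) / T ** 2


def mean_variance_approx(c, T, max_lag):
    """Large-T approximation: (1/T) * sum over lags |u| <= max_lag of c(u)."""
    return sum(c(u) for u in range(-max_lag, max_lag + 1)) / T


def ma1_acov(u, beta1=0.6, sigma2_z=1.0):
    """Autocovariance of Y(t) = Z(t) + beta1 * Z(t-1) (assumed MA(1))."""
    u = abs(u)
    if u == 0:
        return sigma2_z * (1.0 + beta1 ** 2)
    if u == 1:
        return sigma2_z * beta1
    return 0.0


T = 200
exact = mean_variance_exact(ma1_acov, T)
approx = mean_variance_approx(ma1_acov, T, max_lag=1)
```

Positive autocorrelation makes both values larger than the white-noise figure c_YY(0)/T, so the naive standard error of the mean is too small.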

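OLS.

$Y(t) = \alpha + \beta t + N(t)$

$b = \beta + \sum_t (t - \bar{t})\,N(t) / \sum_t (t - \bar{t})^2$

$= \beta + \sum_t u(t)\,N(t)$, where $u(t) = (t - \bar{t}) / \sum_s (s - \bar{t})^2$

$E(b) = \beta$

$\operatorname{Var}(b) = \sum_s \sum_t u(s)\,u(t)\,c_{NN}(s-t)$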
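The slope-variance formula shows how autocorrelated noise changes the precision of the OLS trend estimate. The sketch below evaluates it for white noise and for noise with AR(1)-type autocovariance 0.7^|u|; T = 50, the value 0.7, and the function names are assumptions.

```python
def ols_slope_weights(T):
    """Weights u(t) = (t - tbar) / sum_s (s - tbar)^2 for t = 1..T."""
    tbar = (T + 1) / 2.0
    ss = sum((t - tbar) ** 2 for t in range(1, T + 1))
    return [(t - tbar) / ss for t in range(1, T + 1)]


def slope_variance(T, c_nn):
    """Var(b) = sum_s sum_t u(s) u(t) c_NN(s - t)."""
    u = ols_slope_weights(T)
    return sum(u[s] * u[t] * c_nn(s - t)
               for s in range(T)
               for t in range(T))


def c_white(d):
    """White noise with unit variance."""
    return 1.0 if d == 0 else 0.0


def c_ar1(d):
    """Unit-variance noise with autocorrelation 0.7**|d| (assumed)."""
    return 0.7 ** abs(d)


T = 50
var_white = slope_variance(T, c_white)  # reduces to 1 / sum (t - tbar)^2
var_ar1 = slope_variance(T, c_ar1)      # inflated by positive correlation
```

With positively correlated noise the usual white-noise formula understates the variance of the slope.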

Cumulants.

cum(Y1,Y2, ...,Yk )

Extends mean, variance, covariance

cum(Y) = E{Y}

cum(Y,Y) = Var{Y}

cum(X,Y) = Cov(X,Y)

DRB (1975)

Proof of ordinary CLT.

$S_T = Y(1) + \dots + Y(T)$

$\operatorname{cum}_k(S_T) = T\,\kappa_k$, by additivity and independence

$\operatorname{cum}_k(S_T/\sqrt{T}) = T^{-k/2} \operatorname{cum}_k(S_T) = O(T \cdot T^{-k/2}) \to 0$ for $k > 2$ as $T \to \infty$

normal cumulants of order > 2 are 0

normal is determined by its moments

$(S_T - T\mu)/\sqrt{T}$ tends in distribution to $N(0, \sigma^2)$
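The decay of the higher cumulants can be tabulated for a concrete distribution. The sketch assumes i.i.d. unit-rate exponential terms, whose k-th cumulant is (k-1)!; that choice and the function names are illustrative only.

```python
import math


def scaled_sum_cumulant(kappa_k, k, T):
    """k-th cumulant of S_T / sqrt(T) for i.i.d. terms:
    T**(-k/2) * (T * kappa_k) = T**(1 - k/2) * kappa_k."""
    return T ** (1.0 - k / 2.0) * kappa_k


def exp_cumulant(k):
    """k-th cumulant of a unit-rate exponential variable: (k - 1)!."""
    return math.factorial(k - 1)


# Rows: T = 1, 100, 10000. Columns: cumulant orders k = 2, 3, 4.
table = {T: [scaled_sum_cumulant(exp_cumulant(k), k, T) for k in (2, 3, 4)]
         for T in (1, 100, 10000)}
```

The second cumulant stays fixed at the variance while the third and fourth shrink like T^(-1/2) and T^(-1), matching the cumulants of a normal limit.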

Stationary series

cumulant functions.

$\operatorname{cum}\{Y(t+u_1), \dots, Y(t+u_{k-1}), Y(t)\} = c_k(t+u_1, \dots, t+u_{k-1}, t) = c_k(u_1, \dots, u_{k-1})$

$k = 2, 3, 4, \dots$

cumulant mixing.

$\sum_u |c_k(u_1, \dots, u_{k-1})| < \infty$, $u = (u_1, \dots, u_{k-1})$
