BS1a Applied Statistics Lectures 9-10nicholls/bs1a/lectures9-10.pdf · 2010. 11. 8. · 5 10 15 20...

Transcript of BS1a Applied Statistics Lectures 9-10nicholls/bs1a/lectures9-10.pdf · 2010. 11. 8. · 5 10 15 20...

BS1a Applied Statistics

Lectures 9-10

Dr Geoff Nicholls

Week 5 MT10

Box-Cox

Observations of y, x1, ..., xp with yk ≥ 0.

y not linear with x1, ..., xp try

y′ = (yλ − 1)/λ

treating λ as an(other) unknown parameter.

(yλ − 1)/λ gives powers of y and log(y).

Likelihood is now

L(β, σ2, λ; y′) ∝ 1

σnexp

− 1

2σ2

∑

k

(y′k − xTk β)2

.

Exercise Compute MLE’s for β, σ2 and λ (trans-

form to get L(β, σ2, λ; y) then maximise - this

is the first exercise of PS4).

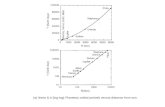

Example: fraction of successful putts as a func-

tion of distance in feet.

> putts<-data.frame(Dist=2:20, Prop=c(0.93,0.83,

0.74,0.59,0.55,0.53,0.46,0.32,0.34,0.32,0.26,

0.24,0.31,0.17,0.13,0.16,0.17,0.14,0.16))

> putts

Dist Prop

1 2 0.93

2 3 0.83

3 4 0.74

...

17 18 0.17

18 19 0.14

19 20 0.16

> y<-(1-putts$Prop)/putts$Prop

> x<-putts$Dist

5 10 15 20

0.2

0.4

0.6

0.8

data

Dist

Pro

p

5 10 15 20

01

23

45

6

y=(1−Prop)/Prop

Dist

y

5 10 15 20

−1

01

23

(y^lambda−1)/lambda

Dist

(y^l

ambd

a −

1)/

lam

bda

5 10 15 20

−2

−1

01

2

log(y)

Dist

log(

y)

The λ value was estimated by maximising the

likelihood, as above.

> putts.bc<-boxcox(y~x)

−2 −1 0 1 2

−10

0−

80−

60−

40−

20

λ

log−

Like

lihoo

d

95%

> putts.lm<-lm(sqrt(y)~x)

> summary(putts.lm)

...

Coefficients:

Estimate Std. Error t value Pr(>|t|)

(Intercept) 0.14342 0.09818 1.461 0.162

x 0.12293 0.00799 15.386 2.07e-11

...√

1 − Prop

Prop= Dist+ ǫ

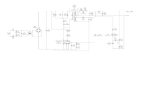

Weighted Regression

Yi = xiβ + ǫ for i = 1,2, ..., n

ǫi ∼ N(0, σ2/wi) non-constant variance.

Eg: Yi = n−1i

∑

j Yi,j yielding var(Yi) ≃ σ2/ni.

Let W = diag(w1, ..., wn) and Y ′ = W1/2Y and

X ′ = W1/2X so

Y ′ = X ′β + ǫ′

ǫ′ ∼ N(0, σ2In)

β = (XT WX)−1XTWY estimates β, and

s2 = (Y − Xβ)TW (Y − Xβ)/(n − p)

is unbiased for σ2. This is WLS.

price

0 20 40 60 80 100

100

300

500

700

020

4060

8010

0

month

100 300 500 700 20 40 60 80 120

2040

6080

120

sales

Example: OHP revisited

OHP data were monthly averages, Sales gives

number of sales by month/House Type

Let wi = sales[i] in the ith month. Very simple

NLM for flats alone.

yk ∼ α + γMxk,M + ǫk, ǫk ∼ N(0, σ2/wi),

Not enough (see below). Signs of σ ∝ E(y).

Would like to consider

wi = 1/E(Yi)2

but we dont have E(Yi) (it is a result of the

regression we want to do). Fit unweighted

then fit wi = 1/y2i or wi = sales[i]/y2

i .

Note that when we refit using the results of the

first fit in the second, we replace one model er-

ror (non-constant variance) with another (cor-

relation between observations). We do at least

diagnose the issue.

> #read the data

> fohp<-read.table(’ohp.txt’,header=TRUE)

> names(fohp)<-c(’price’,’type’,’month’,’sales’)

>

> #collect flats

> ohp<-fohp[ (fohp[,2]==’Flat’),]

#unweighted NLM

> ohp.lm<-lm(price~month,data=ohp)

> yhat<-fitted.values(ohp.lm)

> #weighted by number of sales

> ohp.wlm1<-lm(price~month,weights=ohp$sales,data=ohp)

> #weighted by fitted values (sigma ~ mean price)

> ohp.wlm2<-lm(price~month,weights=1/yhat^2,data=ohp)

> #weighted by sales and fitted values

> ohp.wlm3<-lm(price~month,weights=ohp$sales/yhat^2,data=ohp)

120 140 160 180 200 220 240

−2

02

4

unweighted

stud

entis

ed r

esid

uals

120 140 160 180 200 220 240−

20

24

weighted by sales

120 140 160 180 200 220 240

−2

02

4

weighted by month

fitted values

stud

entis

ed r

esid

uals

120 140 160 180 200 220 240

−2

02

4

weighted by month and sales

fitted values

> summary(ohp.wlm3)

...

Estimate Std. Error t value Pr(>|t|)

(Intercept) 125.15076 2.47997 50.47 <2e-16

month 1.25198 0.05204 24.06 <2e-16

...

Residual standard error: 0.5502 on 98 degrees of freedom

F-statistic: 578.8 on 1 and 98 DF, p-value: < 2.2e-16

Exercise Explain how to compute the RSE s = 0.55 and

F = 578.8.

Exercise We fit the weighted response so r′(e′) ∼ t(n−p−1) and Y ′ are independent under NLM for Y ′. However, R

returns Y = Xβ and e = (Y −Xβ) (ie in original unweighted

coordinates). Verify r′(e) ∼ t(n− p−1) and Y independent

under NLM for Y ′.

Generalised Linear Models

Restrict observation model to Y ∼ f(y|θ)

f(y|θ) = exp

(

yθ − κ(θ)

φ+ c(y;φ)

)

, y ∈ Ω

φ = 1 natural exponential family

φ > 0 natural exponential dispersion family.

exp(κ/φ) =

∫

Ωexp

(

yθ

φ+ c(y)

)

dy

θ ∈ θ; κ(θ) < ∞

y natural observation

θ natural parameter

φ dispersion parameter

Normal: Y ∼ N(µ, σ2) is NEDF

f(y|θ) =1√

2πσ2exp

(

−(y − µ)2

2σ2

)

= exp

(

yµ

σ2− µ2

2σ2− log(2πσ2)

2− y2

2σ2

)

= exp

(

yθ − κ(θ)

φ+ c(y;φ)

)

θ = µ, y ∈ R, φ = σ2,

κ = µ2/2

c(y;φ) = −(1/2) log(2πφ) − y2/2φ

Student’s-t: Y ∼ t(ν) is not in this class

f(y|ν) ∝ (1 + y2/ν)−(

ν+12

)

cant factorise y and ν in exponent.

eκ/φ =

∫

Ωexp

(

yθ

φ+ c(y)

)

dy

κ(θ) cummulant GF (at φ = 1).

κ′eκ/φ =

∫

Ω

(

y

φ

)

exp

(

yθ

φ+ c(y)

)

dy

κ′ = E(Y )

(

(κ′)2

φ2+

k′′

φ

)

eκ/φ =

∫

Ω

(

y

φ

)2

exp

(

yθ

φ+ c(y)

)

dy

(

(κ′)2

φ2+

k′′

φ

)

= E(Y 2)/φ2

var(Y ) = E(Y 2) − E(Y )2

= (κ′)2 + φκ′′ − (κ′)2

= φκ′′.

Let µi = E(Yi), var(Yi) = φV (µi).

Exercisedµi

dθi= V (µi) so µi increases with θi.

Modeling with GLM’s

three decisions fix the model.

Distribution: Yi ∼ f(yi|θ), iid for i = 1,2, ..., n.

given the EV, how is the response distributed?

Linear predictor: η = x1β1 + x2β2 + ... + xpβp

what variables x1, x2, ..., xp should we use?

Link function g(µi) = ηi

(increasing, continuous, differentiable function)

How does mean response increase with LP?

NLM yi = xiβ + ǫi, ǫi ∼ N(0, σ2)

Stochastic, Yi ∼ N(µi, σ2) jointly indpendent

Deterministic, ηi = xTi β, η = Xβ

Link, choice g(µi) = µi gives NLM E(Yi) = xiβ.

Log-Likelihood (GLM)

ℓ(β; y) =n∑

i=1

yiθi − κ(θi)

φ+ c(yi;φ),

with

θi = θ(xiβ)

since µi = κ′(θi) and g(µi) = ηi both invertible

Canonical link function (important special case)

If we choose link function

g(µi) = κ′−1(µi)

then θi = ηi since g(µi) = θi.

Log-Likelihood (canonical link, φ = 1 or known)

ℓ(β; y) =n∑

i=1

yiηi − κ(ηi)

φ+c(y, φ), ηi = xiβ.

Example GLM for a Poisson response

Yi ∼ Poisson(λi), independent E(Yi) = λi.

exp(−λ)λy

y!= exp (y log(λ) − λ − log(y!))

= exp

(

yθ − κ(θ)

φ+ c(y;φ)

)

θ = log(λ), φ = 1, κ(θ) = exp(θ).

Check E(Y ): µ = κ′(θ) = exp(θ) = λ.

Check var(Y ): φV (µ) = κ′′(θ) = λ = µ.

Link function g(µ) = η where η = xβ:

Canonical link g(µ) = κ′−1(µ) = log(µ)

Log-likelihood

ℓ(β; y) =n∑

i=1

yixiβ − exiβ

0.0 0.2 0.4 0.6 0.8 1.0

510

1520

y~Poisson(mu) mu=exp(1+2x)

x

y

Example GLM for Yi ∼ Binomial(m, pi)

f(y|θ) = Cmy py(1 − p)m−y

= exp(y log

(

p

1 − p

)

+ m log(1 − p) + log(Cmy ))

θ = log

(

p

1 − p

)

(log odds), φ = 1

κ(θ) = −m log(1 − p) = m log(1 + exp(θ))

Check E(Y ) = mp:

κ′(θ) = m exp(θ)/(1 + exp(θ)).

Exercise Check variance φV = mp(1 − p).

Canonical link g(µ) = κ′−1(µ)

g(µ) = log

(

µ/m

1 − µ/m

)

Log-likelihood

ℓ(β; y) =n∑

i=1

yixiβ − m log(1 + exiβ)

GLM MLEs

The MLEs β = β for the GLM satisfy scoreequations

∂ℓ(β; y)

∂βi= 0, i = 1,2, ..., p.

β ∈ Rp, ℓ(β; y) : Rp → R.

In terms of the gradient operator

∂ℓ

∂β=

(

∂ℓ

∂β1,

∂ℓ

∂β2, ...,

∂ℓ

∂βp

)T

∂ℓ

∂β= 0 at β = β.

Hessian operator is

∂2ℓ

∂β∂βT=

∂2ℓ∂β2

1

∂2ℓ∂β1∂β2

... ∂2ℓ∂β1∂βp

∂2ℓ∂β2∂β1

∂2ℓ∂β2∂βp

... ...∂2ℓ

∂βp∂β1

∂2ℓ∂βp∂β2

... ∂2ℓ∂β2

p

Observed information

J(y) = −∂2ℓ/∂β∂βT

Expected information

I = −E(∂2ℓ/∂β∂βT)

βD→ N(β, I−1) with n.

Compute I

∂ℓ

∂βi=

n∑

k=1

∂ℓ

∂ηk

∂ηk

∂βi

=∂ℓ

∂ηT

∂η

∂βi∂ℓ

∂βT=

∂ℓ

∂ηT

∂η

∂βT

=∂ℓ

∂ηTX

∂2ℓ

∂ββT=

∂ηT

∂β

∂

∂η

∂ℓ

∂βT

= XT ∂2ℓ

∂η∂ηTX

Let

W = −E

(

∂2ℓ

∂η∂ηT

)

so that I = XTWX and

βD→ N(β, (XTWX)−1).

W is diagonal since ℓ =∑

k ℓk with ℓk = ℓk(ηk).

Wkk = −E

(

∂2ℓ

∂η2k

)

= −E

[

dθk

dηk

]2∂2ℓ

∂θ2k

ηk = g(κ′(θk))

1 =dθk

dηkg′(µk)κ

′′(θk)

dθk

dηk=

1

g′(µk)V (µk)

∂2ℓ

∂θ2k

= −κ′′/φ

Wkk =1

g′(µk)2φV (µk)

Example: Poisson, canonical link

φ = 1, κ(θ) = exp(θ), V (µ) = µ.

Link g(µ) = η, canonical link log(µ) = η.

Log-likelihood

ℓ(β; y) =n∑

i=1

yiηi − eηi

Wkk = −E

(

∂2ℓ

∂η2k

)

= eηk

= µk

=1

g′(µk)2φV (µk)

I = J

= XTdiag(µ1, ..., µn)X