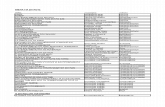

CS361


CS361 Week 12 - Wednesday

Last time

What did we talk about last time?

Sources of 3D data; tessellation; splines and NURBS; triangle fans, strips, and meshes; simplification

Questions?

Project 4

Review

Shading

Lambertian shading

Lambertian (diffuse) shading assumes that outgoing radiance is (linearly) proportional to irradiance

Diffuse exitance: $M_{diff} = c_{diff} E_L \cos\theta$

Because diffuse radiance is assumed to be the same in all directions, we divide by π

Final Lambertian radiance: $L_{diff} = \frac{c_{diff}}{\pi} E_L \cos\theta$

Specular shading

Specular shading depends on the angles the surface normal makes with the light vector and with the view vector

For the calculation, we compute h, the half vector, halfway between v and l:

$$\mathbf{h} = \frac{\mathbf{l} + \mathbf{v}}{\lVert \mathbf{l} + \mathbf{v} \rVert}$$

Specular shading equation

The total specular exitance is almost exactly the same as the total diffuse exitance: $M_{spec} = c_{spec} E_L \cos\theta$

What is seen by the viewer is a fraction of $M_{spec}$ dependent on the half vector h

Final specular radiance:

$$L_{spec} = \frac{m+8}{8\pi} \cos^m\phi_h \, c_{spec} E_L \cos\theta$$

Where does m come from? It's the smoothness parameter

Implementing the shading equation

Final lighting is:

$$L(\mathbf{v}) = \sum_{i=1}^{n} \left( \frac{c_{diff}}{\pi} + \frac{m+8}{8\pi} \cos^m\phi_h \, c_{spec} \right) E_{L_i} \cos\theta_i$$
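As a rough illustration of the final lighting sum, here is a minimal Python sketch. It assumes that the half-vector angle is measured against the surface normal, that colors are RGB tuples multiplied per channel, and that each light is given as a direction plus an irradiance. The function names (`normalize`, `dot`, `shade`) are made up for this example.

```python
import math

def normalize(v):
    """Return v scaled to unit length (zero vectors are returned unchanged)."""
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v) if length > 0 else tuple(v)

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def shade(n, v, lights, c_diff, c_spec, m):
    """Sum diffuse plus specular radiance over all lights.

    n, v   : surface normal and direction toward the viewer
    lights : list of (l, E_L) pairs, direction toward the light and RGB irradiance
    m      : smoothness parameter
    """
    n, v = normalize(n), normalize(v)
    out = [0.0, 0.0, 0.0]
    for l, e_l in lights:
        l = normalize(l)
        cos_i = max(dot(n, l), 0.0)                    # clamped cosine of the incidence angle
        h = normalize(tuple(a + b for a, b in zip(l, v)))  # half vector
        cos_h = max(dot(n, h), 0.0)                    # assumed: angle between n and h
        spec_scale = (m + 8) / (8 * math.pi) * cos_h ** m
        for ch in range(3):
            brdf = c_diff[ch] / math.pi + spec_scale * c_spec[ch]
            out[ch] += brdf * e_l[ch] * cos_i
    return tuple(out)
```

With a light and viewer both straight above the surface and no specular color, the result reduces to the Lambertian term `c_diff / pi`.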

Aliasing

Screen based antialiasing

Jaggies are caused by insufficient sampling

A simple method to increase sampling is full-scene antialiasing, which essentially renders to a higher resolution and then averages neighboring pixels together

The accumulation buffer method is similar, except that the rendering is done with tiny offsets and the pixel values summed together

FSAA schemes

A variety of FSAA schemes exist with different tradeoffs between quality and computational cost

Multisample antialiasing

Supersampling techniques (like FSAA) are very expensive because the full shader has to run multiple times

Multisample antialiasing (MSAA) attempts to sample the same pixel multiple times but only run the shader once

Expensive angle calculations can be done once while different texture colors can be averaged

Color samples are not averaged if they are off the edge of a pixel

Transparency

Sorting

Drawing transparent things correctly is order dependent

One approach is to do the following:

1. Render all the opaque objects
2. Sort the centroids of the transparent objects by distance from the viewer
3. Render the transparent objects in back to front order

To make sure that you don't draw on top of an opaque object, you test against the Z-buffer but don't update it
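The sort-then-blend idea can be sketched for a single pixel as follows. This is only an illustration: it assumes the standard "over" blend (`alpha * source + (1 - alpha) * destination`), which the slides do not name, and it leaves out the Z-buffer test against opaque geometry. The function name and the `(centroid, rgb, alpha)` layout are hypothetical.

```python
def composite_back_to_front(background, transparent, eye):
    """Blend transparent surfaces over a background color, farthest first.

    background  : RGB tuple already produced by the opaque pass
    transparent : list of (centroid, rgb, alpha) tuples
    eye         : viewer position
    """
    def dist2(centroid):
        # Squared distance is enough for ordering.
        return sum((a - b) ** 2 for a, b in zip(centroid, eye))

    ordered = sorted(transparent, key=lambda s: dist2(s[0]), reverse=True)
    color = background
    for _, rgb, alpha in ordered:
        # "Over" blending: assumed here, not stated in the slides.
        color = tuple(alpha * s + (1 - alpha) * d for s, d in zip(rgb, color))
    return color
```

Because the list is sorted inside the function, the result does not depend on the order in which the transparent surfaces are submitted.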

Problems with sorting

It is not always possible to sort polygons, because they can interpenetrate

Hacks:

At the very least, use a Z-buffer test but not replacement

Turning off culling can help

Or render transparent polygons twice: once for each face

Depth peeling

It is possible to use two depth buffers to render transparency correctly

First render all the opaque objects, updating the depth buffer

On the second (and future) rendering passes, render those fragments that are closer than the z values in the first depth buffer but further than the value in the second depth buffer

Repeat the process until no pixels are updated

Texturing

Texturing

We've got polygons, but they are all one color

At most, we could have different colors at each vertex

We want to "paint" a picture on the polygon:

Because the surface is supposed to be colorful

To appear as if there is greater complexity than there is (a texture of bricks rather than a complex geometry of bricks)

To apply other effects to the surface such as changes in material or normal

Textures are usually images, but they could be procedurally generated too

Texture pipeline

We never get tired of pipelines

Go from object space to parameter space

Go from parameter space to texture space

Get the texture value

Transform the texture value

The u, v values are usually in the range [0,1]

Object space → projector function → parameter space → corresponder function → texture space → obtain value → texture value → value transform function → transformed value

Magnification

Magnification is often done by filtering the source texture in one of several ways:

Nearest neighbor (the worst) takes the closest texel to the one needed

Bilinear interpolation linearly interpolates between the four neighbors

Bicubic interpolation probably gives the best visual quality at greater computational expense (and is generally not directly supported)

Detail textures are another approach
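To make the difference between nearest neighbor and bilinear filtering concrete, here is a small Python sketch on a grayscale texture stored as a list of rows. The texel-center convention (centers at `(i + 0.5) / width`) and clamp-to-edge addressing are assumptions of this example, and the function names are hypothetical.

```python
import math

def sample_nearest(tex, u, v):
    """Return the texel whose cell contains (u, v), with u and v in [0, 1]."""
    h, w = len(tex), len(tex[0])
    x = min(int(u * w), w - 1)
    y = min(int(v * h), h - 1)
    return tex[y][x]

def sample_bilinear(tex, u, v):
    """Linearly interpolate between the four texels surrounding (u, v)."""
    h, w = len(tex), len(tex[0])
    # Shift by half a texel so that texel centers sit at integer coordinates.
    x = u * w - 0.5
    y = v * h - 0.5
    x0, y0 = math.floor(x), math.floor(y)
    fx, fy = x - x0, y - y0

    def texel(ix, iy):
        # Clamp to the texture edge (an assumed addressing mode).
        ix = min(max(ix, 0), w - 1)
        iy = min(max(iy, 0), h - 1)
        return tex[iy][ix]

    top = (1 - fx) * texel(x0, y0) + fx * texel(x0 + 1, y0)
    bottom = (1 - fx) * texel(x0, y0 + 1) + fx * texel(x0 + 1, y0 + 1)
    return (1 - fy) * top + fy * bottom
```

On a 2×2 texture with a black left column and a white right column, sampling at the center gives a hard 1.0 with nearest neighbor and a smooth 0.5 with bilinear interpolation.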

Minification

Minification is just as big of a problem (if not bigger)

Bilinear interpolation can work, but an onscreen pixel might be influenced by many more than just its four neighbors

We want to, if possible, have only a single texel per pixel

Main techniques: mipmapping, summed-area tables, anisotropic filtering

Mipmapping in action

Typically a chain of mipmaps is created, each half the size of the previous

That's why cards like square power of 2 textures

Often the filtered version is made with a box filter, but better filters exist

The trick is figuring out which mipmap level to use

The level d can be computed based on the change in u relative to a change in x
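A minimal sketch of both halves of mipmapping: building the chain with a 2×2 box filter, and picking a level d from the rate of change of u per pixel. It assumes a square, power-of-2, grayscale texture, and it uses `log2(texels per pixel)` for d, which is one common choice rather than anything the slides specify. Real hardware also considers v and y. The function names are hypothetical.

```python
import math

def build_mip_chain(tex):
    """Return [level 0, level 1, ...], each level half the size of the previous."""
    chain = [tex]
    while len(chain[-1]) > 1:
        prev = chain[-1]
        half = len(prev) // 2
        # Box filter: each new texel is the average of a 2x2 block.
        level = [[(prev[2 * y][2 * x] + prev[2 * y][2 * x + 1] +
                   prev[2 * y + 1][2 * x] + prev[2 * y + 1][2 * x + 1]) / 4.0
                  for x in range(half)]
                 for y in range(half)]
        chain.append(level)
    return chain

def mip_level(du_dx, tex_size, num_levels):
    """Pick level d from how many texels one pixel step covers along u."""
    texels_per_pixel = abs(du_dx) * tex_size
    d = math.log2(max(texels_per_pixel, 1.0))  # magnification clamps to level 0
    return min(d, num_levels - 1)
```

A fractional d is what trilinear filtering would use to blend between the two nearest levels.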

Trilinear filtering

One way to improve quality is to interpolate bilinearly in u and v within each of the two nearest d levels, then interpolate between those two results

Picking d can be affected by a level of detail bias term, which may vary for the kind of texture being used

Summed-area table

Sometimes we are magnifying in one axis of the texture and minifying in the other

Summed-area tables are another method to reduce the resulting overblurring

A summed-area table sums up the relevant pixel values in the texture

It works by precomputing the sums of all rectangles anchored at the texture origin, so the sum over any axis-aligned rectangle comes from four lookups
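A short sketch of a summed-area table, assuming a grayscale texture stored as a list of rows. Each table entry holds the sum of all texels above and to the left of it, so the sum over any axis-aligned rectangle needs only four lookups. The extra zero row and column and the half-open rectangle convention are choices made for this example.

```python
def build_sat(tex):
    """Build a (h+1) x (w+1) table where sat[y][x] sums tex[0..y-1][0..x-1]."""
    h, w = len(tex), len(tex[0])
    sat = [[0.0] * (w + 1) for _ in range(h + 1)]
    for y in range(h):
        for x in range(w):
            sat[y + 1][x + 1] = (tex[y][x] + sat[y][x + 1]
                                 + sat[y + 1][x] - sat[y][x])
    return sat

def box_average(sat, x0, y0, x1, y1):
    """Average of texels with x0 <= x < x1 and y0 <= y < y1, using four lookups."""
    total = sat[y1][x1] - sat[y0][x1] - sat[y1][x0] + sat[y0][x0]
    return total / ((x1 - x0) * (y1 - y0))
```

The rectangle can be wide in one axis and narrow in the other, which is what helps when a texture is magnified along one axis and minified along the other.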

Anisotropic filtering

Summed-area tables work poorly for non-rectangular projections into texture space

Modern hardware uses unconstrained anisotropic filtering

The shorter side of the projected area determines d, the mipmap index

The longer side of the projected area is a line of anisotropy

Multiple samples are taken along this line

Memory requirements are no greater than regular mipmapping

Alpha Mapping

Alpha values allow for interesting effects

Decaling is when you apply a texture that is mostly transparent to a (usually already textured) surface

Cutouts can be used to give the impression of a much more complex underlying polygon

1-bit alpha doesn't require sorting

Cutouts are not always convincing from every angle

Bump Mapping

Bump mapping

Bump mapping refers to a wide range of techniques designed to increase small scale detail

Most bump mapping is implemented per-pixel in the pixel shader

3D effects of bump mapping are greater than textures alone, but less than full geometry

Normal maps

The results are the same, but these kinds of deformations are usually stored in normal maps

Normal maps give the full 3-component normal change

Normal maps can be in world space (uncommon), which is only usable if the object never moves

Or object space, which requires the object only to undergo rigid body transforms

Or tangent space, which is relative to the surface and can assume positive z

Lighting and the surface have to be in the same space to do shading

Filtering normal maps is tricky

Parallax mapping

Bump mapping doesn't change what can be seen, just the normal

High enough bumps should block each other

Parallax mapping approximates the part of the image you should see by moving from the height back to the view vector and taking the value at that point

The final point used is:

$$\mathbf{p}_{adj} = \mathbf{p} + \frac{h \cdot \mathbf{v}_{xy}}{v_z}$$
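The parallax adjustment, p_adj = p + h · v_xy / v_z, is small enough to write out directly. This sketch assumes the view vector is expressed in tangent space with a positive z component, and that p is a (u, v) texture coordinate. The function name is hypothetical.

```python
def parallax_offset(p, h, view):
    """Shift texture coordinate p by the height h along the view direction.

    p    : (u, v) texture coordinate
    h    : height sampled from the heightfield at p
    view : tangent-space view vector (x, y, z), assumed to have z > 0
    """
    vx, vy, vz = view
    return (p[0] + h * vx / vz, p[1] + h * vy / vz)
```

Looking straight down the normal (`view = (0, 0, 1)`) leaves the coordinate unchanged, while grazing views (small z) produce large shifts, which is where the approximation tends to break down.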

Relief mapping

The weakness of parallax mapping is that it can't tell where it first intersects the heightfield

Samples are made along the view vector into the heightfield

Three different research groups proposed the idea at the same time, all with slightly different techniques for doing the sampling

There is much active research here

Polygon boundaries are still flat in most models

Heightfield texturing

Yet another possibility is to change vertex position based on texture values, which is called displacement mapping

With the geometry shader, new vertices can be created on the fly

Occlusion, self-shadowing, and realistic outlines are possible and fast

Unfortunately, collision detection becomes more difficult

Types of lights

Real light behaves consistently (but in a complex way)

For rendering purposes, we often divide light into categories that are easy to model:

Directional lights (like the sun)

Omni lights (located at a point, but evenly illuminate in all directions)

Spotlights (located at a point and have intensity that varies with direction)

Textured lights (give light projections variety in shape or color)

BRDFs

BRDF theory

The bidirectional reflectance distribution function is a function that describes the ratio of outgoing radiance to incoming irradiance

This function changes based on:

Wavelength

Angle of light to surface

Angle of viewer from surface

For point or directional lights, we do not need differentials and can write the BRDF:

$$f(\mathbf{l}, \mathbf{v}) = \frac{L_o(\mathbf{v})}{E_L \cos\theta_i}$$

Revenge of the BRDF

The BRDF is supposed to account for all the light interactions we discussed in Chapter 5 (reflection and refraction)

We can see the similarity to the lighting equation from Chapter 5, now with a BRDF:

$$L_o(\mathbf{v}) = \sum_{k=1}^{n} f(\mathbf{l}_k, \mathbf{v}) \, E_{L_k} \cos\theta_{i_k}$$

Fresnel reflectance

Fresnel reflectance is an ideal mathematical description of how perfectly smooth materials reflect light

The angle of reflection is the same as the angle of incidence and can be computed:

$$\mathbf{r}_i = 2(\mathbf{n} \cdot \mathbf{l})\mathbf{n} - \mathbf{l}$$

The transmitted (visible) radiance $L_t$ is based on the Fresnel reflectance and the angle of refraction of light into the material:

$$L_t = (1 - R_F(\theta_i)) \frac{\sin^2\theta_i}{\sin^2\theta_t} L_i$$

External reflection

Reflectance is obviously dependent on angle

Perpendicular (0°) gives essentially the specular color of the material

Higher angles will become more reflective

The function RF(θi) is also dependent on material (and the light color)

Snell's Law

The angle of refraction into the material is related to the angle of incidence and the refractive indexes of the materials below the interface and above the interface:

$$n_1 \sin(\theta_i) = n_2 \sin(\theta_t)$$

We can combine this identity with the previous equation:

$$L_t = (1 - R_F(\theta_i)) \frac{n_2^2}{n_1^2} L_i$$
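The reflection vector, Snell's Law, and the transmitted radiance can be sketched together in a few lines of Python. The Fresnel reflectance itself is taken as an input (`r_f`), since the slides do not give a formula for it. Treating a missing refraction angle as total internal reflection is an addition of this example, and all function names are hypothetical.

```python
import math

def reflect(l, n):
    """r_i = 2 (n . l) n - l, for unit vectors l (toward the light) and n."""
    d = sum(a * b for a, b in zip(n, l))
    return tuple(2 * d * nc - lc for nc, lc in zip(n, l))

def refraction_angle(theta_i, n1, n2):
    """Solve n1 sin(theta_i) = n2 sin(theta_t) for theta_t (radians).

    Returns None when no solution exists (total internal reflection).
    """
    s = n1 / n2 * math.sin(theta_i)
    if abs(s) > 1.0:
        return None
    return math.asin(s)

def transmitted_radiance(l_i, r_f, n1, n2):
    """L_t = (1 - R_F(theta_i)) (n2^2 / n1^2) L_i, with r_f supplied by the caller."""
    return (1.0 - r_f) * (n2 * n2) / (n1 * n1) * l_i
```

For example, light entering glass (n2 = 1.5) from air (n1 = 1.0) at 30° refracts to `asin(1/3)`, about 19.5°.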

Area Lighting

Area light sources

Area lights are complex

The book describes the 3D integration over a hemisphere of angles needed to properly quantify radiance

No lights in reality are point lights

All lights have an area that has some effect

Ambient light

The simplest model of indirect light is ambient light

This is light that has a constant value

It doesn't change with direction

It doesn't change with distance

Without modeling occlusion (which usually ends up looking like shadows) ambient lighting can look very bad

We can add ambient lighting to our existing BRDF formulation with a constant term:

$$L_o(\mathbf{v}) = \mathbf{c}_{amb} L_A + \sum_{k=1}^{n} f(\mathbf{l}_k, \mathbf{v}) \, E_{L_k} \cos\theta_{i_k}$$

Environment Mapping

Environment mapping

A more complicated tool for area lighting is environment mapping (EM)

The key assumption of EM is that only direction matters: light sources must be far away, and the object does not reflect itself

In EM, we make a 2D table of the incoming radiance based on direction

Because the table is 2D, we can store it in an image

EM algorithm

Steps:

1. Generate or load a 2D image representing the environment
2. For each pixel that contains a reflective object, compute the normal at the corresponding location on the surface
3. Compute the reflected view vector from the view vector and the normal
4. Use the reflected view vector to compute an index into the environment map
5. Use the texel for incoming radiance

Sphere mapping

Imagine the environment is viewed through a perfectly reflective sphere

The resulting sphere map (also called a light probe) is what you'd see if you photographed such a sphere (like a Christmas ornament)

The sphere map has a basis giving its own coordinate system (h,u,f)

The image was generated by looking along the f axis, with h to the right and u up (all normalized)

Cubic environmental mapping

Cubic environmental mapping is the most popular current method: fast and flexible

Take a camera, render a scene facing in all six directions

Generate six textures

For each point on the surface of the object you're rendering, map to the appropriate texel in the cube
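Mapping a direction to a cube map texel can be sketched as follows: reflect the view vector about the normal, pick the face whose axis has the largest magnitude, and scale the other two components into [0, 1]. The face names and the orientation of the (s, t) coordinates on each face are assumptions of this example. Real graphics APIs each define their own per-face conventions.

```python
def reflect_view(v, n):
    """Reflect v (pointing from the surface toward the eye) about unit normal n."""
    d = sum(a * b for a, b in zip(n, v))
    return tuple(2 * d * nc - vc for nc, vc in zip(n, v))

def cube_lookup(r):
    """Return (face, s, t) for a non-zero direction r, with s and t in [0, 1]."""
    x, y, z = r
    ax, ay, az = abs(x), abs(y), abs(z)
    # The dominant axis selects the face; the other two components index into it.
    if ax >= ay and ax >= az:
        face, major, a, b = ("+x" if x > 0 else "-x"), ax, y, z
    elif ay >= az:
        face, major, a, b = ("+y" if y > 0 else "-y"), ay, x, z
    else:
        face, major, a, b = ("+z" if z > 0 else "-z"), az, x, y
    # Dividing by the dominant component projects onto the face, giving [-1, 1].
    return face, 0.5 * (a / major + 1.0), 0.5 * (b / major + 1.0)
```

Only the direction of r matters, which matches the assumption that the environment is far away.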

Pros and cons of cubic mapping

Pros:

Fast, supported by hardware

View independent

Shader Model 4.0 can generate a cube map in a single pass with the geometry shader

Cons:

It has better sampling uniformity than sphere maps, but not perfect (isocubes improve this)

Still requires high dynamic range textures (lots of memory)

Still only works for distant objects

Glossy reflections

We have talked about using environment mapping for mirror-like surfaces

The same idea can be applied to glossy (but not perfect) reflections

By blurring the environment map texture, the surface will appear rougher

For surfaces with varying roughness, we can simply access different mipmap levels on the cube map texture

Irradiance environment mapping

Environment mapping can be used for diffuse colors as well

Such maps are called irradiance environment maps

Because the viewing angle is not important for diffuse colors, only the surface normal is used to decide what part of the irradiance map is used

Global Illumination

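The true rendering equation

The reflectance equation we have been studying is:

$$L_o(\mathbf{p}, \mathbf{v}) = \int_{\Omega} f(\mathbf{l}, \mathbf{v}) \, L_i(\mathbf{p}, \mathbf{l}) \cos\theta_i \, d\omega_i$$

The full rendering equation is:

$$L_o(\mathbf{p}, \mathbf{v}) = \int_{\Omega} f(\mathbf{l}, \mathbf{v}) \, L_o(r(\mathbf{p}, \mathbf{l}), -\mathbf{l}) \cos\theta_i \, d\omega_i$$

The difference is the $L_o(r(\mathbf{p}, \mathbf{l}), -\mathbf{l})$ term, which means that the incoming light to our point is the outgoing light from some other point

Unfortunately, this is all recursive (and can go on nearly forever)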

Local lighting

Real-time rendering uses local (non-recursive) lighting whenever possible

Global illumination causes all of our problems (unbounded object-object interaction): transparency, reflections, shadows

Shadows

Shadows

Shadow terminology:

Occluder: object that blocks the light

Receiver: object the shadow is cast onto

Point lights cast hard shadows (regions are completely shadowed or not)

Area lights cast soft shadows

Umbra is the fully shadowed part

Penumbra is the partially shadowed part

Projection shadows

A planar shadow occurs when an object casts a shadow on a flat surface

Projection shadows are a technique for making planar shadows:

1. Render the object normally
2. Project the entire object onto the surface
3. Render the object a second time with all its polygons set to black

The book gives the projection matrix for arbitrary planes
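The projection step can be illustrated per vertex without the matrix form. This sketch intersects the ray from a point light through a vertex with the plane n · x + d = 0. It is a plain ray-plane intersection written for this example, not the book's matrix, and the function name is hypothetical.

```python
def project_onto_plane(vertex, light, n, d):
    """Project vertex away from a point light onto the plane n . x + d = 0.

    Returns (point, t), where point = light + t * (vertex - light),
    or None if the ray is parallel to the plane.
    """
    direction = tuple(v - l for v, l in zip(vertex, light))
    denom = sum(nc * dc for nc, dc in zip(n, direction))
    if denom == 0:
        return None
    t = -(d + sum(nc * lc for nc, lc in zip(n, light))) / denom
    point = tuple(l + t * dc for l, dc in zip(light, direction))
    return point, t
```

A negative t means the projected point lies behind the light relative to the vertex, which is the situation where the light sits between the occluder and the receiver and an antishadow appears.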

Problems with projection shadows

We need to bias (offset) the plane just a little bit; otherwise, we get z fighting and the shadows can be below the surface

Shadows can be drawn larger than the plane; the stencil buffer can be used to fix this

Only opaque shadows work

Partially transparent shadows will make some parts too dark

Z-buffer and stencil buffer tricks can help with this too

Shadows have hard edges

Hard to see example from Shogo: MAD

Other projection shadow issues

Another fix for projection shadows is rendering them to a texture, then rendering the texture

Effects like blurring the texture can make shadows softer

If the light source is between the occluder and the receiver, an antishadow is generated

Soft shadows

True soft shadows occur due to area lights

We can simulate area lights with a number of point lights

For each point light, we draw a shadow in an accumulation buffer

We use the accumulation buffer as a texture drawn on the surface

Alternatively, we can move the receiver up and down slightly and average those results

Both methods can require many passes to get good results

Convolution (blurring)

You can just blur based on the amount of distance from the occluder to the receiver

It doesn't always look right if the occluder touches the receiver

Haines's method is to paint the silhouette of the hard shadow with gradients

The width is proportional to the height of the silhouette edge casting the shadow

Planar shadows summarized

Project the object onto a plane:

Gives a hard shadow

Needs tricks if the object is bigger than the plane

Get an antishadow if the light is between occluder and receiver

Soften the shadow:

Render the shadow multiple times

Blur the projection

Put gradients around the edges

Shadows on a curved surface

Think of shadows from the light's perspective

It "sees" whatever is not blocked by the occluder

We can render the occluder as black onto a white texture

Then, compute (u,v) coordinates of this texture for each triangle on the receiver

There is often hardware support for this

This is known as the shadow texture technique

Shadow volumes

Shadow volumes are another technique for casting shadows onto arbitrary objects

Setup:

Imagine a point and a triangle

Extending lines from the point through the triangle vertices makes an infinite pyramid

If the point is a light, anything in the truncated pyramid under the triangle is in shadow

The truncated pyramid is the shadow volume

Shadow volumes in principle

Follow a ray from the eye through a pixel until it hits the object to be displayed

Increment a counter each time the ray crosses a frontfacing face of the shadow volume

Decrement a counter each time the ray crosses a backfacing face of the shadow volume

If the counter is greater than zero, the pixel is in shadow

Idea works for multiple triangles casting a shadow

Shadow volumes in practice

Calculating this geometrically is tedious and slow in software

We use the stencil buffer instead:

1. Clear the stencil buffer
2. Draw the scene into the frame buffer (storing color and Z-data)
3. Turn off Z-buffer writing (but leave testing on)
4. Draw the frontfacing polygons of the shadow volumes on the stencil buffer (with incrementing done)
5. Then draw the backfacing polygons of the shadow volumes on the stencil buffer (with decrementing done)

Because of the Z-test, only the visible shadow polygons are drawn on the stencil

Finally, draw the scene but only where the stencil buffer is zero

Shadow mapping

Another technique is to render the scene from the perspective of the light using the Z-buffer algorithm, but with all the lighting turned off

The Z-buffer then gives a shadow map, showing the depths of every pixel

Then, we render the scene from the viewer's perspective, and darken those pixels that are further from the light than their corresponding point in the shadow map

Shadow mapping issues

Strengths:

A shadow map is fast

Linear for the number of objects

Weaknesses:

A shadow map only works for a single light

Objects can shadow themselves (a bias needs to be used)

Too high of a bias can make shadows look wrong
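The shadow map test and the role of the bias can be shown with a toy one-dimensional shadow map. This is a sketch under simplifying assumptions: fragments arrive already projected into the light's view as `(texel index, depth from light)` pairs, and the default bias value is arbitrary. The function names are hypothetical.

```python
def build_shadow_map(fragments, size):
    """Keep the nearest depth per texel, as a Z-buffer rendered from the light would."""
    shadow_map = [float("inf")] * size
    for texel, depth in fragments:
        shadow_map[texel] = min(shadow_map[texel], depth)
    return shadow_map

def is_shadowed(shadow_map, texel, depth_from_light, bias=0.01):
    """A point is shadowed if it is farther from the light than the stored depth.

    The bias keeps a surface from shadowing itself due to precision error.
    """
    return depth_from_light - bias > shadow_map[texel]
```

With a bias of zero, a point that is only slightly deeper than its own stored depth is reported as shadowed, which is the self-shadowing problem. With too large a bias, genuinely shadowed points close behind an occluder are missed.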

Ambient Occlusion

Ambient occlusion

Ambient lighting is completely even and directionless

As a consequence, objects in ambient lighting without shadows look very flat

Ambient occlusion is an attempt to add shadowing to ambient lighting

Ambient occlusion theory

Without taking occlusion into account, the ambient irradiance is constant:

$$E(\mathbf{p}, \mathbf{n}) = \pi L_A$$

But for points that are blocked in some way, the irradiance will be less

We use the factor $k_A(\mathbf{p})$ to represent the fraction of the total irradiance available at point p:

$$E(\mathbf{p}, \mathbf{n}) = k_A(\mathbf{p}) \, \pi L_A$$

Computing kA

The trick, of course, is how to compute kA

The visibility function approach checks to see if a ray cast from a direction intersects any other object before reaching a point

We average over all (or many) directions to get the final value

Doesn't work (without modifications) for a closed room

Obscurance is similar, except that it is based on the distance of the intersection of the ray cast, not just whether or not it does
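A rough sketch of the visibility function approach: cast rays over the hemisphere above a point and average how many escape. The assumptions here are that occluders are spheres, that the surface normal is +z, that directions come from a fixed grid rather than random sampling, and that each direction is weighted by the cosine of its angle from the normal (matching how irradiance is weighted). All names are hypothetical.

```python
import math

def ray_hits_sphere(origin, direction, center, radius):
    """True if the ray origin + t * direction (t > 0, unit direction) hits the sphere."""
    oc = tuple(o - c for o, c in zip(origin, center))
    b = sum(a * d for a, d in zip(oc, direction))
    c = sum(a * a for a in oc) - radius * radius
    disc = b * b - c
    if disc < 0:
        return False
    root = math.sqrt(disc)
    return (-b - root) > 1e-6 or (-b + root) > 1e-6

def ambient_factor(p, spheres, steps=32):
    """Estimate k_A at p: the cosine-weighted fraction of unblocked directions."""
    visible = 0.0
    total = 0.0
    for i in range(steps):
        theta = (i + 0.5) / steps * (math.pi / 2)  # angle from the normal
        for j in range(steps):
            phi = (j + 0.5) / steps * 2 * math.pi
            d = (math.sin(theta) * math.cos(phi),
                 math.sin(theta) * math.sin(phi),
                 math.cos(theta))
            # Cosine term times the solid angle of this grid cell (up to a constant).
            weight = math.cos(theta) * math.sin(theta)
            total += weight
            if not any(ray_hits_sphere(p, d, c, r) for c, r in spheres):
                visible += weight
    return visible / total
```

An open point gives 1.0, and a point enclosed by a sphere gives 0.0, which illustrates why the unmodified approach fails for a closed room: every ray hits something.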

Screen space ambient occlusion

Screen space ambient occlusion methods have become popular in recent video games such as Crysis and StarCraft 2

Scene complexity isn't an issue

In Crysis, sample points around each point are tested against the Z-buffer

More points that are behind the visible Z-buffer give a more occluded point

A very inexpensive technique is to use an unsharp mask (a filter that emphasizes edges) on the Z-buffer, and use the result to darken the image

Reflections

Environment mapping

We already talked about reflections!

Environment mapping was our solution

But it only works for distant objects

Reflection rendering

The reflected object can be copied, moved to reflection space and rendered there

Lighting must also be reflected

Or the viewpoint can be reflected

Hiding incorrect reflections

This problem can be solved by using the stencil buffer

The stencil buffer is set to areas where a reflector is present

Then the reflector scene is rendered with stenciling on

Curved Reflections

Ray tracing can be used to create general reflections

Environment mapping can be used for recursive reflections in curved surfaces

To do so, render the scene repeatedly in 6 directions for each reflective object

Transmittance

Transmittance is the amount of light that passes through a sample

When the materials are all a uniform thickness, a simple color filter can be used

Otherwise, the Beer-Lambert Law must be used to compute the effect on the light: $T = e^{-\alpha' c d}$
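The Beer-Lambert Law, T = e^(−α′cd), is a one-liner in code. This sketch assumes the usual reading of the symbols (α′ as an absorption coefficient, c as the concentration of the absorbing material, d as the distance traveled through it). The slides do not define them, so treat the parameter names as assumptions.

```python
import math

def transmittance(alpha, c, d):
    """Fraction of light surviving a path of length d through the medium.

    alpha : absorption coefficient (assumed meaning of alpha')
    c     : concentration of the absorbing material (assumed meaning)
    d     : distance traveled through the medium
    """
    return math.exp(-alpha * c * d)
```

Doubling the thickness squares the transmittance, which is why a single constant color filter only works when every path has the same thickness.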

Refraction

Refraction is how a wave bends when the medium it is traveling through changes

Examples with light: a pencil looks bent in water; mirages

Snell's Law

The amount of refraction is governed by Snell's Law

It relates the angle of incidence and the angle of refraction of light by the equation:

$$\frac{\sin\theta_1}{\sin\theta_2} = \frac{n_2}{n_1}$$

Caustics

Light is focused by reflective or refractive surfaces

A caustic is a curve or surface of concentrated light

Reflective:

Refraction:

Global subsurface scattering

Subsurface scattering is where light enters an object, bounces around, and exits at a different point than it entered

Causes: foreign particles (pearls), discontinuities (air bubbles), density variations, structural changes

Radiosity

To create a realistic scene, it is necessary for light to bounce between surfaces many times

This causes subtle effects in how light and shadow interact

This also causes certain lighting effects such as color bleeding (where the color of an object is projected onto nearby surfaces)

Computing radiosity

Radiosity simulates this

Turn on the light sources and allow the environmental light to reach equilibrium (stable state)

While the light is in stable state, each surface may be treated as a light source

The equilibrium is found by forming a square matrix out of form factors for each patch times the patch's reflectivity

Gaussian elimination on the resulting matrix gives the exitance (color) of the patch in question
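To show what the matrix solve looks like, here is a small sketch that assumes the standard radiosity balance B_i = E_i + ρ_i Σ_j F_ij B_j, rearranged into the linear system (I − ρF) B = E. The slides do not spell this equation out, so treat the exact form as an assumption. The solver is plain Gaussian elimination with partial pivoting, and all names are hypothetical.

```python
def solve(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for k in range(col, n + 1):
                m[r][k] -= f * m[col][k]
    # Back substitution.
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        x[i] = (m[i][n] - sum(m[i][k] * x[k] for k in range(i + 1, n))) / m[i][i]
    return x

def radiosity(emission, reflectivity, form_factors):
    """Return the equilibrium exitance of each patch (one color channel)."""
    n = len(emission)
    a = [[(1.0 if i == j else 0.0) - reflectivity[i] * form_factors[i][j]
          for j in range(n)]
         for i in range(n)]
    return solve(a, emission)
```

For two patches that see only each other (form factors of 1), with reflectivity 0.5 and only the first patch emitting 1.0, the solve gives exitances of 4/3 and 2/3: the non-emitting patch is lit purely by bounced light.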

Classical ray tracing

Trace rays from the camera through the screen to the closest object, the intersection point

For each intersection point, rays are traced:

A ray to each light source

If the object is shiny, a reflection ray

If the object is not opaque, a refraction ray

Opaque objects can block the rays, while transparent objects attenuate the light

Repeat recursively until all points on the screen are calculated

Monte Carlo ray tracing

Classical ray tracing is relatively fast but performs poorly for environmental lighting and diffuse interreflections

In Monte Carlo ray tracing, ray directions are randomly chosen, weighted by the BRDF

This is called importance sampling.

Monte Carlo ray tracing gets excellent results but takes a huge amount of time

Precomputed lighting

Full global illumination is expensive

If the scene and lighting are static, much can be precomputed

Simple surface prelighting uses a radiosity render to determine diffuse lighting ahead of time

Directional surface prelighting stores directional lighting information that can be used for specular effects, but it is much more expensive in memory

Volume information can be precomputed to light dynamic objects

Precomputed Occlusion

Global illumination algorithms precompute various quantities other than lighting

Often, a measure of how much parts of a scene block light is computed (bent normal, occlusion factor)

These precomputed occlusion quantities can be applied to changing light in a scene

They create a more realistic appearance than precomputed lighting alone

Precomputed Ambient Occlusion

Precomputed ambient occlusion factors are only valid on stationary objects (example: a racetrack)

For moving objects (like a car), ambient occlusion can be computed on a large flat plane

This works for rigid objects, but deformable objects would need many precomputed poses (example: a human)

Also can be used to model occlusion effects of objects on each other

Precomputed radiance transfer

The total effect of dynamic lighting conditions can be precomputed and approximated

This method is trying to take into account all possible lightings from all possible angles

Computing all this stuff is difficult, but a compact representation is even more difficult

Spherical harmonics is a way of storing the data, sampled in many directions

Storage requirements can be large

Results generally hold only for distant lights and diffuse shading

Image Based Effects

Rendering spectrum

We can imagine all the different rendering techniques as sitting on a spectrum reaching from purely appearance based to purely physically based

Spectrum endpoints: appearance based, physically based

Techniques placed along it: sprites, billboards, lightfields, global illumination

Skyboxes

When objects are close to the viewer, small changes in viewing location can have big effects

When objects are far away, the effect is much smaller

As you know by now, a skybox is a large mesh containing the entire scene

We have not set up our skyboxes correctly:

They should never move relative to the viewer

They are much more effective when there is a lot of other stuff to look at

Some (our) skyboxes look crappy because there isn't enough resolution

Minimum texture resolution (per cube face):

$$\frac{\text{screen resolution}}{\tan(\text{fov}/2)}$$
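The minimum skybox resolution, screen resolution divided by tan(fov/2), is easy to evaluate for a concrete case. This sketch assumes the field of view is given in degrees and that "screen resolution" means the pixel count along the same axis the field of view is measured on. The function name is hypothetical.

```python
import math

def skybox_face_resolution(screen_resolution, fov_degrees):
    """Minimum texels per cube face edge: screen resolution / tan(fov / 2)."""
    return screen_resolution / math.tan(math.radians(fov_degrees) / 2)
```

A 90° field of view needs roughly one texel per screen pixel, while a narrower 60° view of a 1024-pixel screen needs about 1774 texels per face edge, because fewer texels are stretched across the same screen.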

Lightfields

If you are trying to recreate a complex scene from reality, you can take millions of pictures of it from many possible angles

Then, you can use interpolation and warping techniques to stitch them together

Huge data storage requirements

Each photograph must be catalogued based on location and orientation

High realism output!

Sprites and layers

A sprite is an image that moves around the screen

Sprites were the basis of most old 2D video games (back when those existed, before the advent of Flash)

By putting sprites in layers, it is possible to make a compelling scene

Sequencing sprites can achieve animation

Billboarding

Applying sprites to 3D gives billboarding

Billboarding is orienting a textured polygon based on view direction

Billboarding can be effective for objects without solid surfaces

If the object is supposed to exist in the world, it needs to change as the world changes

For small sprites (such as particles) the billboard's surface normal can be the negation of the view plane normal

Larger sprites should have different normals that point the billboard directly at the viewpoint

Particle systems

In a particle system, many small, separate objects are controlled using some algorithm

Applications: fire, smoke, explosions, water

Particle systems refer more to the animation than to the rendering

Particles can be points or lines or billboards

Modern GPUs can generate and render particles in hardware

Impostors

An impostor is a billboard created on the fly by rendering a complex object to a texture

Then, the impostor can be rendered more cheaply

This technique should be used to speed up the rendering of far away objects

The resolution of the texture should be at least:

$$\text{screen resolution} \times \frac{\text{object size}}{2 \times \text{distance} \times \tan(\text{fov}/2)}$$

Impostors need to be updated periodically as the viewpoint changes
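The impostor resolution bound, screen resolution × object size / (2 × distance × tan(fov/2)), can be checked with a few numbers. This sketch assumes object size and distance are in the same world units and the field of view is in degrees. The function name is hypothetical.

```python
import math

def impostor_resolution(screen_resolution, object_size, distance, fov_degrees):
    """Minimum impostor texture resolution for an object at the given distance."""
    half_fov = math.radians(fov_degrees) / 2
    return screen_resolution * object_size / (2 * distance * math.tan(half_fov))
```

Doubling the distance halves the required resolution, which is why impostors pay off for far away objects and why they need refreshing as the viewer approaches.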

Billboard clouds

Impostors have to be recomputed for different viewing angles

Certain kinds of models (trees are a great example) can be approximated by a cloud of billboards

Finding a visually realistic set of cutouts is one part of the problem

The rendering overhead of overdrawing is another

Billboards may need to be sorted if transparency effects are important

Image processing

Image processing takes an input image and manipulates it: blurring, edge detection, color correction, tone mapping

Much of this can be done on the GPU

Special effects

Lens flare is an effect caused by bright light hitting the crystalline structure of a camera lens

The bloom effect is where bright areas spill over into other areas

Depth of field simulates a camera's behavior of blurring objects based on how far they are from the focal plane

Motion blur blurs fast moving objects

Fog can be implemented by blending a color based on distance of an object from the viewpoint

Quiz

Upcoming

Next time…

Exam 2

Reminders

Exam 2 is this Friday in class

Review chapters 5 – 10

Start working on Project 4

Tell me who your teams are!

Database engineer position (for graduating seniors): https://www.moodys.jobs/TGWebHost/searchopenings.aspx

Enter job ID 5534BR