Calculation of Lagrange multipliers and their use for sensitivity of optimal solutions

Jaco F. Schutte

EGM 6365 - Structural Optimization, Fall 2005

Constrained optimization

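[Figure: design space with axes x1 and x2, showing the feasible region, the infeasible regions, the constraints h(x) and g(x), the direction of decreasing f(x), and the optimum.]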

Constraint normalization

$$
\sigma \le \sigma_a \quad\Rightarrow\quad g = \sigma - \sigma_a \le 0
$$

$$
g = 1 - \frac{\sigma}{\sigma_a} \ge 0
$$

Poor optimizer performance is often encountered when constraints are not normalized

When normalized, g = 0.1 → 10% margin in response
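A minimal sketch of why normalization helps, using hypothetical stress values (the function name `stress_margin` and all numbers are assumptions for illustration): the normalized constraint reads as a relative margin and does not change with the unit system, while the un-normalized difference does.

```python
def stress_margin(sigma: float, sigma_allow: float) -> float:
    """Normalized stress constraint g = 1 - sigma / sigma_allow (feasible when g >= 0)."""
    return 1.0 - sigma / sigma_allow

# Hypothetical numbers: the same design expressed in two unit systems.
g_pa = stress_margin(sigma=225.0e6, sigma_allow=250.0e6)   # stresses in Pa
g_mpa = stress_margin(sigma=225.0, sigma_allow=250.0)      # stresses in MPa

# Un-normalized form g = sigma - sigma_allow (feasible when g <= 0) scales with the units.
raw_pa = 225.0e6 - 250.0e6
raw_mpa = 225.0 - 250.0

print(f"normalized:    {g_pa:.3f} (Pa)  {g_mpa:.3f} (MPa)")
print(f"un-normalized: {raw_pa:.3e} (Pa)  {raw_mpa:.3e} (MPa)")
```

Both normalized values are about 0.1, that is, a 10% margin, whereas the un-normalized values differ by six orders of magnitude.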

Equality constraints

- Convert each equality constraint to two inequality constraints
- Increases the number of constraints

$$
h_i(x) = 0 \iff
\begin{cases}
h_i(x) \le 0 \\
h_i(x) \ge 0
\end{cases}
$$

Reduction of inequality constraints

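- Using the KS function (Kreisselmeier-Steinhauser)

$$
\left.
\begin{aligned}
g_1(x) &\ge 0 \\
g_2(x) &\ge 0 \\
&\ \,\vdots \\
g_m(x) &\ge 0
\end{aligned}
\right\}
\iff
KS[g_j(x)] = -\frac{1}{\rho} \ln\left[\sum_{j} e^{-\rho g_j(x)}\right]
$$

- KS is bounded by

$$
g_{\min} - \frac{\ln m}{\rho} \le KS[g_j(x)] \le g_{\min}
$$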
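The KS envelope and its bounds can be checked numerically. The sketch below assumes constraints written as g_j(x) ≥ 0 and uses hypothetical constraint values; the shift by g_min inside the function is a numerical-stability choice, not something taken from the slides.

```python
import math

def ks(g, rho):
    """KS envelope of constraints g_j >= 0: -(1/rho) * ln(sum_j exp(-rho * g_j))."""
    g_min = min(g)
    # Factor out exp(-rho * g_min) so the exponentials cannot overflow for large rho.
    total = sum(math.exp(-rho * (gj - g_min)) for gj in g)
    return g_min - math.log(total) / rho

g = [0.30, 0.05, 0.12]  # hypothetical constraint values
for rho in (5.0, 50.0, 500.0):
    value = ks(g, rho)
    lower = min(g) - math.log(len(g)) / rho
    print(f"rho={rho:6.1f}  lower bound={lower:.4f}  KS={value:.4f}  g_min={min(g):.4f}")
```

As ρ grows, the lower bound tightens and KS approaches the most critical constraint value g_min from below, so KS ≥ 0 is a conservative replacement for the full constraint set.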

Using KS to approximate hi(x)

For

$$
h_i(x) = 0 \iff
\begin{cases}
h_i(x) \le 0 \\
-h_i(x) \le 0
\end{cases}
$$

the solution lies at h_i(x) = −h_i(x) = 0

The gradient of the KS function of the ±h_i pair vanishes at the solution h_i = 0

The value of the KS function approaches 0 for ρ → ∞

$$
0 \ge KS(h, -h) \ge -\frac{\ln 2}{\rho}
$$
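A short numerical illustration of these two properties, assuming the KS function is applied to the pair (h, −h) in the form −(1/ρ) ln(e^{−ρh} + e^{ρh}). The helper names `ks_pair` and `ks_pair_slope` are hypothetical.

```python
import math

def ks_pair(h, rho):
    """KS envelope of the pair (h, -h): -(1/rho) * ln(exp(-rho*h) + exp(rho*h))."""
    # Rewritten as -|h| - ln(1 + exp(-2*rho*|h|)) / rho to avoid overflow.
    a = abs(h)
    return -a - math.log1p(math.exp(-2.0 * rho * a)) / rho

def ks_pair_slope(h, rho):
    """Analytic derivative d(KS)/dh = -tanh(rho * h)."""
    return -math.tanh(rho * h)

for rho in (1.0, 10.0, 100.0):
    print(f"rho={rho:6.1f}  KS(0)={ks_pair(0.0, rho):+.5f}  "
          f"-ln(2)/rho={-math.log(2.0) / rho:+.5f}  slope at 0={ks_pair_slope(0.0, rho):+.1f}")
```

At h = 0 the value equals −ln(2)/ρ, which tends to 0 as ρ increases, and the slope is zero, so the KS function is stationary at the solution of the equality constraint.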

Kuhn-Tucker conditions

Stationary points

- Lagrangian function

where λ_j are unknown Lagrange multipliers

- Stationary point conditions for equality constraints:
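For reference, a standard form of the Lagrangian and its stationary point conditions is sketched below for equality constraints $h_j(x) = 0$, $j = 1, \dots, n_e$, which is the case that gives the count of $n + n_e$ equations. The minus sign in front of the multiplier term is an assumption; some texts use a plus sign, which only flips the sign of $\lambda_j$.

$$
\mathcal{L}(x, \lambda) = f(x) - \sum_{j=1}^{n_e} \lambda_j h_j(x)
$$

$$
\frac{\partial \mathcal{L}}{\partial x_i} = \frac{\partial f}{\partial x_i} - \sum_{j=1}^{n_e} \lambda_j \frac{\partial h_j}{\partial x_i} = 0, \quad i = 1, \dots, n
$$

$$
\frac{\partial \mathcal{L}}{\partial \lambda_j} = -h_j(x) = 0, \quad j = 1, \dots, n_e
$$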

Kuhn-Tucker conditions (contd.)

- Conditions only apply at a regular point (constraint gradients linearly independent)
- The stationary point equations yield n + n_e total equations for
  - n stationary point coordinates
  - n_e Lagrange multipliers
- Inequality constraints require transformation to equality constraints:
- This yields the following Lagrangian:
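A common way to write this transformation, sketched here under the assumption that the inequality constraints are expressed as $g_j(x) \ge 0$, $j = 1, \dots, n_g$, is to subtract the square of a slack variable $t_j$ from each constraint. The sign of the multiplier term is likewise an assumption.

$$
g_j(x) - t_j^2 = 0, \quad j = 1, \dots, n_g
$$

$$
\mathcal{L}(x, t, \lambda) = f(x) - \sum_{j=1}^{n_g} \lambda_j \left( g_j(x) - t_j^2 \right)
$$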

Kuhn-Tucker conditions (contd.)

Kuhn-Tucker conditions (contd.)

- Conditions for stationary points are then:
- If an inequality constraint is inactive (t ≠ 0), then its Lagrange multiplier is 0
- For inequality constraints, a regular point is one where the gradients of the active constraints are linearly independent
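As a sketch, assuming constraints written as $g_j(x) \ge 0$ with slack variables $t_j$ and the Lagrangian $\mathcal{L} = f - \sum_j \lambda_j (g_j - t_j^2)$ (the sign convention is an assumption), differentiating with respect to each set of unknowns gives:

$$
\frac{\partial \mathcal{L}}{\partial x_i} = \frac{\partial f}{\partial x_i} - \sum_{j=1}^{n_g} \lambda_j \frac{\partial g_j}{\partial x_i} = 0, \quad i = 1, \dots, n
$$

$$
\frac{\partial \mathcal{L}}{\partial \lambda_j} = -\left( g_j - t_j^2 \right) = 0, \quad j = 1, \dots, n_g
$$

$$
\frac{\partial \mathcal{L}}{\partial t_j} = 2 \lambda_j t_j = 0, \quad j = 1, \dots, n_g
$$

The last equation is what forces $\lambda_j = 0$ whenever $t_j \ne 0$, that is, whenever $g_j > 0$ and the constraint is inactive.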

Kuhn-Tucker conditions (contd.)

- For an inequality constrained problem (constraints written as g_j(x) ≥ 0), x can be a minimum only if a set of non-negative λ_j can be found such that:

1) $\nabla f - \sum_j \lambda_j \nabla g_j = 0$

2) The corresponding λ_j is zero if constraint g_j is not active
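These conditions can be checked numerically at a candidate point. The sketch below assumes constraints written as g_j(x) ≥ 0 and uses a hypothetical two-variable problem (minimize x1² + x2² subject to g1 = x1 + x2 − 1 ≥ 0 and g2 = x1 ≥ 0); the function name `check_kkt` and the tolerance are illustrative choices.

```python
def check_kkt(grad_f, grad_g, g_vals, lam, tol=1e-9):
    """Check the Kuhn-Tucker conditions for constraints written as g_j(x) >= 0."""
    n = len(grad_f)
    # 1) Stationarity: grad f - sum_j lam_j * grad g_j = 0
    residual = [grad_f[i] - sum(lj * gg[i] for lj, gg in zip(lam, grad_g))
                for i in range(n)]
    stationary = all(abs(r) <= tol for r in residual)
    # Multipliers must be non-negative and the point must be feasible.
    nonneg = all(lj >= -tol for lj in lam)
    feasible = all(gj >= -tol for gj in g_vals)
    # 2) lam_j = 0 whenever g_j is inactive (g_j > 0)
    complementary = all(abs(lj * gj) <= tol for lj, gj in zip(lam, g_vals))
    return stationary and nonneg and feasible and complementary

grad_g = [[1.0, 1.0], [1.0, 0.0]]  # gradients of g1 and g2 (constant here)

# Candidate x = (0.5, 0.5): g1 active, g2 inactive, lam = (1, 0).
x = (0.5, 0.5)
at_optimum = check_kkt([2 * x[0], 2 * x[1]], grad_g,
                       [x[0] + x[1] - 1.0, x[0]], lam=[1.0, 0.0])

# Feasible point x = (1, 0): stationarity would need lam2 = 2 on the inactive g2.
x = (1.0, 0.0)
at_corner = check_kkt([2 * x[0], 2 * x[1]], grad_g,
                      [x[0] + x[1] - 1.0, x[0]], lam=[0.0, 2.0])

print(at_optimum, at_corner)
```

The second point fails because the only multipliers that satisfy condition 1) put a non-zero multiplier on an inactive constraint, which violates condition 2).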

Kuhn-Tucker conditions (contd.)

Kuhn-Tucker graphical representation

Sufficient conditions

- Kuhn-Tucker conditions are satisfied when no move with a component along −∇f (a descent direction) can be made without violating constraints
- It may still be possible to move perpendicular to the constraint gradients and improve the objective function (necessary conditions are met, but not sufficient)
- Kuhn-Tucker conditions for optimality are sufficient when
  - the number of design variables equals the number of active constraints,
  - or the Hessian of the Lagrangian function is positive definite on the subspace tangent to the active constraints

Sufficient conditions (contd.)

- For equality constraints

where

- For inequality constraints
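A common statement of these second-order conditions is sketched below. It assumes the Lagrangian is written as $\mathcal{L} = f - \sum_j \lambda_j h_j$ for equality constraints and $\mathcal{L} = f - \sum_j \lambda_j g_j$ for inequality constraints expressed as $g_j \ge 0$; the vector $s$ denotes a direction in design space.

For equality constraints:

$$
s^T \left[ \nabla^2 f - \sum_{j} \lambda_j \nabla^2 h_j \right] s > 0
\quad \text{for every } s \ne 0 \text{ with } \nabla h_j^T s = 0, \; j = 1, \dots, n_e
$$

For inequality constraints:

$$
s^T \left[ \nabla^2 f - \sum_{j} \lambda_j \nabla^2 g_j \right] s > 0
\quad \text{for every } s \ne 0 \text{ with } \nabla g_j^T s = 0 \text{ for all active } g_j \text{ with } \lambda_j > 0
$$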

A function is convex if

or
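For reference, the two standard characterizations of a convex function are given below: the chord inequality, and, for a twice-differentiable function, a positive semi-definite Hessian. The symbols $x_1$, $x_2$, $\alpha$ and $H$ are notation chosen here.

$$
f\left( \alpha x_2 + (1 - \alpha) x_1 \right) \le \alpha f(x_2) + (1 - \alpha) f(x_1), \quad 0 < \alpha < 1
$$

$$
s^T H(x) \, s \ge 0 \quad \text{for all } s \text{ and all } x, \qquad H_{ij} = \frac{\partial^2 f}{\partial x_i \, \partial x_j}
$$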

Convex problems

- A convex optimization problem has
  - a convex objective function
  - a convex feasible domain if
    - all inequality constraints are concave (or −g_j is convex)
    - all equality constraints are linear
  - only one optimum
  - Kuhn-Tucker conditions that are necessary and will also always be sufficient for the global minimum

Convex problems (contd.)

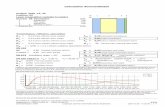

Calculating Lagrange multipliers

- Lagrange equation in matrix notation:

where N is

Assuming the number of active constraints is r, define a residual vector u (to be minimized)

Calculating Lagrange multipliers (contd.)

Obtain a least squares solution of u

By differentiating w.r.t. each λ

Or

And substituting into

We obtain where

Calculating Lagrange multipliers (contd.)

- P is a projection matrix that projects a vector into the subspace tangent to the constraints
- For the Kuhn-Tucker conditions to be satisfied, ∇f has to be orthogonal to this subspace
- Computing λ directly from this least-squares solution often involves ill-conditioned matrices and is inefficient
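The least-squares steps above can be sketched with NumPy. The sketch assumes that N is the n × r matrix whose columns are the gradients of the r active constraints, that the residual is u = Nλ − ∇f, that minimizing ‖u‖² gives λ = (NᵀN)⁻¹Nᵀ∇f, and that the projection matrix is P = I − N(NᵀN)⁻¹Nᵀ. These forms, and the small test problem, are assumptions made for illustration.

```python
import numpy as np

# Hypothetical problem: minimize x1^2 + x2^2 + x3^2
# subject to g1 = x1 + x2 + x3 - 3 >= 0 and g2 = x1 - x2 >= 0.
# Both constraints are active at the candidate point x = (1, 1, 1).
x = np.array([1.0, 1.0, 1.0])
grad_f = 2.0 * x

# Columns of N are the gradients of the active constraints (n = 3, r = 2).
N = np.array([[1.0,  1.0],
              [1.0, -1.0],
              [1.0,  0.0]])

# Normal equations: (N^T N) lam = N^T grad_f
NtN = N.T @ N
lam = np.linalg.solve(NtN, N.T @ grad_f)

# Projection onto the subspace tangent to the active constraints.
P = np.eye(3) - N @ np.linalg.solve(NtN, N.T)
projected_grad = P @ grad_f

print("lambda =", lam)
print("||P grad f|| =", np.linalg.norm(projected_grad))
print("cond(N) =", np.linalg.cond(N), " cond(N^T N) =", np.linalg.cond(NtN))
```

The projected gradient vanishes at this point, so the Kuhn-Tucker stationarity condition holds. The last line shows why this route can be ill-conditioned: in the 2-norm, the condition number of NᵀN is the square of that of N.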

Alternate method for calculating Lagrange multipliers

QR factorization of N gives a more efficient way of calculating λ

Because Q is orthogonal

║u║² is then minimized by choosing
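A minimal sketch of the QR route with NumPy, using the same hypothetical gradient data as a three-variable problem with two active constraints. Note the assumption about notation: NumPy returns the reduced factorization N = Q1 R1, with Q1 of size n × r and R1 upper triangular, which may differ from the form used on the slides. With that convention, ‖u‖² = ‖Nλ − ∇f‖² is minimized by solving R1 λ = Q1ᵀ∇f.

```python
import numpy as np

grad_f = np.array([2.0, 2.0, 2.0])
# Columns of N: gradients of the active constraints (hypothetical values).
N = np.array([[1.0,  1.0],
              [1.0, -1.0],
              [1.0,  0.0]])

# Reduced QR factorization: N = Q1 @ R1, Q1 has orthonormal columns,
# R1 is r x r upper triangular.
Q1, R1 = np.linalg.qr(N)

# Least-squares multipliers from the triangular system R1 lam = Q1^T grad_f.
# R1 is triangular, so back substitution would suffice;
# np.linalg.solve is used here only for brevity.
lam_qr = np.linalg.solve(R1, Q1.T @ grad_f)

# Cross-check against NumPy's general least-squares solver.
lam_ref = np.linalg.lstsq(N, grad_f, rcond=None)[0]
residual = N @ lam_qr - grad_f

print("lambda (QR)    =", lam_qr)
print("lambda (lstsq) =", lam_ref)
print("||u|| =", np.linalg.norm(residual))
```

Because NᵀN is never formed, the conditioning of the triangular solve is that of N itself rather than its square.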

Alternate method for calculating Lagrange multipliers

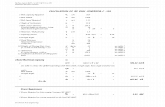

Sensitivity of optimum solution to problem parameters

Assuming the problem fitness and constraints depend on a parameter p

The solution is

and the corresponding fitness value

Sensitivity of optimum solution to problem parameters (contd.)

We would like to obtain derivatives of f* w.r.t. p

Equations that govern the optimum solution are

After manipulating governing equations we obtain
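A commonly quoted form of this result, assumed here for constraints written as g_j ≥ 0 with active set g_a and a Lagrangian of the form f − λᵀg_a, is df*/dp = ∂f/∂p − λᵀ ∂g_a/∂p. The sketch below checks it on a hypothetical problem with a closed-form solution: minimize x1² + x2² subject to g = x1 + x2 − p ≥ 0 with p > 0.

```python
def optimum(p):
    """Closed-form optimum of: min x1^2 + x2^2  s.t.  g = x1 + x2 - p >= 0  (p > 0)."""
    x = (p / 2.0, p / 2.0)
    f_star = x[0] ** 2 + x[1] ** 2
    lam = 2.0 * x[0]  # from grad f = lam * grad g, with grad g = (1, 1)
    return x, f_star, lam

p = 2.0
_, f_star, lam = optimum(p)

# Sensitivity from the multiplier: df*/dp = df/dp - lam * dg/dp
df_dp_explicit = 0.0   # f does not depend on p explicitly
dg_dp = -1.0           # g = x1 + x2 - p
sens = df_dp_explicit - lam * dg_dp

# Central finite difference on the re-optimized objective, for comparison.
dp = 1e-6
fd = (optimum(p + dp)[1] - optimum(p - dp)[1]) / (2.0 * dp)

print(f"multiplier-based: {sens:.6f}   finite difference: {fd:.6f}")
```

The multiplier-based value needs no re-optimization: once λ is known at the optimum, the derivative of f* follows from the explicit parameter derivatives of f and the active constraints alone.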
