Lecture 6: Load Balancing and Scheduling for Multiprocessor Systems

ΧΑΡΗΣ ΘΕΟΧΑΡΙΔΗΣ
ΗΜΥ 656 Advanced Computer Architecture, Spring Semester 2007

Load Balancing in General

Enormous and diverse literature on load balancing:
° Computer Science systems
  • operating systems
  • parallel computing
  • distributed computing
° Computer Science theory
° Operations research (IEOR)
° Application domains

A closely related problem is scheduling, which is to determine the order in which tasks run

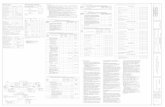

Understanding Different Load Balancing Problems

Load balancing problems differ in:
° Task costs
  • Do all tasks have equal costs?
  • If not, when are the costs known?
    - Before starting, when task created, or only when task ends
° Task dependencies
  • Can all tasks be run in any order (including parallel)?
  • If not, when are the dependencies known?
    - Before starting, when task created, or only when task ends
° Locality
  • Is it important for some tasks to be scheduled on the same processor (or nearby) to reduce communication cost?
  • When is the information about communication between tasks known?

Task cost spectrum

Task Dependency Spectrum

Task Locality Spectrum (Data Dependencies)

Spectrum of Solutions

One of the key questions is when certain information about the load balancing problem is known

Leads to a spectrum of solutions:

° Static scheduling.

All information is available to scheduling algorithm, which runs before any real computation starts. (offline algorithms)

° Semi-static scheduling.

Information may be known at program startup, or the beginning of each timestep, or at other well-defined points. Offline algorithms may be used even though the problem is dynamic.

° Dynamic scheduling.

Information is not known until mid-execution. (online algorithms)

Approaches

° Static load balancing
° Semi-static load balancing
° Self-scheduling
° Distributed task queues
° Diffusion-based load balancing
° DAG scheduling
° Mixed Parallelism

Note: these are not all-inclusive, but represent some of the problems for which good solutions exist.

Static Load Balancing

° Static load balancing is used when all information is available in advance
° Common cases:
  • dense matrix algorithms, such as LU factorization
    - done using blocked/cyclic layout
    - blocked for locality, cyclic for load balance
  • most computations on a regular mesh, e.g., FFT
    - done using cyclic+transpose+blocked layout for 1D
    - similar for higher dimensions, i.e., with transpose
  • sparse-matrix-vector multiplication
    - use graph partitioning
    - assumes graph does not change over time (or at least within a timestep during iterative solve)
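The blocked and cyclic layouts mentioned for dense matrix algorithms can be illustrated with a minimal sketch. The function names and the toy sizes (8 rows, 2 processors, LU step 4) are assumptions chosen for illustration and do not come from the lecture. The sketch counts how many still-active rows each processor owns partway through an LU factorization, which is where a blocked layout loses balance.

```python
from collections import Counter

def block_owner(i, n, p):
    """Blocked layout: each processor owns one contiguous range of ceil(n/p) rows."""
    block = -(-n // p)  # integer ceiling of n / p
    return i // block

def cyclic_owner(i, p):
    """Cyclic layout: rows are dealt out round-robin."""
    return i % p

def block_cyclic_owner(i, b, p):
    """Block-cyclic layout: blocks of b rows are dealt out round-robin."""
    return (i // b) % p

def active_rows_per_proc(owner, n, p, k):
    """Count rows k..n-1 (the rows still active at LU step k) per processor."""
    counts = Counter(owner(i) for i in range(k, n))
    return [counts[q] for q in range(p)]

n, p, k = 8, 2, 4  # hypothetical problem size, processor count, and LU step
blocked = active_rows_per_proc(lambda i: block_owner(i, n, p), n, p, k)
cyclic = active_rows_per_proc(lambda i: cyclic_owner(i, p), n, p, k)
print(blocked, cyclic)  # blocked leaves processor 0 with no active rows
```

With these toy sizes the blocked layout leaves one processor idle for the second half of the factorization, while the cyclic layout keeps the active rows evenly spread, at the price of worse locality.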

Semi-Static Load Balance

° If domain changes slowly over time and locality is important
  • use static algorithm
  • do some computation (usually one or more timesteps) allowing some load imbalance on later steps
  • recompute a new load balance using static algorithm
° Often used in:
  • particle simulations, particle-in-cell (PIC) methods
    - poor locality may be more of a problem than load imbalance as particles move from one grid partition to another
  • tree-structured computations (Barnes Hut, etc.)
  • grid computations with dynamically changing grid, which changes slowly

Self-Scheduling

° Self scheduling:
  • Keep a centralized pool of tasks that are available to run
  • When a processor completes its current task, look at the pool
  • If the computation of one task generates more, add them to the pool
° Originally used for:
  • Scheduling loops by compiler (really the runtime-system)
  • Original paper by Tang and Yew, ICPP 1986
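The centralized-pool idea can be sketched with threads standing in for processors. This is only an illustration under stated assumptions: the function name `self_schedule` and the convention that each task is a callable returning `(result, new_tasks)` are hypothetical, not taken from the lecture or from Tang and Yew.

```python
import queue
import threading

def self_schedule(initial_tasks, num_workers):
    """Run tasks from one shared pool; each task returns (result, new_tasks)."""
    pool = queue.Queue()              # the centralized task pool
    for task in initial_tasks:
        pool.put(task)
    results = []
    results_lock = threading.Lock()

    def worker():
        while True:
            try:
                task = pool.get_nowait()   # an idle worker looks at the pool
            except queue.Empty:
                return                     # pool is empty: this worker stops
            result, new_tasks = task()
            for t in new_tasks:            # generated work goes back to the pool
                pool.put(t)
            with results_lock:
                results.append(result)

    threads = [threading.Thread(target=worker) for _ in range(num_workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results

# Ten independent tasks of unknown cost, shared by four workers.
squares = sorted(self_schedule([lambda i=i: (i * i, []) for i in range(10)], 4))
print(squares)
```

In this simplified version a worker exits as soon as it sees an empty pool, so work generated later is picked up only by the workers still running. Every task is still executed, because the worker that adds tasks always returns to the pool afterwards.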

When is Self-Scheduling a Good Idea?

Useful when:

° A batch (or set) of tasks without dependencies
  • can also be used with dependencies, but most analysis has only been done for task sets without dependencies
° The cost of each task is unknown
° Locality is not important
° Using a shared memory multiprocessor, so a centralized pool of tasks is fine

Variations on Self-Scheduling

° Typically, don’t want to grab smallest unit of parallel work.
° Instead, choose a chunk of tasks of size K.
  • If K is large, access overhead for task queue is small
  • If K is small, we are likely to have even finish times (load balance)
° Four variations:
  • Use a fixed chunk size
  • Guided self-scheduling
  • Tapering
  • Weighted Factoring
  • Note: there are more

Variation 1: Fixed Chunk Size

° Kruskal and Weiss give a technique for computing the optimal chunk size
° Requires a lot of information about the problem characteristics
  • e.g., task costs, number
° Results in an off-line algorithm. Not very useful in practice.
  • For use in a compiler, for example, the compiler would have to estimate the cost of each task
  • All tasks must be known in advance

Variation 2: Guided Self-Scheduling

° Idea: use larger chunks at the beginning to avoid excessive overhead and smaller chunks near the end to even out the finish times.
° The chunk size $K_i$ at the $i$th access to the task pool is given by $K_i = \lceil R_i / p \rceil$
  • where $R_i$ is the total number of tasks remaining and
  • $p$ is the number of processors
° See Polychronopoulos, “Guided Self-Scheduling: A Practical Scheduling Scheme for Parallel Supercomputers,” IEEE Transactions on Computers, Dec. 1987.
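The guided self-scheduling rule, chunk size equal to the ceiling of remaining tasks over processors, can be traced with a short sketch. The function name `gss_chunks` and the example of 100 tasks on 4 processors are assumptions for illustration.

```python
def gss_chunks(num_tasks, p):
    """Return the successive chunk sizes K_i = ceil(R_i / p)."""
    chunks = []
    remaining = num_tasks          # R_i: tasks still in the pool
    while remaining > 0:
        k = -(-remaining // p)     # integer ceiling of remaining / p
        chunks.append(k)
        remaining -= k
    return chunks

chunks = gss_chunks(100, 4)
print(chunks)  # [25, 19, 14, 11, 8, 6, 5, 3, 3, 2, 1, 1, 1, 1]
```

The sequence starts with large chunks, which keeps the number of pool accesses low, and tapers down to single tasks so that the processors finish at nearly the same time.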

Variation 3: Tapering

° Idea: the chunk size $K_i$ is a function of not only the remaining work, but also the task cost variance
  • variance is estimated using history information
  • high variance => small chunk size should be used
  • low variance => larger chunks OK
° See S. Lucco, “Adaptive Parallel Programs,” PhD Thesis, UCB, CSD-95-864, 1994.
  • Gives analysis (based on workload distribution)
  • Also gives experimental results: tapering always works at least as well as GSS, although difference is often small

Variation 4: Weighted Factoring

° Idea: similar to self-scheduling, but divide task cost by computational power of requesting node
° Useful for heterogeneous systems
° Also useful for shared resource NOWs, e.g., built using all the machines in a building
  • as with Tapering, historical information is used to predict future speed
  • “speed” may depend on the other loads currently on a given processor
° See Hummel, Schmidt, Uma, and Wein, SPAA '96
  • includes experimental data and analysis

Distributed Task Queues

° The obvious extension of self-scheduling to distributed memory is:
  • a distributed task queue (or bag)
° When are these a good idea?
  • Distributed memory multiprocessors
  • Or, shared memory with significant synchronization overhead
  • Locality is not (very) important
  • Tasks that are either:
    - known in advance, e.g., a bag of independent ones
    - subject to dependencies, i.e., being computed on the fly
  • The cost of tasks is not known in advance

Diffusion-Based Load Balancing

° In the randomized schemes, the machine is treated as fully-connected.
° Diffusion-based load balancing takes topology into account
  • Locality properties better than prior work
  • Load balancing somewhat slower than randomized
  • Cost of tasks must be known at creation time
  • No dependencies between tasks

Diffusion-based load balancing

° The machine is modeled as a graph
° At each step, we compute the weight of tasks remaining on each processor
  • This is simply the number of tasks if they are unit-cost tasks
° Each processor compares its weight with its neighbors and performs some averaging
  • Markov chain analysis
° See Ghosh et al., SPAA '96 for a second order diffusive load balancing algorithm
  • takes into account amount of work sent last time
  • avoids some oscillation of first order schemes
° Note: locality is still not a major concern, although balancing with neighbors may be better than random
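The neighbor-averaging step can be sketched as a first order diffusion scheme. This is a minimal illustration, not the second order algorithm of Ghosh et al.; the function name, the diffusion coefficient `alpha = 0.25`, and the 4-node ring are assumptions chosen so the arithmetic is easy to follow.

```python
def diffusion_step(load, neighbors, alpha):
    """One first order step: each node moves alpha * (difference) along each edge."""
    new_load = list(load)
    for i, nbrs in neighbors.items():
        for j in nbrs:
            # Positive when neighbor j is heavier, so node i receives work.
            new_load[i] += alpha * (load[j] - load[i])
    return new_load

# Hypothetical machine: a ring of 4 processors, all work starts on node 0.
ring = {0: [1, 3], 1: [0, 2], 2: [1, 3], 3: [2, 0]}
load = [8.0, 0.0, 0.0, 0.0]

first = diffusion_step(load, ring, 0.25)
print(first)  # [4.0, 2.0, 0.0, 2.0]

for _ in range(50):
    load = diffusion_step(load, ring, 0.25)
print(load)   # approaches the average load of 2.0 on every node
```

Because the neighbor relation is symmetric, whatever one node gains over an edge the other loses, so the total load is conserved while the per-node load converges toward the average.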

DAG Scheduling

° For some problems, you have a directed acyclic graph (DAG) of tasks
  • nodes represent computation (may be weighted)
  • edges represent orderings and usually communication (may also be weighted)
  • not that common to have the DAG in advance
° Two application domains where DAGs are known
  • Digital Signal Processing computations
  • Sparse direct solvers (mainly Cholesky, since it doesn’t require pivoting). More on this in another lecture.
° The basic offline strategy: partition DAG to minimize communication and keep all processors busy
  • NP complete, so need approximations
  • Different than graph partitioning, which was for tasks with communication but no dependencies
  • See Gerasoulis and Yang, IEEE Transactions on Parallel and Distributed Systems, Jun '93.

Task Graphs

° Directed Acyclic Graph (DAG) $G = (V, E, \tau, c)$
  • $V$: set of nodes, representing computational tasks
  • $E$: set of edges, representing communication of data between tasks
  • $\tau(v)$: execution cost for node $v$
  • $c(i,j)$: communication cost for edge $(i,j)$
° Referred to as the delay model (macro dataflow model)

Small Task Graph Example

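[Figure: small task graph example. Eight nodes, each labeled with an execution cost of 1 or 2; edges labeled with communication costs of 5 or 10.]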

Task Scheduling Algorithms

° Multiprocessor Scheduling Problem
  • For each task, assign
    - Starting time
    - Processor assignment ($P_1, \ldots, P_N$)
  • Goal: minimize execution time, given
    - Precedence constraints
    - Execution cost
    - Communication cost
° Algorithms in literature
  • List Scheduling approaches (ERT, FLB)
  • Critical Path scheduling approaches (TDS, MCP)
° Categories: fixed number of processors, fixed c and/or τ, ...
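A list scheduling approach under the delay model can be sketched as follows. This is a generic greedy variant written for illustration; it is not ERT, FLB, or any other named algorithm, and the priority rule (lowest node id first) and the four-node example graph are assumptions. Each ready task goes to the processor where it can start earliest, paying the edge cost $c(i,j)$ only when the predecessor ran on a different processor.

```python
def list_schedule(tau, comm, num_procs):
    """Greedy list scheduling. tau: node -> cost, comm: (u, v) -> edge cost.
    Returns node -> (processor, start, finish)."""
    preds = {v: [] for v in tau}
    succs = {v: [] for v in tau}
    for (u, v) in comm:
        preds[v].append(u)
        succs[u].append(v)

    waiting = {v: len(preds[v]) for v in tau}    # unscheduled predecessors
    ready = sorted(v for v in tau if waiting[v] == 0)
    proc_free = [0] * num_procs                  # time each processor is free
    placed = {}

    while ready:
        v = ready.pop(0)                         # assumed priority: lowest id
        best = None
        for p in range(num_procs):
            data_ready = 0
            for u in preds[v]:
                up, _, ufinish = placed[u]
                delay = 0 if up == p else comm[(u, v)]  # no cost on same processor
                data_ready = max(data_ready, ufinish + delay)
            start = max(proc_free[p], data_ready)
            if best is None or start < best[1]:
                best = (p, start)
        p, start = best
        placed[v] = (p, start, start + tau[v])
        proc_free[p] = start + tau[v]
        for w in succs[v]:
            waiting[w] -= 1
            if waiting[w] == 0:
                ready.append(w)
        ready.sort()
    return placed

# Hypothetical diamond-shaped task graph.
tau = {1: 2, 2: 1, 3: 2, 4: 1}
comm = {(1, 2): 5, (1, 3): 5, (2, 4): 10, (3, 4): 10}
schedule = list_schedule(tau, comm, num_procs=2)
makespan = max(finish for _, _, finish in schedule.values())
print(schedule, makespan)
```

In this example the communication costs are large compared to the execution costs, so the greedy rule keeps every task on one processor and the second processor stays idle, which illustrates the tension between minimizing communication and keeping all processors busy.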

Which Strategy to Use

Multiprocessor Systems

• Continuous need for faster computers
  – shared memory model
  – message passing multiprocessor
  – wide area distributed system

Multiprocessors

Definition: A computer system in which two or more CPUs share full access to a common RAM

Multiprocessor Hardware (1)

Bus-based multiprocessors

Multiprocessor Hardware (2)

• UMA Multiprocessor using a crossbar switch

Multiprocessor Hardware (3)

• UMA multiprocessors using multistage switching networks can be built from 2x2 switches

(a) 2x2 switch (b) Message format

Multiprocessor Hardware (4)

• Omega Switching Network

Multiprocessor Hardware (5)

NUMA Multiprocessor Characteristics
1. Single address space visible to all CPUs
2. Access to remote memory via commands
   - LOAD
   - STORE
3. Access to remote memory slower than to local

Multiprocessor Hardware (6)

(a) 256-node directory based multiprocessor
(b) Fields of 32-bit memory address
(c) Directory at node 36

Multiprocessor OS Types (1)

Each CPU has its own operating system

Multiprocessor OS Types (2)

Master-Slave multiprocessors

Multiprocessor OS Types (3)

• Symmetric Multiprocessors
  – SMP multiprocessor model

Multiprocessor Synchronization (1)

TSL instruction can fail if bus already locked

Multiprocessor Synchronization (2)

Multiple locks used to avoid cache thrashing

Multiprocessor Synchronization (3)

Spinning versus Switching
• In some cases CPU must wait
  – waits to acquire ready list
• In other cases a choice exists
  – spinning wastes CPU cycles
  – switching uses up CPU cycles also
  – possible to make separate decision each time locked mutex encountered

Assigning Processes to Processors

• How are processes/threads assigned to processors?
• Static assignment.
  – Advantages
    • Dedicated short-term queue for each processor.
    • Less overhead in scheduling.
    • Allows for group or gang scheduling.
    • Process remains with processor from activation until completion.
  – Disadvantages
    • One or more processors can be idle.
    • One or more processors could be backlogged.
    • Difficult to load balance.
    • Context transfers costly.

Scheduling

Assigning Processes to Processors

• Who handles the assignment?
  – Master/Slave
    • Single processor handles O.S. functions.
    • One processor responsible for scheduling jobs.
    • Tends to become a bottleneck.
    • Failure of master brings system down.
  – Peer
    • O.S. can run on any processor.
    • More complicated operating system.
    • Generally use simple schemes.
      – Overhead is a greater problem
      – Threads add additional concerns
        • CPU utilization is not always the primary factor.


Process Scheduling
• Single queue for all processes.
• Multiple queues are used for priorities.
• All queues feed to the common pool of processors.
• The specific scheduling discipline is less important with more than one processor.
  – Simple FCFS discipline or FCFS within a static priority scheme may suffice for a multiple-processor system.


Thread Scheduling
• A thread executes separately from the rest of the process.
• An application can be a set of threads that cooperate and execute concurrently in the same address space.
• Threads running on separate processors yield a dramatic gain in performance.
• However, applications requiring significant interaction among threads may see a significant performance impact with multiprocessing.


Multiprocessor Thread Scheduling
• Load sharing
  – processes are not assigned to a particular processor
• Gang scheduling
  – a set of related threads is scheduled to run on a set of processors at the same time
• Dedicated processor assignment
  – threads are assigned to a specific processor
• Dynamic scheduling
  – number of threads can be altered during course of execution


Multiprocessor Scheduling (1)

• Timesharing
  – note use of single data structure for scheduling

Load Sharing
• Load is distributed evenly across the processors.
• Select threads from a global queue.
• Avoids idle processors.
• No centralized scheduler required.
• Uses global queues.
• Widely used.
  – FCFS
  – Smallest number of threads first
  – Preemptive smallest number of threads first


Multiprocessor Scheduling (2)

• Space sharing
  – multiple threads at same time across multiple CPUs

Disadvantages of Load Sharing
• Central queue needs mutual exclusion.
  – may be a bottleneck when more than one processor looks for work at the same time
• Preempted threads are unlikely to resume execution on the same processor.
  – cache use is less efficient
• If all threads are in the global queue, all threads of a program will not gain access to the processors at the same time.


Multiprocessor Scheduling (3)

• Problem with communication between two threads
  – both belong to process A
  – both running out of phase

Multiprocessor Scheduling (4)

• Solution: Gang Scheduling
  1. Groups of related threads scheduled as a unit (a gang)
  2. All members of gang run simultaneously
     • on different timeshared CPUs
  3. All gang members start and end time slices together

Gang Scheduling
• Schedule related threads on processors to run at the same time.
• Useful for applications where performance severely degrades when any part of the application is not running.
• Threads often need to synchronize with each other.
• Interacting threads are more likely to be running and ready to interact.
• Less overhead since we schedule multiple processors at once.
• Have to allocate processors.


Multiprocessor Scheduling (5)

Gang Scheduling

Dedicated Processor Assignment

• When application is scheduled, its threads are assigned to a processor.
• Advantage:
  – Avoids process switching
• Disadvantage:
  – Some processors may be idle
• Works best when the number of threads equals the number of processors.


Dynamic Scheduling
• Number of threads in a process is altered dynamically by the application.
• Operating system adjusts the load to improve use.
  – assign idle processors
  – new arrivals may be assigned to a processor that is used by a job currently using more than one processor
  – hold request until processor is available
  – new arrivals will be given a processor before existing running applications


Issues in MP Scheduling
• Starvation
  – Number of active parallel threads < number of allocated processors
• Overhead
  – CPU time used to transfer and start various portions of the application
• Contention
  – Multiple threads attempt to use same shared resource
• Latency
  – Delay in communication between processors and I/O devices

How to allocate processors
• Allocate proportional to average parallelism
• Other factors:
  – System load
  – Variable parallelism
  – Min/Max parallelism
• Acquire/relinquish processors based on current program needs

Cache Affinity
• While a program runs, data needed is placed in local cache
• When job is rescheduled, it will likely access some of the same data
• Scheduling jobs where they have “affinity” improves performance by reducing cache penalties

Cache Affinity (cont)
• Tradeoff between processor reallocation and cost of reallocation
  – Utilization versus cache behavior
• Scheduling policies:
  – Equipartition: constant number of processors allocated evenly to all jobs. Low overhead.
  – Dynamic: constantly reallocates jobs to maximize utilization. High utilization.

Cache Affinity (cont)

• Vaswani and Zahorjan, 1991
  – When a processor becomes available, allocate it to runnable process that was last run on processor, or higher priority job
  – If a job requests additional processors, allocate critical tasks on processor with highest affinity
  – If an allocated processor becomes idle, hold it for a small amount of time in case task with affinity comes along
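The first rule, giving a freed processor to the runnable process that last ran on it unless a higher priority job is waiting, can be sketched as below. This is one possible reading of the slide, not the authors' implementation; the function name `pick_next`, the dictionaries `last_cpu` and `priority`, and the convention that a larger number means higher priority are all assumptions.

```python
def pick_next(cpu, runnable, last_cpu, priority):
    """Choose a process for a newly available CPU.
    runnable: list of process ids; last_cpu: pid -> CPU it last ran on;
    priority: pid -> int, larger means more urgent (assumed convention)."""
    if not runnable:
        return None
    best_any = max(runnable, key=lambda pid: priority[pid])
    affine = [pid for pid in runnable if last_cpu.get(pid) == cpu]
    if affine:
        best_affine = max(affine, key=lambda pid: priority[pid])
        # Keep the affinity choice unless some other job has higher priority.
        if priority[best_affine] >= priority[best_any]:
            return best_affine
    return best_any

runnable = [1, 2, 3]
last_cpu = {1: 0, 2: 1, 3: 1}
equal = {1: 1, 2: 1, 3: 1}
print(pick_next(0, runnable, last_cpu, equal))                   # 1: last ran on CPU 0
print(pick_next(0, runnable, last_cpu, {1: 1, 2: 1, 3: 5}))      # 3: higher priority wins
```

The sketch omits the other two rules (placing critical tasks by affinity and briefly holding an idle processor), which would need a notion of time and of task criticality.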

Vaswani and Zahorjan, 1991
• Results showed that utilization was dominant effect on performance, not cache affinity
  – But their algorithm did not degrade performance
• Predicted that as processor speeds increase, significance of cache affinity will also increase
• Later studies validated their predictions

How to Measure Utilization?
• IPC not necessarily best predictor:
  – IPC can have high variations throughout process
  – High-IPC threads may unfairly take system resources from low-IPC threads
• Other predictors: low # conflicts, high cache hit rate, diverse instruction mix
• Balance: schedule with lowest deviation in IPC between coschedules is considered best

What About Priorities?
• Scheduler estimates the “natural” IPC of job
• If a high-priority job is not meeting the desired IPC, it will be exclusively scheduled on CPU
• Provides a truer implementation of priority:
  – Normal schedulers only guarantee proportional resource sharing and assume no interaction between jobs

Another Priority Algorithm:
• SMT hardware fetches instructions to issue from queue
• Scheduler can bias fetching algorithm to give preference to high-priority threads
• Hardware already exists, minimal modifications

Multiprocessor Scheduling
• Will consider only shared memory multiprocessor
• Salient features:
  – One or more caches: cache affinity is important
  – Semaphores/locks typically implemented as spin-locks: preemption during critical sections

Multiprocessor Scheduling
• Central queue: queue can be a bottleneck
• Distributed queue: load balancing between queues

Scheduling
• Common mechanisms combine central queue with per processor queue (SGI IRIX)
• Exploit cache affinity: try to schedule on the same processor that a process/thread executed last
• Context switch overhead
  – Quantum sizes larger on multiprocessors than uniprocessors

Parallel Applications on SMPs
• Effect of spin-locks: what happens if preemption occurs in the middle of a critical section?
  – Preempt entire application (co-scheduling)
  – Raise priority so preemption does not occur (smart scheduling)
  – Both of the above
• Provide applications with more control over their scheduling
  – Users should not have to check if it is safe to make certain system calls
  – If one thread blocks, others must be able to run

Distributed Scheduling: Motivation

• Distributed system with N workstations
  – Model each w/s as identical, independent M/M/1 systems
  – Utilization u, P(system idle) = 1-u
• What is the probability that at least one system is idle and one job is waiting?
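The question can be answered with a short calculation, sketched here under the assumption that "a job is waiting" means some workstation holds at least two jobs (one in service, one queued). For an M/M/1 system, $P(k \text{ jobs}) = (1-u)u^k$, so a workstation is never idle with probability $u$, has no waiting job with probability $1-u^2$, and holds exactly one job with probability $u(1-u)$. Inclusion-exclusion over N independent workstations then gives $P = 1 - u^N - (1-u^2)^N + (u(1-u))^N$. The function name is hypothetical.

```python
def p_idle_and_waiting(n, u):
    """P(at least one workstation idle AND at least one job waiting somewhere)
    for n independent M/M/1 workstations with utilization u."""
    p_no_idle = u ** n                    # every workstation is busy
    p_no_waiting = (1 - u * u) ** n       # every workstation holds 0 or 1 jobs
    p_neither = (u * (1 - u)) ** n        # every workstation holds exactly 1 job
    return 1 - p_no_idle - p_no_waiting + p_neither

for u in (0.05, 0.5, 0.99):
    print(u, round(p_idle_and_waiting(20, u), 4))
```

For 20 workstations the probability is small at very low utilization, close to 1 at moderate utilization, and drops again as utilization approaches 1.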

Implications
• Probability high for moderate system utilization
  – Potential for performance improvement via load distribution
• High utilization => little benefit
• Low utilization => rarely a job waiting
• Distributed scheduling (aka load balancing) potentially useful
• What is the performance metric?
  – Mean response time
• What is the measure of load?
  – Must be easy to measure
  – Must reflect performance improvement

Design Issues

• Measure of load
  – Queue lengths at CPU, CPU utilization
• Types of policies
  – Static: decisions hardwired into system
  – Dynamic: uses load information
  – Adaptive: policy varies according to load
• Preemptive versus non-preemptive
• Centralized versus decentralized
• Stability: $\lambda > \mu$ => instability, $\lambda_1 + \lambda_2 < \mu_1 + \mu_2$ => load balance
  – Job floats around and load oscillates

Components
• Transfer policy: when to transfer a process?
  – Threshold-based policies are common and easy
• Selection policy: which process to transfer?
  – Prefer new processes
  – Transfer cost should be small compared to execution cost
    • Select processes with long execution times
• Location policy: where to transfer the process?
  – Polling, random, nearest neighbor
• Information policy: when and from where?
  – Demand driven [only if sender/receiver], time-driven [periodic], state-change-driven [send update if load changes]

Sender-initiated Policy
• Transfer policy
• Selection policy: newly arrived process
• Location policy: three variations
  – Random: may generate lots of transfers => limit max transfers
  – Threshold: probe n nodes sequentially
    • Transfer to first node below threshold, if none, keep job
  – Shortest: poll Np nodes in parallel
    • Choose least loaded node below T
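The threshold and shortest location policies can be compared with a small sketch. The function names, the `loads` dictionary, and the fixed probe order are assumptions made so the example is deterministic; a real system would pick probe targets at random and query them over the network.

```python
def threshold_location(loads, candidates, T, probe_limit):
    """Probe nodes one at a time; return the first with load below T, else None."""
    for node in candidates[:probe_limit]:
        if loads[node] < T:
            return node
    return None            # no suitable node found: keep the job locally

def shortest_location(loads, candidates, T, num_polled):
    """Poll a set of nodes at once; return the least loaded one below T, else None."""
    polled = candidates[:num_polled]
    below = [node for node in polled if loads[node] < T]
    return min(below, key=lambda node: loads[node]) if below else None

loads = {0: 5, 1: 4, 2: 1, 3: 0}     # hypothetical queue lengths; node 0 is the sender
others = [1, 2, 3]
print(threshold_location(loads, others, T=3, probe_limit=3))  # 2: first node below T
print(shortest_location(loads, others, T=3, num_polled=3))    # 3: least loaded below T
```

Threshold stops at the first acceptable node and so sends fewer probes, while Shortest pays for polling every candidate in exchange for a better placement.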

Receiver-initiated Policy
• Transfer policy: If departing process causes load < T, find a process from elsewhere
• Selection policy: newly arrived or partially executed process
• Location policy:
  – Threshold: probe up to Np other nodes sequentially
    • Transfer from first one above threshold, if none, do nothing
  – Shortest: poll n nodes in parallel, choose node with heaviest load above T

Symmetric Policies
• Nodes act as both senders and receivers: combine previous two policies without change
  – Use average load as threshold
• Improved symmetric policy: exploit polling information
  – Two thresholds: LT, UT, LT <= UT
  – Maintain sender, receiver and OK nodes using polling info
  – Sender: poll first node on receiver list …
  – Receiver: poll first node on sender list …
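The two-threshold classification behind the improved symmetric policy can be sketched as follows. The slide does not spell out the rule, so this assumes the usual convention: a node above UT is a sender, a node below LT is a receiver, and anything in between is OK. The function names and sample loads are hypothetical.

```python
def classify(load, LT, UT):
    """Classify a node by its load using two thresholds with LT <= UT (assumed rule)."""
    if load > UT:
        return "sender"      # overloaded: wants to give work away
    if load < LT:
        return "receiver"    # underloaded: wants to take work
    return "OK"

def build_lists(polled_loads, LT, UT):
    """Group polled nodes into sender, receiver, and OK lists."""
    lists = {"sender": [], "receiver": [], "OK": []}
    for node, load in polled_loads.items():
        lists[classify(load, LT, UT)].append(node)
    return lists

lists = build_lists({"a": 7, "b": 0, "c": 3, "d": 1}, LT=2, UT=4)
print(lists)  # {'sender': ['a'], 'receiver': ['b', 'd'], 'OK': ['c']}
```

A sender would then poll the first node on the receiver list, and a receiver the first node on the sender list, instead of probing blindly.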

Code and Process Migration

• Motivation
• How does migration occur?
• Resource migration
• Agent-based system
• Details of process migration

Motivation
• Key reasons: performance and flexibility
• Process migration (aka strong mobility)
  – Improved system-wide performance: better utilization of system-wide resources
  – Examples: Condor, DQS
• Code migration (aka weak mobility)
  – Shipment of server code to client: filling forms (reduce communication, no need to pre-link stubs with client)
  – Ship parts of client application to server instead of data from server to client (e.g., databases)
  – Improve parallelism: agent-based web searches

Motivation

• Flexibility
  – Dynamic configuration of distributed system
  – Clients don’t need preinstalled software: download on demand

Migration models
• Process = code seg + resource seg + execution seg
• Weak versus strong mobility
  – Weak => transferred program starts from initial state
• Sender-initiated versus receiver-initiated
• Sender-initiated (code is with sender)
  – Client sending a query to database server
  – Client should be pre-registered
• Receiver-initiated
  – Java applets
  – Receiver can be anonymous

Do Resources Migrate?
• Depends on resource to process binding
  – By identifier: specific web site, ftp server
  – By value: Java libraries
  – By type: printers, local devices
• Depends on type of “attachments”
  – Unattached to any node: data files
  – Fastened resources (can be moved only at high cost)
    • Database, web sites
  – Fixed resources
    • Local devices, communication end points

Resource Migration Actions

• Actions to be taken with respect to the references to local resources when migrating code to another machine.
• GR: establish global system-wide reference
• MV: move the resources
• CP: copy the resource
• RB: rebind process to locally available resource

Rows: process-to-resource binding. Columns: resource-to-machine binding.

| | Unattached | Fastened | Fixed |
|---|---|---|---|
| By identifier | MV (or GR) | GR (or MV) | GR |
| By value | CP (or MV, GR) | GR (or CP) | GR |
| By type | RB (or GR, CP) | RB (or GR, CP) | RB (or GR) |
