Probability Theory - ETH Zürich

Probability Theory

"A random variable is neither random nor variable."

Gian-Carlo Rota, M.I.T.

Florian Herzog

2013

Probability space

A probability space W is a triple W = {Ω, F, P}:

• Ω is its sample space

• F its σ-algebra of events

• P its probability measure

Remarks: (1) The sample space Ω is the set of all possible samples or elementary events ω: Ω = {ω | ω ∈ Ω}.

(2) The σ-algebra F is the set of all of the considered events A, i.e., subsets of Ω: F = {A | A ⊆ Ω, A ∈ F}.

(3) The probability measure P assigns a probability P(A) to every event A ∈ F: P : F → [0, 1].

Stochastic Systems, 2013 2

Sample space

The sample space Ω is sometimes called the universe of all samples or possible

outcomes ω.

Example 1. Sample space

• Toss of a coin (with head and tail): Ω = {H, T}.

• Two tosses of a coin: Ω = {HH, HT, TH, TT}.

• A cubic die: Ω = {ω1, ω2, ω3, ω4, ω5, ω6}.

• The positive integers: Ω = {1, 2, 3, . . .}.

• The reals: Ω = {ω | ω ∈ R}.

Note that the ωs are a mathematical construct and have per se no real or

scientific meaning. The ωs in the die example refer to the numbers of dots

observed when the die is thrown.

Event

An event A is a subset of Ω. If the outcome ω of the experiment is in the subset A, then the event A is said to have occurred. The set of all subsets of the sample space is denoted by 2^Ω.

Example 2. Events

• Head in the coin toss: A = {H}.

• Odd number in the roll of a die: A = {ω1, ω3, ω5}.

• An integer smaller than 5: A = {1, 2, 3, 4}, where Ω = {1, 2, 3, . . .}.

• A real number between 0 and 1: A = [0, 1], where Ω = {ω | ω ∈ R}.

We denote the complementary event of A by A^c = Ω\A. When it is possible

to determine whether an event A has occurred or not, we must also be able to

determine whether Ac has occurred or not.

Probability Measure I

Definition 1. Probability measure

A probability measure P on the countable sample space Ω is a set function

P : F → [0, 1],

satisfying the following conditions

• P(Ω) = 1.

• P({ωi}) = pi.

• If A1, A2, A3, ... ∈ F are mutually disjoint, then

$$P\left(\bigcup_{i=1}^{\infty} A_i\right) = \sum_{i=1}^{\infty} P(A_i).$$

Probability

The story so far:

• Sample space: Ω = {ω1, . . . , ωn}, finite!

• Events: F = 2^Ω: all subsets of Ω

• Probability: P({ωi}) = pi ⇒ $P(A) = \sum_{\omega_i \in A} p_i$ for every A ⊆ Ω

Probability axioms of Kolmogorov (1933) for elementary probability:

• P(Ω) = 1.

• If A ⊆ Ω then P(A) ≥ 0.

• If A1, A2, A3, ... ⊆ Ω are mutually disjoint, then

$$P\left(\bigcup_{i=1}^{\infty} A_i\right) = \sum_{i=1}^{\infty} P(A_i).$$
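The finite case can be checked directly. The following is a minimal sketch, assuming a fair die with pi = 1/6 as a hypothetical example (the names `p` and `prob` are illustrative only); it computes P(A) as the sum of the elementary probabilities in A.

```python
from fractions import Fraction

# Hypothetical example: a fair die, each outcome has elementary probability 1/6.
p = {w: Fraction(1, 6) for w in range(1, 7)}

def prob(event):
    """P(A) = sum of p_i over all outcomes w_i in the event A."""
    return sum((p[w] for w in event), Fraction(0))

omega = set(p)      # the whole sample space
odd = {1, 3, 5}     # event "odd number"
two = {2}           # event disjoint from `odd`

print(prob(omega))                  # total probability
print(prob(odd))                    # probability of an odd number
print(prob(odd | two), prob(odd) + prob(two))  # additivity for disjoint events
```

Exact fractions are used so that the axioms hold without rounding error.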

Uncountable sample spaces

The most important uncountable sample spaces for engineering are R and R^n.

Consider the example Ω = [0, 1], where every ω is equally "likely".

• Obviously, P({ω}) = 0.

• Intuitively, P([0, a]) = a, basic concept: length!

Question: Does every subset of [0, 1] have a determinable length?

Answer: No! (e.g. Vitali sets, Banach-Tarski paradox)

Question: Is this of importance in practice?

Answer: No!

Question: Does it matter for the underlying theory?

Answer: A lot!

Fundamental mathematical tools

Not every subset of [0, 1] has a determinable length ⇒ collect the ones with a determinable length in F. Such a mathematical construct, which has additional, desirable properties, is called a σ-algebra.

Definition 2. σ-algebra

A collection F of subsets of Ω is called a σ-algebra on Ω if

• Ω ∈ F and ∅ ∈ F (∅ denotes the empty set)

• If A ∈ F then Ω\A = A^c ∈ F: the complementary subset of A is also in F

• For all Ai ∈ F: $\bigcup_{i=1}^{\infty} A_i \in \mathcal{F}$

The intuition behind it: collect all events in the σ-algebra F and make sure that performing countably many elementary set operations (∪, ∩, ^c) on elements of F again yields an element of F (closure).

The pair (Ω, F) is called a measurable space.

Example of σ-algebra

Example 3. σ-algebra of two coin-tosses

• Ω = {HH, HT, TH, TT} = {ω1, ω2, ω3, ω4}

• Fmin = {∅, Ω} = {∅, {ω1, ω2, ω3, ω4}}.

• F1 = {∅, {ω1, ω2}, {ω3, ω4}, {ω1, ω2, ω3, ω4}}.

• Fmax = {∅, {ω1}, {ω2}, {ω3}, {ω4}, {ω1, ω2}, {ω1, ω3}, {ω1, ω4}, {ω2, ω3}, {ω2, ω4}, {ω3, ω4}, {ω1, ω2, ω3}, {ω1, ω2, ω4}, {ω1, ω3, ω4}, {ω2, ω3, ω4}, Ω}.
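For a finite Ω the defining conditions of a σ-algebra can be verified mechanically. The sketch below is an illustration only (the function name `is_sigma_algebra` and the counterexample `not_closed` are assumptions, not part of the slides); it checks the two-coin-toss families with ω1 = HH, ω2 = HT, ω3 = TH, ω4 = TT.

```python
from itertools import combinations

def is_sigma_algebra(omega, family):
    """Check the sigma-algebra conditions for a family of subsets of a finite set."""
    omega = frozenset(omega)
    fam = {frozenset(a) for a in family}
    # The sample space and the empty set must belong to the family.
    if omega not in fam or frozenset() not in fam:
        return False
    # Closed under complement.
    if any(omega - a not in fam for a in fam):
        return False
    # On a finite sample space, closure under pairwise unions
    # already gives closure under countable unions.
    return all(a | b in fam for a, b in combinations(fam, 2))

omega = {"HH", "HT", "TH", "TT"}
f_min = [set(), omega]
f_1 = [set(), {"HH", "HT"}, {"TH", "TT"}, omega]
not_closed = [set(), {"HH"}, omega]   # complement of {HH} is missing

print(is_sigma_algebra(omega, f_min))
print(is_sigma_algebra(omega, f_1))
print(is_sigma_algebra(omega, not_closed))
```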

Generated σ-algebras

The concept of generated σ-algebras is important in probability theory.

Definition 3. σ(C): σ-algebra generated by a class C of subsets

Let C be a class of subsets of Ω. The σ-algebra generated by C, denoted by σ(C), is the smallest σ-algebra F which includes all elements of C, i.e., C ⊆ F.

Identify the different events we can measure in an experiment (denoted by A); we then just work with the σ-algebra generated by A and avoid all the measure-theoretic technicalities.
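On a finite sample space, σ(C) can be computed by repeatedly adding complements and unions until nothing new appears. This is a minimal sketch under that finiteness assumption; the function name `generated_sigma_algebra` is hypothetical, and the approach does not carry over to uncountable Ω.

```python
def generated_sigma_algebra(omega, generators):
    """Smallest family containing the generators that is closed under
    complement and union (finite sample space only)."""
    omega = frozenset(omega)
    family = {frozenset(), omega} | {frozenset(g) for g in generators}
    changed = True
    while changed:
        changed = False
        for a in list(family):
            for b in list(family):
                for new in (omega - a, a | b):
                    if new not in family:
                        family.add(new)
                        changed = True
    return family

die = {1, 2, 3, 4, 5, 6}
parity = generated_sigma_algebra(die, [{1, 3, 5}])
print(sorted(sorted(a) for a in parity))

# Two generators {1} and {2} produce the atoms {1}, {2}, {3, 4, 5, 6}.
print(len(generated_sigma_algebra(die, [{1}, {2}])))
```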

Borel σ-algebra

The Borel σ-algebra includes all subsets of R which are of interest in practical

applications (scientific or engineering).

Definition 4. Borel σ-algebra B(R)

The Borel σ-algebra B(R) is the smallest σ-algebra containing all open

intervals in R. The sets in B(R) are called Borel sets. The extension to the

multi-dimensional case, B(Rn), is straightforward.

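• $(-\infty, a)$, $(b, \infty)$, $(-\infty, a) \cup (b, \infty)$

• $[a, b] = \left((-\infty, a) \cup (b, \infty)\right)^c$,

• $(-\infty, a] = \bigcup_{n=1}^{\infty} [a - n, a]$ and $[b, \infty) = \bigcup_{n=1}^{\infty} [b, b + n]$,

• $(a, b] = (-\infty, b] \cap (a, \infty)$,

• $\{a\} = \bigcap_{n=1}^{\infty} \left(a - \frac{1}{n}, a + \frac{1}{n}\right)$,

• $\{a_1, \cdots, a_n\} = \bigcup_{k=1}^{n} \{a_k\}$.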

Measure

Definition 5. Measure

Let F be the σ-algebra of Ω and therefore (Ω,F) be a measurable space.

The map

µ : F → [0,∞]

is called a measure on (Ω, F) if µ is countably additive. The measure µ is countably additive (or σ-additive) if µ(∅) = 0 and for every sequence of disjoint sets $(F_i : i \in \mathbb{N})$ in $\mathcal{F}$ with $F = \bigcup_{i \in \mathbb{N}} F_i$ we have

$$\mu(F) = \sum_{i \in \mathbb{N}} \mu(F_i).$$

If µ is countably additive, it is also additive, meaning for every $F, G \in \mathcal{F}$ we have

$$\mu(F \cup G) = \mu(F) + \mu(G) \quad \text{whenever } F \cap G = \emptyset.$$

The triple (Ω, F, µ) is called a measure space.

Lebesgue Measure

The measure of length on the straight line is known as the Lebesgue measure.

Definition 6. Lebesgue measure on B(R)

The Lebesgue measure on B(R), denoted by λ, is defined as the measure on

(R,B(R)) which assigns the measure of each interval to be its length.

Examples:

• Lebesgue measure of one point: λ({a}) = 0.

• Lebesgue measure of countably many points: $\lambda(A) = \sum_{i=1}^{\infty} \lambda(\{a_i\}) = 0$.

• The Lebesgue measure of a set containing uncountably many points can be:

– zero (e.g., the Cantor set)

– positive and finite (e.g., the interval [0, 1])

– infinite (e.g., R)

Probability Measure

Definition 7. Probability measure

A probability measure P on the sample space Ω with σ-algebra F is a set

function

P : F → [0, 1],

satisfying the following conditions

• P(Ω) = 1.

• If A ∈ F then P (A) ≥ 0.

• If A1, A2, A3, ... ∈ F are mutually disjoint, then

$$P\left(\bigcup_{i=1}^{\infty} A_i\right) = \sum_{i=1}^{\infty} P(A_i).$$

The triple (Ω,F, P ) is called a probability space.

F-measurable functions

Definition 8. F -measurable function

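The function f : Ω → R defined on (Ω, F, P) is called F-measurable if

$$f^{-1}(B) = \{\omega \in \Omega : f(\omega) \in B\} \in \mathcal{F} \quad \text{for all } B \in \mathcal{B}(\mathbb{R}),$$

i.e., the inverse f^{-1} maps all of the Borel sets B ⊂ R to F. Sometimes it is easier to work with the following equivalent condition:

$$y \in \mathbb{R} \Rightarrow \{\omega \in \Omega : f(\omega) \le y\} \in \mathcal{F}$$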

This means that once we know the (random) value X(ω) we know which of

the events in F have happened.

• F = {∅, Ω}: only constant functions are measurable

• F = 2^Ω: all functions are measurable

F-measurable functions

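Ω: sample space, Ai: event, F = σ(A1, . . . , An): σ-algebra of events

[Figure: the random variable X(ω) : Ω → R maps a sample ωj ∈ Ω to the value X(ω) ∈ R. The sample space Ω is divided into the events A1, A2, A3, A4, and the preimage X^{-1} : B → F maps a Borel set such as (−∞, a] ∈ B back into F.]

X: random variable, B: Borel σ-algebra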

F-measurable functions - Example

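Roll of a die: Ω = {ω1, ω2, ω3, ω4, ω5, ω6}

We only know whether an even or an odd number has shown up:

F = {∅, {ω1, ω3, ω5}, {ω2, ω4, ω6}, Ω} = σ({ω1, ω3, ω5})

Consider the following random variable:

$$f(\omega) = \begin{cases} 1, & \text{if } \omega \in \{\omega_1, \omega_2, \omega_3\}; \\ -1, & \text{if } \omega \in \{\omega_4, \omega_5, \omega_6\}. \end{cases}$$

Check measurability with the condition

$$y \in \mathbb{R} \Rightarrow \{\omega \in \Omega : f(\omega) \le y\} \in \mathcal{F}$$

{ω ∈ Ω : f(ω) ≤ 0} = {ω4, ω5, ω6} ∉ F ⇒ f is not F-measurable.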
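The same check can be run by machine. The sketch below is illustrative (the names `is_measurable`, `f` and `g` are assumptions); it encodes ωi as the integer i and tests the sets {ω : f(ω) ≤ y} only for the values y that f actually attains, which is enough on a finite Ω when the given family is a σ-algebra.

```python
def is_measurable(f, omega, sigma_algebra):
    """Check {w : f(w) <= y} in F for every attained value y.
    Sufficient on a finite sample space when sigma_algebra is a sigma-algebra."""
    events = {frozenset(a) for a in sigma_algebra}
    values = {f(w) for w in omega}
    return all(
        frozenset(w for w in omega if f(w) <= y) in events
        for y in values
    )

omega = {1, 2, 3, 4, 5, 6}
parity = [set(), {1, 3, 5}, {2, 4, 6}, omega]   # we only observe odd/even

f = lambda w: 1 if w <= 3 else -1    # the function from the example
g = lambda w: 1 if w % 2 else -1     # depends only on parity

print(is_measurable(f, omega, parity))   # f needs more information than parity
print(is_measurable(g, omega, parity))
```

The function g is measurable because its value is determined by the observable event "odd", whereas f would require knowing whether the outcome is at most 3.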

Lebesgue integral I

Definition 9. Lebesgue Integral

(Ω, F) a measurable space, µ : F → [0, ∞] a measure, f : Ω → R an F-measurable function.

• If f is a simple function, i.e., f(x) = ci for all x ∈ Ai, ci ∈ R:

$$\int_\Omega f \, d\mu = \sum_{i=1}^{n} c_i \, \mu(A_i).$$

• If f is nonnegative, we can always construct a sequence of simple functions fn with fn(x) ≤ fn+1(x) which converges to f: $\lim_{n \to \infty} f_n(x) = f(x)$. With this sequence, the Lebesgue integral is defined by

$$\int_\Omega f \, d\mu = \lim_{n \to \infty} \int_\Omega f_n \, d\mu.$$
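The construction can be illustrated numerically. The sketch below assumes the hypothetical example f(x) = x² on [0, 1] with Lebesgue measure and the dyadic simple functions fn = ⌊2ⁿ f⌋ / 2ⁿ, one common choice of increasing sequence; the level sets Ak = {x : k/2ⁿ ≤ x² < (k+1)/2ⁿ} are intervals, so their lengths are known in closed form.

```python
import math

def simple_integral(n):
    """Integral of the simple function f_n = floor(2^n f) / 2^n
    for f(x) = x^2 on [0, 1] with respect to Lebesgue measure."""
    m = 2 ** n
    total = 0.0
    for k in range(m):
        c_k = k / m   # value of f_n on the level set A_k
        # A_k = [sqrt(k/m), sqrt((k+1)/m)), so its Lebesgue measure is a length.
        length = math.sqrt((k + 1) / m) - math.sqrt(k / m)
        total += c_k * length
    return total

approximations = [simple_integral(n) for n in range(1, 13)]
for n, value in enumerate(approximations, start=1):
    print(n, value)   # increases towards the integral of x^2 over [0, 1]
```

Note that the partition is taken over the range of f, not over the x-axis, which is the characteristic difference from Riemann sums.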

Lebesgue integral II

Definition 10. Lebesgue Integral

(Ω, F) a measurable space, µ : F → [0, ∞] a measure, f : Ω → R an F-measurable function.

• If f is an arbitrary measurable function, we have f = f⁺ − f⁻ with

$$f^+(x) = \max(f(x), 0) \quad \text{and} \quad f^-(x) = \max(-f(x), 0),$$

and then define

$$\int_\Omega f \, d\mu = \int_\Omega f^+ \, d\mu - \int_\Omega f^- \, d\mu.$$

The integral above may be finite or infinite. It is not defined if $\int_\Omega f^+ \, d\mu$ and $\int_\Omega f^- \, d\mu$ are both infinite.

Riemann vs. Lebesgue

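The most important concept of the Lebesgue integral is that it is the limit of approximate sums (as is the Riemann integral); for Ω = R:

[Figure: two plots of f(x) against x illustrating the approximating sums; one panel carries the label ∆x, the other the label f.]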

Riemann vs. Lebesgue integral

Theorem 1. Riemann-Lebesgue integral equivalence

Let f be a bounded and continuous function on [x1, x2] except at a countable number of points in [x1, x2]. Then both the Riemann and the Lebesgue integral with Lebesgue measure µ exist and are the same:

$$\int_{x_1}^{x_2} f(x) \, dx = \int_{[x_1, x_2]} f \, d\mu.$$

There are more functions which are Lebesgue integrable than Riemann integrable.

Popular example for Riemann vs. Lebesgue

Consider the function

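$$f(x) = \begin{cases} 0, & x \in \mathbb{Q}; \\ 1, & x \in \mathbb{R} \setminus \mathbb{Q}. \end{cases}$$

The Riemann integral

$$\int_0^1 f(x) \, dx$$

does not exist, since lower and upper sum do not converge to the same value. However, the Lebesgue integral

$$\int_{[0,1]} f \, d\lambda = 1$$

does exist, since f is the indicator function of R\Q and the rationals in [0, 1], being countable, have Lebesgue measure zero.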

Random Variable

Definition 11. Random variable

A real-valued random variable X is an F-measurable function defined on a probability space (Ω, F, P) mapping its sample space Ω into the real line R:

X : Ω → R.

Since X is F-measurable we have X^{-1} : B → F.

Distribution function

Definition 12. Distribution function

The distribution function of a random variable X, defined on a probability space

(Ω,F, P ), is defined by:

F(x) = P(X(ω) ≤ x) = P({ω : X(ω) ≤ x}).

From this the probability measure of the half-open intervals (a, b] in R is

P(a < X ≤ b) = P({ω : a < X(ω) ≤ b}) = F(b) − F(a).
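A small check of the identity P(a < X ≤ b) = F(b) − F(a), sketched under the assumption of a fair die with X(ω) equal to the number of dots (the names `F` and `prob_interval` are illustrative):

```python
from fractions import Fraction

# Hypothetical example: fair die, X(w) = number of dots shown.
omega = range(1, 7)
P = {w: Fraction(1, 6) for w in omega}
X = lambda w: w

def F(x):
    """Distribution function F(x) = P({w : X(w) <= x})."""
    return sum((P[w] for w in omega if X(w) <= x), Fraction(0))

def prob_interval(a, b):
    """P({w : a < X(w) <= b}), computed directly from the sample space."""
    return sum((P[w] for w in omega if a < X(w) <= b), Fraction(0))

print(F(0), F(3.5), F(6))
print(prob_interval(2, 5), F(5) - F(2))   # both sides of the identity
```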

Density function

Closely related to the distribution function is the density function. Let f : R → R be a nonnegative function satisfying $\int_{\mathbb{R}} f \, d\lambda = 1$. The function f is called a density function (with respect to the Lebesgue measure), and the associated probability measure for a random variable X, defined on (Ω, F, P), is

$$P(\{\omega : \omega \in A\}) = \int_A f \, d\lambda$$

for all A ∈ F.

Important Densities I

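• Poisson density or probability mass function (λ > 0):

$$f(x) = \frac{\lambda^x}{x!} e^{-\lambda}, \quad x = 0, 1, 2, \ldots$$

• Univariate normal density

$$f(x) = \frac{1}{\sqrt{2\pi}\,\sigma} e^{-\frac{1}{2}\left(\frac{x-\mu}{\sigma}\right)^2}.$$

The normal variable is abbreviated as N(µ, σ²).

• Multivariate normal density (x, µ ∈ R^n; Σ ∈ R^{n×n}):

$$f(x) = \frac{1}{\sqrt{(2\pi)^n \det(\Sigma)}} e^{-\frac{1}{2}(x-\mu)^T \Sigma^{-1} (x-\mu)}.$$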
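A quick numerical sanity check that these functions are normalized, as a sketch only: the parameter values µ = 1, σ = 2, λ = 3 and the helper `trapezoid` are arbitrary choices for illustration, and the normal density is integrated over µ ± 10σ instead of all of R.

```python
import math

def normal_pdf(x, mu=0.0, sigma=1.0):
    """Univariate normal density with mean mu and standard deviation sigma."""
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (math.sqrt(2.0 * math.pi) * sigma)

def poisson_pmf(k, lam):
    """Poisson probability mass function at the nonnegative integer k."""
    return lam ** k / math.factorial(k) * math.exp(-lam)

def trapezoid(f, a, b, steps=20000):
    """Composite trapezoidal rule on [a, b]."""
    h = (b - a) / steps
    inner = sum(f(a + i * h) for i in range(1, steps))
    return h * (0.5 * f(a) + inner + 0.5 * f(b))

# Integrate the N(1, 2^2) density over mu +/- 10 sigma.
normal_mass = trapezoid(lambda x: normal_pdf(x, mu=1.0, sigma=2.0), -19.0, 21.0)
# Sum the Poisson masses; the tail beyond k = 59 is negligible for lam = 3.
poisson_mass = sum(poisson_pmf(k, 3.0) for k in range(60))

print(normal_mass, poisson_mass)   # both should be very close to 1
```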

Important Densities II

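• Univariate Student t-density with ν degrees of freedom (x, µ ∈ R; σ ∈ R):

$$f(x) = \frac{\Gamma\left(\frac{\nu+1}{2}\right)}{\Gamma\left(\frac{\nu}{2}\right)\sqrt{\pi\nu}\,\sigma} \left(1 + \frac{1}{\nu}\frac{(x-\mu)^2}{\sigma^2}\right)^{-\frac{1}{2}(\nu+1)}$$

• Multivariate Student t-density with ν degrees of freedom (x, µ ∈ R^n; Σ ∈ R^{n×n}):

$$f(x) = \frac{\Gamma\left(\frac{\nu+n}{2}\right)}{\Gamma\left(\frac{\nu}{2}\right)\sqrt{(\pi\nu)^n \det(\Sigma)}} \left(1 + \frac{1}{\nu}(x-\mu)^T \Sigma^{-1} (x-\mu)\right)^{-\frac{1}{2}(\nu+n)}.$$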

Important Densities II

• The chi-square distribution with n degrees of freedom (dof) has the following density

$$f(x) = \frac{e^{-\frac{x}{2}} \left(\frac{x}{2}\right)^{\frac{n-2}{2}}}{2\,\Gamma\left(\frac{n}{2}\right)}$$

which is abbreviated as Z ∼ χ²(n) and where Γ denotes the gamma function.

• A chi-square distributed random variable Y is created by

$$Y = \sum_{i=1}^{n} X_i^2$$

where the X_i are independent standard normally distributed random variables N(0, 1).
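The sum-of-squares construction is easy to simulate. The sketch below is an illustration with arbitrary choices (n = 4, 50 000 samples, seed 0); it compares the sample moments with the known mean n and variance 2n of the χ²(n) distribution, which are standard facts not stated on the slide.

```python
import random

def chi_square_sample(n, rng):
    """One draw of Y = X_1^2 + ... + X_n^2 with independent X_i ~ N(0, 1)."""
    return sum(rng.gauss(0.0, 1.0) ** 2 for _ in range(n))

rng = random.Random(0)   # fixed seed so the run is reproducible
n = 4
samples = [chi_square_sample(n, rng) for _ in range(50_000)]

mean = sum(samples) / len(samples)
variance = sum((s - mean) ** 2 for s in samples) / len(samples)

print(mean, variance)    # should be close to n and 2n respectively
```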

Important Densities III

• A standard Student-t distributed random variable Y is generated by

$$Y = \frac{X}{\sqrt{Z/\nu}},$$

where X ∼ N(0, 1) and Z ∼ χ²(ν) are independent.

• Another important density is that of the Laplace distribution:

$$p(x) = \frac{1}{2\sigma} e^{-\frac{|x-\mu|}{\sigma}}$$

with mean µ and diffusion σ. The variance of this distribution is given as 2σ².

Expectation & Variance

Definition 13. Expectation of a random variable

The expectation of a random variable X, defined on a probability space

(Ω,F, P ), is defined by:

$$E[X] = \int_\Omega X \, dP = \int_\Omega x f \, d\lambda.$$

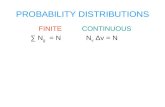

With this definition at hand, it does not matter what the sample space Ω is. The calculations for the two familiar cases of a finite Ω and Ω ≡ R with continuous random variables remain the same.

Definition 14. Variance of a random variable

The variance of a random variable X, defined on a probability space (Ω, F, P), is defined by:

$$\mathrm{var}(X) = E[(X - E[X])^2] = \int_\Omega (X - E[X])^2 \, dP = E[X^2] - E[X]^2.$$
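For a finite Ω the integral reduces to a weighted sum, so both definitions can be evaluated exactly. A minimal sketch, again assuming a fair die as a hypothetical example (the helper `expectation` is illustrative):

```python
from fractions import Fraction

# Hypothetical example: fair die, X(w) = number of dots shown.
P = {w: Fraction(1, 6) for w in range(1, 7)}
X = lambda w: w

def expectation(g):
    """E[g] = sum over the sample space of g(w) * P({w})."""
    return sum(g(w) * P[w] for w in P)

mean = expectation(X)
var_definition = expectation(lambda w: (X(w) - mean) ** 2)
var_shortcut = expectation(lambda w: X(w) ** 2) - mean ** 2

print(mean, var_definition, var_shortcut)
```

Both expressions for the variance agree, as the identity var(X) = E[X²] − E[X]² requires.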

Normally distributed random variables

The shorthand notation X ∼ N(µ, σ²) for normally distributed random variables with parameters µ and σ is often found in the literature. The following properties are useful when dealing with normally distributed random variables:

• If X ∼ N(µ, σ²) and Y = aX + b, then Y ∼ N(aµ + b, a²σ²).

• If X1 ∼ N(µ1, σ1²) and X2 ∼ N(µ2, σ2²), then X1 + X2 ∼ N(µ1 + µ2, σ1² + σ2²) (if X1 and X2 are independent).

Conditional Expectation I

From elementary probability theory (Bayes rule):

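$$P(A|B) = \frac{P(A \cap B)}{P(B)} = \frac{P(B|A)\,P(A)}{P(B)}, \quad P(B) > 0.$$

[Figure: Venn diagram of the events A and B in Ω with their intersection A ∩ B.]

$$E(X|B) = \frac{E(X I_B)}{P(B)}, \quad P(B) > 0,$$

where I_B denotes the indicator function of the event B.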

Conditional Expectation II

General case: (Ω,F, P )

Definition 15. Conditional expectation

Let X be a random variable defined on the probability space (Ω,F, P ) with

E[|X|] < ∞. Furthermore let G be a sub-σ-algebra of F (G ⊆ F). Then

there exists a random variable Y with the following properties:

1. Y is G-measurable.

2. E[|Y |] <∞.

3. For all sets $G \in \mathcal{G}$ we have

$$\int_G Y \, dP = \int_G X \, dP.$$

The random variable Y = E[X|G] is called the conditional expectation of X given G.

Conditional Expectation: Example

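Y is a piecewise constant approximation of X: on each set Gi, Y takes the average value of X over Gi.

[Figure: X(ω) and Y = E[X|G] plotted against ω, with the ω-axis divided into the sets G1, G2, G3, G4, G5.]

For the trivial σ-algebra {∅, Ω}:

$$Y = E[X \mid \{\emptyset, \Omega\}] = \int_\Omega X \, dP = E[X].$$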
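When G is generated by a finite partition, E[X|G] can be computed by averaging X over each block. The sketch below assumes a fair die and a hypothetical partition G1 = {1, 2}, G2 = {3, 4}, G3 = {5, 6}; it verifies the defining property ∫_G Y dP = ∫_G X dP on each block.

```python
from fractions import Fraction

# Hypothetical example: fair die, X(w) = w, partition into three blocks.
omega = range(1, 7)
P = {w: Fraction(1, 6) for w in omega}
X = {w: Fraction(w) for w in omega}
partition = [{1, 2}, {3, 4}, {5, 6}]

def integral(Z, G):
    """Integral of Z over the set G with respect to P (a finite sum)."""
    return sum(Z[w] * P[w] for w in G)

def conditional_expectation(X, partition):
    """E[X | sigma(partition)]: constant on each block, equal to the block average."""
    Y = {}
    for G in partition:
        p_G = sum(P[w] for w in G)
        average = integral(X, G) / p_G
        for w in G:
            Y[w] = average
    return Y

Y = conditional_expectation(X, partition)
print(Y)
print([(integral(Y, G), integral(X, G)) for G in partition])
# The trivial sigma-algebra corresponds to the single block Omega.
print(conditional_expectation(X, [set(omega)])[1])
```

With the single block Ω the result is the constant E[X], matching the trivial σ-algebra case.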

Conditional Expectation: Properties

• E(E(X|F)) = E(X).

• If X is F -measurable, then E(X|F) = X.

• Linearity: E(αX1 + βX2|F) = αE(X1|F) + βE(X2|F).

• Positivity: If X ≥ 0 almost surely, then E(X|F) ≥ 0.

• Tower property: If G is a sub-σ-algebra of F , then

E(E(X|F)|G) = E(X|G).

• Taking out what is known: If Z is G-measurable, then

E(ZX|G) = Z · E(X|G).

Summary

• σ-algebra: collection of the events of interest, closed under elementary set operations

• Borel σ-algebra: all the events of practical importance in R

• Lebesgue measure: defined as the length of an interval

• Density: transforms the Lebesgue measure into a probability measure

• Measurable function: the σ-algebra of the probability space is "rich" enough

• Random variable X: a measurable function X : Ω → R

• Expectation, Variance

• Conditional expectation is a piecewise constant approximation of the underlying random variable.
