
http://statwww.epfl.ch

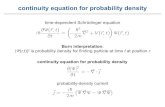

2.2 Conditional Probability

Contents

Conditional probability. Conditioning and its consequences. Multiple conditioning. Matching problems. Examples.

Independent events. Types of independence. Notions of reliability. Examples.

References: Ross (Chapter 3); Ben Arous notes (Sections II.5, II.4).

Exercises: 39–42, 44–54, 37, 38 of Recueil d’exercices.

Probabilite et Statistique I — Sections 2.2, 2.3 1


Conditional Probability

Example: Two fair dice are rolled, one red and one green. Let A and B be the events that ‘the total exceeds 8’, and that ‘the red die shows 6’. If B is known to have occurred, how does P(A) change? •

Definition: Let A, B be events of a probability space (Ω, F, P), such that P(B) > 0. Then the conditional probability of A given B is

$$P(A \mid B) = \frac{P(A \cap B)}{P(B)}.$$

When P(B) = 0, we adopt the convention P(A ∩ B) = P(A | B)P(B), both sides having the value zero. Thus

$$P(A) = P(A \cap B) + P(A \cap B^c) = P(A \mid B)P(B) + P(A \mid B^c)P(B^c)$$

even if P(B) = 0 or P(B^c) = 0.
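The dice example can be checked by brute-force enumeration. The following is a minimal sketch, assuming the 36 ordered outcomes (red, green) are equally likely; the helper names `prob` and `cond_prob` are illustrative and not part of the course material.

```python
from fractions import Fraction
from itertools import product

# Sample space: ordered pairs (red, green), all 36 assumed equally likely.
omega = list(product(range(1, 7), repeat=2))

def prob(event):
    """P(event) under the uniform distribution on omega."""
    return Fraction(sum(1 for w in omega if event(w)), len(omega))

def cond_prob(a, b):
    """P(a | b) = P(a and b) / P(b); requires P(b) > 0."""
    return prob(lambda w: a(w) and b(w)) / prob(b)

A = lambda w: w[0] + w[1] > 8    # the total exceeds 8
B = lambda w: w[0] == 6          # the red die shows 6
not_B = lambda w: not B(w)

p_A = prob(A)                    # unconditional probability of A
p_A_given_B = cond_prob(A, B)    # probability of A once B is known

# Decomposition P(A) = P(A | B)P(B) + P(A | B^c)P(B^c)
total = cond_prob(A, B) * prob(B) + cond_prob(A, not_B) * prob(not_B)

print(p_A, p_A_given_B, total)
```

Here P(A) = 5/18 while P(A | B) = 2/3, so knowing that the red die shows 6 raises the probability of A considerably.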


Conditional Probability II

Theorem 2.2 (Law of total probability): Let $\{B_i\}_{i=1}^{\infty}$ be pairwise disjoint events of a probability space $(\Omega, \mathcal{F}, P)$, and let the event $A$ satisfy $A \subset \bigcup_{i=1}^{\infty} B_i$. Then

$$P(A) = \sum_{i=1}^{\infty} P(A \cap B_i) = \sum_{i=1}^{\infty} P(A \mid B_i) P(B_i).$$

Theorem 2.3 (Bayes): Suppose that the conditions above hold, and that $P(A) > 0$. Then

$$P(B_j \mid A) = \frac{P(A \mid B_j) P(B_j)}{\sum_{i=1}^{\infty} P(A \mid B_i) P(B_i)}, \quad \text{for any } j \in \mathbb{N}.$$

In particular, these results are true if $\{B_i\}_{i=1}^{\infty}$ is a partition of $\Omega$.


Example 2.11: Cars are manufactured in towns called Farad, Gilbert and Henry. Of 1000 made in Farad 20% are defective, of 2000 made in Gilbert 10% are defective, and of 3000 made in Henry 5% are defective. You buy a car from a distant dealer. If D is the event that the car is defective, find (a) P(F | Hc), (b) P(D | Hc), (c) P(D), and (d) P(F | D). Assume you are equally likely to have bought any of the 6000 cars produced. •
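The four quantities in Example 2.11 follow from the law of total probability and Bayes' theorem. The sketch below works in exact fractions; the dictionary names `made`, `defect_rate` and `p_town` are illustrative choices, not notation from the slides.

```python
from fractions import Fraction

# Production counts and defect rates from the example (F, G, H = Farad, Gilbert, Henry).
made = {"F": 1000, "G": 2000, "H": 3000}
defect_rate = {"F": Fraction(20, 100), "G": Fraction(10, 100), "H": Fraction(5, 100)}

total_cars = sum(made.values())
# Each of the 6000 cars is equally likely, so P(town) is proportional to output.
p_town = {t: Fraction(n, total_cars) for t, n in made.items()}

p_not_H = 1 - p_town["H"]

# (a) P(F | H^c) = P(F) / P(H^c), since F is contained in H^c
p_F_given_not_H = p_town["F"] / p_not_H

# (b) P(D | H^c) = P(D and H^c) / P(H^c)
p_D_and_not_H = sum(defect_rate[t] * p_town[t] for t in ("F", "G"))
p_D_given_not_H = p_D_and_not_H / p_not_H

# (c) Law of total probability over the partition {F, G, H}
p_D = sum(defect_rate[t] * p_town[t] for t in made)

# (d) Bayes' theorem
p_F_given_D = defect_rate["F"] * p_town["F"] / p_D

print(p_F_given_not_H, p_D_given_not_H, p_D, p_F_given_D)
```

The results are 1/3, 2/15, 11/120 and 4/11 respectively.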

Example 2.12: You undergo a test for a rare disease which occurs by chance in 1 in every 100,000 people. The test is fairly reliable: if you have the disease it will correctly say so with probability 0.95; if you do not have the disease, the test will wrongly say that you do with probability 0.005. If the test says you do have the disease, what is the probability that this is a correct diagnosis? •
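Example 2.12 is a direct application of Bayes' theorem with the partition {disease, no disease}. A minimal sketch in exact arithmetic follows; the variable names are illustrative.

```python
from fractions import Fraction

prior = Fraction(1, 100_000)          # P(disease)
sensitivity = Fraction(95, 100)       # P(positive | disease)
false_positive = Fraction(5, 1000)    # P(positive | no disease)

# Law of total probability: P(positive)
p_positive = sensitivity * prior + false_positive * (1 - prior)

# Bayes' theorem: P(disease | positive)
posterior = sensitivity * prior / p_positive

print(posterior, float(posterior))
```

The posterior is 190/100189, about 0.0019: because the disease is so rare, a positive result is still overwhelmingly likely to be a false alarm.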


Conditioning is a key idea of probability, and gives elegant solutions to many problems.

Example 2.13 (The fly): A room has four walls, a floor, and a ceiling. A fly moves between these surfaces. If it leaves the floor or ceiling then it is equally likely to alight on any one of the four walls or the surface it has just left. If it leaves a wall then it is equally likely to alight on any one of the other three walls, or the floor, or the ceiling. Initially it is on the ceiling.

Find Fk, the probability that the fly is on the floor after k moves. •
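Conditioning on the fly's position after k moves gives a recursion for the probabilities of being on the ceiling, the floor, or some wall. The sketch below iterates that recursion exactly; lumping the four walls into one state, and the names `fly_distribution`, `c`, `f`, `w`, are modelling choices made here for illustration.

```python
from fractions import Fraction

def fly_distribution(k):
    """Return (P(ceiling), P(floor), P(on some wall)) after k moves,
    starting on the ceiling.

    From floor or ceiling: 1/5 to stay on that surface, 4/5 to reach a wall.
    From a wall: 3/5 to another wall, 1/5 to the floor, 1/5 to the ceiling.
    """
    fifth = Fraction(1, 5)
    c, f, w = Fraction(1), Fraction(0), Fraction(0)
    for _ in range(k):
        c, f, w = (
            fifth * (c + w),                      # ceiling: stayed, or came from a wall
            fifth * (f + w),                      # floor: stayed, or came from a wall
            4 * fifth * (c + f) + 3 * fifth * w,  # walls
        )
    return c, f, w

for k in range(6):
    print(k, fly_distribution(k)[1])
```

The computed values agree with the closed form F_k = 1/6 + (1/3)(−1/5)^k − (1/2)(1/5)^k obtained by solving the recursion, so F_k tends to 1/6 as k grows.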

Example 2.14 (Gambler’s ruin): You enter a casino with $k, and on each spin of the roulette wheel you bet $1 at evens on the event R that the result is red. The wheel is not fair, so P(R) = p < 1/2. If you lose all $k you must leave, and if you ever possess $K ≥ $k, you leave immediately. What is the probability that you leave with nothing? •
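Conditioning on the first spin gives r_k = p r_{k+1} + (1 − p) r_{k−1} for the ruin probability r_k, with boundary values r_0 = 1 and r_K = 0. The sketch below evaluates the standard solution of this recursion for p ≠ 1/2 and checks it against the recursion; the function name `ruin_probability` and the numerical values of p and K are illustrative assumptions.

```python
from fractions import Fraction

def ruin_probability(k, K, p):
    """P(leave with nothing | start with k, stop at 0 or K).

    Uses r_k = (rho**k - rho**K) / (1 - rho**K) with rho = (1 - p) / p,
    valid for p != 1/2 and K > 0.
    """
    rho = (1 - p) / p
    return (rho**k - rho**K) / (1 - rho**K)

# Illustrative values only: p = 9/19 (18 red slots out of 38), target K = 10.
p, K = Fraction(9, 19), 10
for k in range(K + 1):
    print(k, float(ruin_probability(k, K, p)))
```

With exact fractions the recursion and both boundary conditions can be verified term by term, which is a useful check on the formula.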


Conditional Probability III

Theorem 2.4: Let $(\Omega, \mathcal{F}, P)$ be a probability space, let $B \in \mathcal{F}$ have $P(B) > 0$, and define $Q(A) = P(A \mid B)$. Then $(\Omega, \mathcal{F}, Q)$ is a probability space. In particular,

1. if $A \in \mathcal{F}$, then $0 \le Q(A) \le 1$;

2. $Q(\Omega) = 1$;

3. if $\{A_i\}_{i=1}^{\infty}$ are pairwise disjoint (that is, $A_i \cap A_j = \emptyset$, $i \ne j$), then

$$Q\left(\bigcup_{i=1}^{\infty} A_i\right) = \sum_{i=1}^{\infty} Q(A_i).$$

Thus conditioning enables us to construct many different probability distributions, starting from a given one.


Multiple Conditioning

Theorem 2.5 (Prediction decomposition): Let $A_1, \ldots, A_n$ be events of a probability space. Then

$$P(A_1 \cap A_2) = P(A_2 \mid A_1) P(A_1)$$

$$P(A_1 \cap A_2 \cap A_3) = P(A_3 \mid A_1 \cap A_2) P(A_2 \mid A_1) P(A_1)$$

$$\vdots$$

$$P(A_1 \cap \cdots \cap A_n) = \prod_{i=2}^{n} P(A_i \mid A_1 \cap \cdots \cap A_{i-1}) \, P(A_1)$$

Example 2.15: Two fair dice are thrown. Define the events A, B, C to be ‘the total is at most 6’, ‘the total is even’, and ‘the first die shows 4’. (a) How does knowledge that B or C has occurred affect the probability of A? (b) Compute P(A ∩ B ∩ C). •
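Example 2.15 can be checked by enumeration, computing P(A ∩ B ∩ C) both directly and through the prediction decomposition. This is a sketch assuming 36 equally likely outcomes; part (a) is read here as conditioning on B and on C separately, which is one possible reading of the question, and the helper `prob` is illustrative.

```python
from fractions import Fraction
from itertools import product

omega = list(product(range(1, 7), repeat=2))  # (first die, second die)

def prob(event, given=lambda w: True):
    """P(event | given) by counting equally likely outcomes; needs P(given) > 0."""
    cond = [w for w in omega if given(w)]
    return Fraction(sum(1 for w in cond if event(w)), len(cond))

A = lambda w: w[0] + w[1] <= 6          # total is at most 6
B = lambda w: (w[0] + w[1]) % 2 == 0    # total is even
C = lambda w: w[0] == 4                 # first die shows 4

p_A = prob(A)
p_A_given_B = prob(A, B)
p_A_given_C = prob(A, C)

# (b) directly, and via P(C) P(B | C) P(A | B and C)
direct = prob(lambda w: A(w) and B(w) and C(w))
chain = prob(C) * prob(B, C) * prob(A, lambda w: B(w) and C(w))

print(p_A, p_A_given_B, p_A_given_C, direct, chain)
```

Here P(A) = 5/12, P(A | B) = 1/2 and P(A | C) = 1/3, and both routes give P(A ∩ B ∩ C) = 1/36.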


Matchings

Example 2.16: n men attend a dinner. Each leaves his hat in the cloakroom. When they leave, having drunk well, they choose their hats at random.

(a) What is the probability that no-one has the correct hat?

(b) What is the probability that exactly r choose the correct hats?

(c) What happens when n is very large? •
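The matching probabilities have a standard inclusion-exclusion form: the probability of exactly r correct hats is (1/r!) Σ_{j=0}^{n−r} (−1)^j / j!. The sketch below evaluates this formula and compares it with brute-force enumeration of permutations for small n; it assumes every assignment of hats is equally likely, and the function names are illustrative.

```python
from fractions import Fraction
from itertools import permutations
from math import exp, factorial

def p_exactly(n, r):
    """P(exactly r of n men get their own hat), inclusion-exclusion formula."""
    tail = sum(Fraction((-1) ** j, factorial(j)) for j in range(n - r + 1))
    return Fraction(1, factorial(r)) * tail

def p_exactly_brute(n, r):
    """Same probability by listing all n! permutations (small n only)."""
    perms = list(permutations(range(n)))
    hits = sum(1 for s in perms if sum(s[i] == i for i in range(n)) == r)
    return Fraction(hits, len(perms))

print(p_exactly(4, 0), p_exactly_brute(4, 0))   # no correct hats, n = 4
print(float(p_exactly(20, 0)), exp(-1))         # large n versus 1/e
```

For large n the probability of no match approaches e^{−1} ≈ 0.368, and the probability of exactly r matches approaches e^{−1}/r!.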

Note: Variations on this example have many applications.


2.3 Independence


Independent Events

Intuitively, saying ‘A and B are independent’ means that occurrence of one does not affect the occurrence of the other. That is, P(A | B) = P(A), so knowledge that B has occurred leaves P(A) unchanged.

Example 2.17 (Dice again): Two fair dice are thrown. Let A and B be the events ‘the total is even’ and ‘the first die shows an even number’. Compute P(A) and P(A | B). •

Example 2.18 (Boys): A family has two children.

(a) The first is known to be a boy. What is the probability that the second is a boy?

(b) One is known to be a boy. What is the probability that the other is a boy? •
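The two parts of Example 2.18 condition on different events, which is why the answers differ. The sketch below enumerates the four family types; it assumes each child is independently a boy or a girl with probability 1/2, and it reads ‘one is known to be a boy’ as ‘at least one is a boy’. Both are assumptions made here, not statements from the slides.

```python
from fractions import Fraction
from itertools import product

# (first child, second child); the four outcomes are assumed equally likely.
families = list(product("BG", repeat=2))

def cond_prob(event, given):
    """P(event | given) by counting; needs at least one outcome in `given`."""
    cond = [w for w in families if given(w)]
    return Fraction(sum(1 for w in cond if event(w)), len(cond))

both_boys = lambda w: w == ("B", "B")

# (a) condition on 'the first child is a boy'
p_a = cond_prob(both_boys, lambda w: w[0] == "B")

# (b) condition on 'at least one child is a boy'
p_b = cond_prob(both_boys, lambda w: "B" in w)

print(p_a, p_b)
```

Under these assumptions the answers are 1/2 for (a) and 1/3 for (b).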


Independence II

Definition: Let $(\Omega, \mathcal{F}, P)$ be a probability space. Two events $A, B \in \mathcal{F}$ are independent iff

$$P(A \cap B) = P(A) P(B).$$

In line with our intuition, this implies that, when $P(B) > 0$,

$$P(A \mid B) = \frac{P(A \cap B)}{P(B)} = \frac{P(A) P(B)}{P(B)} = P(A),$$

and by symmetry $P(B \mid A) = P(B)$ when $P(A) > 0$. •

Example 2.19: A deck of cards is well shuffled and a card drawn at random. Are the events A ‘the card is an ace’, and H ‘the card is a heart’ independent? What about the events A and K ‘the card is a king’? •
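The definition P(A ∩ B) = P(A)P(B) can be tested directly on a 52-card deck. This is a minimal sketch assuming a standard deck with each card equally likely; the helpers `prob` and `independent` are illustrative names.

```python
from fractions import Fraction
from itertools import product

ranks = ["A", "2", "3", "4", "5", "6", "7", "8", "9", "10", "J", "Q", "K"]
suits = ["hearts", "diamonds", "clubs", "spades"]
deck = list(product(ranks, suits))  # 52 equally likely cards

def prob(event):
    return Fraction(sum(1 for card in deck if event(card)), len(deck))

def independent(e1, e2):
    """True iff P(e1 and e2) == P(e1) * P(e2), using exact fractions."""
    return prob(lambda c: e1(c) and e2(c)) == prob(e1) * prob(e2)

ace = lambda c: c[0] == "A"
heart = lambda c: c[1] == "hearts"
king = lambda c: c[0] == "K"

print(independent(ace, heart))  # rank and suit
print(independent(ace, king))   # two disjoint events
```

Ace and heart are independent, since 1/52 = (1/13)(1/4). Ace and king are disjoint events with positive probability, so P(A ∩ K) = 0 differs from P(A)P(K) and they are not independent.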


Total, Pairwise and Conditional Independence

Definition: (a) Events $A_1, \ldots, A_n$ are independent (or mutually independent) if for any set of indices $F \subset \{1, \ldots, n\}$, it is true that

$$P\left(\bigcap_{i \in F} A_i\right) = \prod_{i \in F} P(A_i).$$

(b) Events $A_1, \ldots, A_n$ are pairwise independent if

$$P(A_i \cap A_j) = P(A_i) P(A_j), \quad 1 \le i < j \le n.$$

(c) Events $A_1, \ldots, A_n$ are conditionally independent given $B$ if

$$P\left(\bigcap_{i \in F} A_i \,\Big|\, B\right) = \prod_{i \in F} P(A_i \mid B)$$

for any set of indices $F \subset \{1, \ldots, n\}$. •


Note: Independence is a key idea which greatly simplifies many probability calculations. In practice it is wise to check the basis of claims that events are independent, as unsuspected dependence can make a big difference to computed probabilities.

Note: Mutual independence implies pairwise independence, but the converse is true only when n = 2.

Note: Mutual independence and conditional independence given B are different notions. In general neither implies the other; they coincide when B = Ω.

Example 2.20: A family has two children. Show that the events ‘the first child is a boy’, ‘the second child is a boy’, and ‘there is exactly one boy’ are pairwise but not mutually independent. •
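Example 2.20 can be verified by checking the product rule on every pair of events and then on the triple. The sketch assumes the four family types BB, BG, GB, GG are equally likely; the event names are illustrative.

```python
from fractions import Fraction
from itertools import combinations, product

families = list(product("BG", repeat=2))  # (first child, second child)

def prob(event):
    return Fraction(sum(1 for w in families if event(w)), len(families))

first_boy = lambda w: w[0] == "B"
second_boy = lambda w: w[1] == "B"
one_boy = lambda w: w.count("B") == 1
events = [first_boy, second_boy, one_boy]

# Pairwise independence: the product rule holds for each of the three pairs.
pairwise = all(
    prob(lambda w: e1(w) and e2(w)) == prob(e1) * prob(e2)
    for e1, e2 in combinations(events, 2)
)

# Mutual independence also needs the product rule for the triple.
p_all = prob(lambda w: all(e(w) for e in events))
p_product = prob(first_boy) * prob(second_boy) * prob(one_boy)

print(pairwise, p_all, p_product)
```

Each pair satisfies the product rule, but the three events cannot all occur together, so P(A1 ∩ A2 ∩ A3) = 0 while the product of the three probabilities is 1/8.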


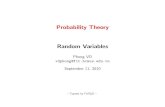

Example 2.21 (Birthdays): n people are in a room. What is the probability that they all have different birthdays?

[Figure: probability (vertical axis, 0.0 to 1.0) plotted against n (horizontal axis, 0 to 60).]
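The birthday probability follows from the prediction decomposition: each new person must avoid the birthdays already taken. The sketch below assumes 365 equally likely birthdays and independence between people (leap years and seasonal effects are ignored); the function name `p_all_different` is illustrative.

```python
def p_all_different(n, days=365):
    """P(n people all have different birthdays), assuming `days` equally
    likely birthdays and independence between people."""
    p = 1.0
    for i in range(n):
        # Person i + 1 must avoid the i birthdays already taken.
        p *= (days - i) / days
    return p

for n in (10, 23, 30, 50, 60):
    print(n, round(p_all_different(n), 4))
```

Under these assumptions the probability drops below 1/2 at n = 23 and is very small by n = 60.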


Example 2.22: In any given year the probability that a male driver makes a claim on his insurance is µ, independently of other years. The probability for a female driver is λ < µ. An insurance company has equal numbers of male and female drivers, and selects one at random.

(a) What is the probability that (s)he makes a claim this year?

(b) What is the probability that (s)he makes a claim in two consecutive years?

(c) If the insurance company selects a claimant at random, what is the probability that this claimant makes a claim in the following year?

(d) Show that knowledge that a claim has been made in one year increases the probability of a claim in a second year. •
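In Example 2.22 the claims in different years are conditionally independent given the driver's sex, but not independent overall. The sketch below works through (a) to (d) by conditioning on sex; the numerical values of µ and λ are hypothetical, chosen only to make the output concrete.

```python
from fractions import Fraction

# Hypothetical claim probabilities with lam < mu (not values from the slides).
mu, lam = Fraction(1, 5), Fraction(1, 10)
half = Fraction(1, 2)   # a randomly selected driver is male or female with probability 1/2

# (a) Law of total probability over {male, female}
p_claim = half * mu + half * lam

# (b) Given the sex, claims in different years are independent
p_two_claims = half * mu**2 + half * lam**2

# (c) P(claim next year | claim this year) = P(two claims) / P(claim)
p_next_given_claim = p_two_claims / p_claim

# (d) The conditional probability exceeds the unconditional one
increase = p_next_given_claim - p_claim

print(p_claim, p_two_claims, p_next_given_claim, increase)
```

In general (c) equals (µ² + λ²)/(µ + λ), and it exceeds (µ + λ)/2 because 2(µ² + λ²) − (µ + λ)² = (µ − λ)² > 0 when λ < µ.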


Series and Parallel Systems

An electrical system is composed of components labelled $1, \ldots, n$, which fail independently. Let $A_i$ be the event that the $i$th component fails, and suppose that $P(A_i) = p_i$. The event $B$, ‘system failure’, occurs if current cannot pass from one side of the system to the other.

If the components are arranged in parallel, then

$$P_P(B) = P(A_1 \cap \cdots \cap A_n) = \prod_{i=1}^{n} p_i.$$

If the components are arranged in series, then

$$P_S(B) = P(A_1 \cup \cdots \cup A_n) = 1 - \prod_{i=1}^{n} (1 - p_i).$$

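If $p < p_i < 1 - p$ for some fixed $p > 0$ and all $i$, then as $n \to \infty$ the parallel failure probability $\prod_{i=1}^{n} p_i \to 0$ and the series failure probability $1 - \prod_{i=1}^{n} (1 - p_i) \to 1$.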
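The two failure probabilities are products over independent components and are straightforward to evaluate. A minimal sketch follows; the function names `parallel_failure` and `series_failure` and the example probabilities are illustrative.

```python
from math import prod

def parallel_failure(p):
    """P(system fails) for independent components in parallel:
    the system fails only if every component fails."""
    return prod(p)

def series_failure(p):
    """P(system fails) for independent components in series:
    the system fails if at least one component fails."""
    return 1 - prod(1 - pi for pi in p)

# Illustrative values: n identical components with failure probability 0.1.
for n in (1, 5, 20, 50):
    p = [0.1] * n
    print(n, parallel_failure(p), series_failure(p))
```

Adding components makes a parallel system more reliable and a series system less reliable, which is the limiting behaviour as n grows.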


Reliability

Example 2.23 (Chernobyl): A nuclear power station depends on a safety system whose components are arranged as shown in the figure (blackboard). Components fail independently with probability p, and the system fails if electrical current cannot pass from A to B.

(a) What is the probability that the system fails?

(b) The components are manufactured in batches, which can be good or bad. For a good batch, $p = 10^{-6}$, while for a bad batch $p = 10^{-2}$. The probability that a batch is good is 0.99. What are the probabilities that the system fails (i) if the components come from different batches? (ii) if all the components come from the same batch? •
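The blackboard figure is not reproduced here, so the actual layout of the safety system is unknown. Purely to illustrate the structure of part (b), the sketch below takes the layout as a function `system_failure(p)` and uses a hypothetical stand-in of three components in parallel; it also assumes that ‘different batches’ means each component comes from its own independently chosen batch. Neither assumption comes from the slides.

```python
from math import isclose

P_GOOD_BATCH = 0.99
P_FAIL_GOOD = 1e-6   # component failure probability in a good batch
P_FAIL_BAD = 1e-2    # component failure probability in a bad batch

def system_failure(p):
    """HYPOTHETICAL layout: three independent components in parallel.
    Replace with the failure probability of the real layout."""
    return p ** 3

def different_batches(f):
    """(i) Each component from its own batch: components stay independent,
    each failing with the marginal probability."""
    p_marginal = P_GOOD_BATCH * P_FAIL_GOOD + (1 - P_GOOD_BATCH) * P_FAIL_BAD
    return f(p_marginal)

def same_batch(f):
    """(ii) One shared batch: condition on its quality, then average."""
    return P_GOOD_BATCH * f(P_FAIL_GOOD) + (1 - P_GOOD_BATCH) * f(P_FAIL_BAD)

print(different_batches(system_failure))
print(same_batch(system_failure))
```

For this stand-in layout the shared-batch system is several orders of magnitude more likely to fail, because components from one batch are only conditionally independent given the batch quality.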
