[IEEE Control (MSC) - Denver, CO, USA (2011.09.28-2011.09.30)] 2011 IEEE International Symposium on...


Real-time PI Controller Tuning via Unfalsified Control

Tanet Wonghong and Sebastian Engell

Abstract— In this paper, we present a new unfalsified adaptive control algorithm. This algorithm leads to a real-time controller tuning method. The algorithm consists of two main elements: 1) switching of controllers in a controller set by the ε-hysteresis switching algorithm and 2) optimization of the controller set via an evolutionary algorithm (EA). The real-time controller tuning is demonstrated for a nonminimum-phase continuous stirred tank reactor (CSTR) model.

Index Terms— Adaptive Control, Unfalsified Control, Real-time Controller Tuning, PI Controller, Evolutionary Algorithm

I. INTRODUCTION

Unfalsified control was initiated by Safonov et al. [1]. The main goal is to control a physical object without a plant model or with little knowledge about the plant. This technique uses the plant input data and the plant output data that are collected while one controller from a finite set of possible controllers is active to evaluate whether the controller should be switched and, if yes, to which new active controller. The starting point of our work was the idea to use the information also to improve on the set of controllers, because it is unrealistic to have a good controller for each operating point of a plant if the plant is unknown. In order to progress beyond switching within a predefined set of controllers, a major modification of the technique in [1] and [2] is required because by using the original cost function, instability of a non-active controller is in fact not detected, and therefore the cost function based upon the fictitious signals cannot be used to adapt the controller parameters (the optimization may converge to unstable controllers). In [3], we proposed a new fictitious error signal and a new cost function that overcome this problem. When a transition to a new operating point occurs, the set of controllers is updated. An evolutionary algorithm is used to search for the optimal controller, which is then used to generate a new set of controllers for the new operating conditions. The combination of switching of the active controller in the current set and the adaptation of the controller set leads to a data-based real-time controller tuning scheme for a given controller structure, e.g. PI and PID controllers.

In this paper, we introduce the new unfalsified adaptive

control algorithm in section II. This algorithm is the main

result of work reported in [4], [5], [6]. In section III, a

chemical engineering example is used to demonstrate that the

Tanet Wonghong is with Department of Electrical Engineering, Schoolof Engineering, Bangkok University (Rangsit Campus), 12120 Pathumthani,Thailand [email protected]

Sebastian Engell is with the Process Dynamics and Operations Group,Department of Biochemical and Chemical Engineering, TU Dortmund,44221 Dortmund, Germany [email protected]

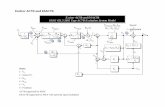

Fig. 1. Adaptive control system Φ(P,Cφ (t)(s))

algorithm can handle considerable changes of the dynamics

of the controlled process well. Finally, conclusions and an

outlook on future work are presented.

II. UNFALSIFIED ADAPTIVE CONTROL ALGORITHM

A. Adaptive Control System

We consider a SISO system in the continuous-time domain as shown in Fig. 1. A mapping $\Phi(P, C_{\phi(t)}(s)) : L_{2e} \to L_{2e}^{2}$ that transforms $r(t) \mapsto [u(t), y(t)]^T$ is called an adaptive control system. We denote $r(t) \in L_{2e}$ as the reference signal or the external excitation.

B. Unknown Plant

A black-box mapping $P : L_{2e} \to L_{2e}$ that transforms $u(t) \mapsto y(t)$ is called an unknown plant $P$, where $u(t)$ is the observed plant input signal and $y(t)$ is the observed plant output signal. We define a set of unknown linearized plant models of the unknown nonlinear plant $P$ as

$$\mathcal{P} \triangleq \left\{ P \;\middle|\; P_j(s) = \left[\begin{array}{c|c} A_j & b_j \\ \hline c_j & d_j \end{array}\right]_{p_j = (x_{s_j}, u_{s_j})} \right\} \tag{1}$$

where $P_j(s)$ denotes the (unknown) linearized plant at the current operating point $p_j = (x_{s_j}, u_{s_j})$. $p_{j-1}$ and $p_{j+1}$ are defined to be the previous operating point and the next operating point for $p_j$.

C. Set of Candidate Controllers

The set of candidate controllers is defined as

$$\mathcal{K} \triangleq \{ C \mid C_i(s),\ \forall i \in \mathcal{M} = \{1, 2, \ldots, m\} \} \tag{2}$$

where the linear system $C_i(s) \in \mathcal{K}$ is called the $i$th candidate controller. We require that the controllers are proper and that quotients of two controllers are stable.

2011 IEEE International Symposium on Intelligent Control (ISIC), Part of 2011 IEEE Multi-Conference on Systems and Control, Denver, CO, USA, September 28-30, 2011

978-1-4577-1103-9/11/$26.00 ©2011 IEEE

D. Adaptive Control Law

The adaptive control law is defined as

$$u(t) \triangleq c_{\phi(t)}(t) * e(t) \tag{3}$$

where $c_{\phi(t)}(t)$ is the inverse Laplace transform of $C_{\phi(t)}(s)$ and $*$ is the convolution integral. A piecewise continuous mapping $\phi : \mathbb{R}_+ \to \mathcal{M}$ is called a switching signal, which is generated by the unfalsified adaptive control algorithm to activate a candidate controller $C_{\phi(t^+)}(s) \in \mathcal{K}$. We define $C_{i(t)}(s),\ \forall i(t) \in \mathcal{M} - \{\phi(t)\}$ as the non-active candidate controllers.

E. Set of Data

The set of data is defined as

$$\mathcal{D} \triangleq \{ d \mid d(t) = [\, r(t) \ \ u(t) \ \ y(t) \,]^T \} \tag{4}$$

where $d(t) \in L_{2e}^{3}$ is called a data signal vector. The truncated data set is

$$\mathcal{D}_\tau = \{ d_\tau \mid d_\tau(t) = [\, r_\tau(t) \ \ u_\tau(t) \ \ y_\tau(t) \,]^T \} \tag{5}$$

where $d_\tau(t)$ is called a truncated data signal vector or an observed data signal vector for a given time window $\tau = [t_a, t_b] \subset \mathbb{R}_+$.

F. Original Fictitious Signals and Original Cost Function

In [1], the original fictitious reference signal and the original fictitious error signal of $C_i(s)$ are defined as

$$r_i(t) \triangleq c_i^{-1}(t) * u_\phi(t) + y_\phi(t), \tag{6}$$

$$e_i(t) \triangleq r_i(t) - y_\phi(t) = c_i^{-1}(t) * u_\phi(t). \tag{7}$$

In [2], the original cost function is defined as

$$J_i(t) \triangleq \frac{\|e_i(t)\|^2_{\tau=[0,t]} + \gamma \|u_\phi(t)\|^2_{\tau=[0,t]}}{\|r_i(t)\|^2_{\tau=[0,t]}} \tag{8}$$

where $\gamma$ is a positive scalar.

G. The Relationship of the Fictitious Reference Signal and the True Reference Signal

Inserting $U_\phi(s)$ and $Y_\phi(s)$ into (6),

$$R_i(s) = \frac{C_\phi(s) S_\phi(s)}{C_i(s) S_i(s)} R_j(s) = \Lambda_i(s) R_j(s) \tag{9}$$

where $S_\phi(s) = \frac{1}{1 + C_\phi(s) P_j(s)}$ is the unknown sensitivity function of the closed-loop pair $(C_\phi(s), P_j(s))$ and $S_i(s) = \frac{1}{1 + C_i(s) P_j(s)}$ is the unknown sensitivity function of the closed-loop pair $(C_i(s), P_j(s))$. The mapping $\Lambda_i : L_{2e} \to L_{2e}$, $r_j(t) \mapsto r_i(t)$ is called the fictitious reference signal generator of $C_i(s)$.
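As an intermediate step, (9) can be obtained from the Laplace transform of (6) together with the closed-loop relations of the active loop. The relations $U_\phi(s) = C_\phi(s) S_\phi(s) R_j(s)$ and $Y_\phi(s) = P_j(s) U_\phi(s)$ used here are an assumption in the sense that they are implied by the unity-feedback loop of Fig. 1 and the sensitivity functions above rather than written out in the text:

$$\begin{aligned}
R_i(s) &= C_i(s)^{-1} U_\phi(s) + Y_\phi(s) \\
&= \left( C_i(s)^{-1} + P_j(s) \right) C_\phi(s) S_\phi(s) R_j(s) \\
&= \frac{1 + C_i(s) P_j(s)}{C_i(s)} \, C_\phi(s) S_\phi(s) R_j(s)
= \frac{C_\phi(s) S_\phi(s)}{C_i(s) S_i(s)} R_j(s).
\end{aligned}$$

In particular, for $i = \phi$ the factor reduces to $\Lambda_\phi(s) = 1$, so the fictitious reference of the active controller coincides with the true reference.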

H. Λi(s) for a PI Controller Structure

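A PI controller structure, e.g. $C_i^{PI}(s) = k_{p_i}\left(1 + \frac{1}{T_{n_i} s}\right)$, is considered using (6):

$$R_i(s) = \frac{1}{C_i^{PI}(s)} U_\phi(s) + Y_\phi(s) = \frac{s}{k_{p_i} s + \frac{k_{p_i}}{T_{n_i}}} U_\phi(s) + Y_\phi(s). \tag{10}$$

We realize (10) by a state space representation. The fictitious signal generator of $C_i(s)$ can be obtained as

$$\Lambda_i^{PI}(s) = \left[\begin{array}{c|cc} -\frac{1}{T_{n_i}} & \frac{1}{k_{p_i}} & 0 \\ \hline -\frac{1}{T_{n_i}} & \frac{1}{k_{p_i}} & 1 \end{array}\right] = \left[\begin{array}{c|cc} a_i & b_i & 0 \\ \hline c_i & d_i & 1 \end{array}\right]. \tag{11}$$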
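As a quick consistency check, (11) can be read as the usual packed state-space notation with state matrix $a_i$, input row $[\,b_i \ \ 0\,]$ for the inputs $(u_\phi, y_\phi)$, output matrix $c_i$ and feedthrough row $[\,d_i \ \ 1\,]$. This reading is an assumption, since the partitioning is not spelled out in the text. Under it, the transfer function from $u_\phi$ to $r_i$ reproduces the first term of (10), while $y_\phi$ passes through with unit gain:

$$c_i (s - a_i)^{-1} b_i + d_i
= -\frac{1}{T_{n_i}} \cdot \frac{1}{s + \frac{1}{T_{n_i}}} \cdot \frac{1}{k_{p_i}} + \frac{1}{k_{p_i}}
= \frac{1}{k_{p_i}} \cdot \frac{s}{s + \frac{1}{T_{n_i}}}
= \frac{s}{k_{p_i} s + \frac{k_{p_i}}{T_{n_i}}}.$$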

I. New Fictitious Error Signal and New Cost Function

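The new fictitious error signal from [3] is defined as

$$s_i(t) \triangleq r_i(t) *^{-1} e_i(t), \qquad e_i^*(t) \triangleq s_i(t) * r_j(t) \tag{12}$$

where $*^{-1}$ is the deconvolution operator. Note that (12) can be computed approximately using sampled signals. Let

$$S_i(z) = \sum_{k=0}^{\infty} s_i(k) \, z^{-k}$$

and assume that $\frac{C_\phi(z)}{C_i(z)} \neq 0$ as $z \to \infty$ and $r_j(0) \neq 0$. Then the impulse sequence $s_i(k)$ can be computed from the measurements $u_\phi(k)$ and $y_\phi(k)$ by deconvolution. With the impulse sequence $c_i^{-1}(k)$ of the inverse controller,

$$C_i(z)^{-1} = \sum_{k=0}^{\infty} c_i^{-1}(k) \, z^{-k},$$

and using (7), we obtain

$$\begin{aligned}
e_i(k) &= \sum_{l=0}^{k} c_i^{-1}(l) \, u_\phi(k-l) \\
r_i(k) &= e_i(k) + y_\phi(k) \\
e_i(0) &= r_i(0) \, s_i(0) \\
e_i(1) &= r_i(1) \, s_i(0) + r_i(0) \, s_i(1) \\
&\;\;\vdots \\
e_i(l) &= r_i(l) \, s_i(0) + r_i(l-1) \, s_i(1) + \cdots + r_i(0) \, s_i(l)
\end{aligned}$$

from which it follows that

$$\begin{aligned}
s_i(0) &= e_i(0) / r_i(0) \\
s_i(1) &= [\, e_i(1) - s_i(0) \, r_i(1) \,] / r_i(0) \\
&\;\;\vdots \\
s_i(l) &= \Big[\, e_i(l) - \underbrace{\sum_{m=0}^{l-1} s_i(m) \, r_i(l-m)}_{\text{convolution up to } l-1} \,\Big] / r_i(0)
\end{aligned}$$

Thus,

$$e_i^*(k) = \sum_{l=0}^{k} s_i(l) \, r_j(k-l). \tag{13}$$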
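The recursion leading to (13) can be sketched in a few lines of Python. This is a minimal illustration on sampled signals stored as plain lists, not the authors' implementation; the function names `convolve_causal`, `deconvolve` and `new_fictitious_error` are hypothetical.

```python
def convolve_causal(a, b):
    """Truncated causal convolution: out[k] = sum_{l=0..k} a[l] * b[k-l]."""
    n = min(len(a), len(b))
    return [sum(a[l] * b[k - l] for l in range(k + 1)) for k in range(n)]


def deconvolve(e, r):
    """Solve e = r * s for the sequence s, sample by sample.

    Implements s(l) = [e(l) - sum_{m<l} s(m) r(l-m)] / r(0),
    which requires r(0) != 0.
    """
    if r[0] == 0:
        raise ValueError("r[0] must be nonzero for the deconvolution")
    s = []
    for l in range(min(len(e), len(r))):
        partial = sum(s[m] * r[l - m] for m in range(l))
        s.append((e[l] - partial) / r[0])
    return s


def new_fictitious_error(e_i, r_i, r_j):
    """e*_i(k) = sum_{l=0..k} s_i(l) r_j(k-l), with s_i from e_i and r_i."""
    s_i = deconvolve(e_i, r_i)
    return convolve_causal(s_i, r_j)
```

A convenient sanity check for such a sketch: if the true reference equals the fictitious reference ($r_j = r_i$), the function returns $e_i^* = e_i$ up to rounding.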


Fig. 2. Cost monitoring

The new cost function used to measure the performance of a candidate controller $C_i(s)$ is defined as

$$J_i^*(t) \triangleq \frac{\|e_i^*(t)\|^2_{\tau=[t_j, t_{s_j}]} + \gamma \|u_i^*(t)\|^2_{\tau=[t_j, t_{s_j}]}}{\|r_j(t)\|^2_{\tau=[t_j, t_{s_j}]}} \tag{14}$$

where $u_i^*(t) = c_i(t) * e_i^*(t)$, $t_j$ is the excitation time of the excitation $r_j$, and $t_{s_j}$ is the closed-loop settling time after the excitation $r_j$.

J. Falsified Controllers and Unfalsified Controllers

Following the approach in [3], unsatisfactory closed-loop performance of a non-active candidate controller $C_i(s)$ is detected using the criterion

$$J_i^*(r_j(t), u_\phi(t), y_\phi(t), t) = J_i^*(e_i^*(t), u_i^*(t), r_j(t), t) < \alpha \tag{15}$$

where $\alpha$ is called the unfalsification threshold. If this criterion is met, $C_i(s)$ is said to be an unfalsified controller. Otherwise, $C_i(s)$ is said to be a falsified controller.
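The cost (14) and the test (15) can be sketched for sampled signals as follows. This is only an illustration under stated assumptions: the squared norms are approximated by sums of squares over the samples in the window (the sampling period cancels in the ratio), and the names `squared_norm`, `new_cost` and `is_unfalsified` as well as the threshold values used in any call are hypothetical, since no value of $\alpha$ is given in the text.

```python
def squared_norm(signal):
    """Sum of squares of the samples inside the evaluation window."""
    return sum(v * v for v in signal)


def new_cost(e_star, u_star, r_j, gamma):
    """Sampled version of J*_i: (|e*|^2 + gamma |u*|^2) / |r_j|^2."""
    denom = squared_norm(r_j)
    if denom == 0.0:
        raise ValueError("the reference must be excited inside the window")
    return (squared_norm(e_star) + gamma * squared_norm(u_star)) / denom


def is_unfalsified(cost, alpha):
    """A candidate is unfalsified if its cost is below the threshold alpha."""
    return cost < alpha
```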

K. Cost Monitoring

Using $J_i^*$, the cost monitoring scheme evaluates the performances of all candidate controllers as shown in Fig. 2. At each time, $J_i^*(k),\ \forall i \in \mathcal{M}$ will be sent to the switching mechanism to decide on the next switching signal $\phi(k+1)$. Note that the cost monitoring is switched on when there is a new excitation and is switched off when the EA is activated.

L. Switching Mechanism

A switching mechanism can be considered a good algorithm for unfalsified control if it can discover the best unfalsified controller in a given set of controllers within a reasonable period of time. In [7], such an algorithm was proposed, the so-called ε-hysteresis switching algorithm:

1) Initialize: choose $\varepsilon > 0$ and $\phi(t_{j_0}) = m \in \mathcal{M}$ for a time window $\tau = [t_{j_0}, t_{s_j}]$.

2) $t_{j_k} := t_{j_{k+1}}$

if $J^*_{\phi(t_{j_k})}(t_{j_k}) \geq \min_{i \in \mathcal{M}} J_i^*(t_{j_k}) + \varepsilon$

then $\phi(t_{j_{k+1}}) := \arg\min_{i \in \mathcal{M}} J_i^*(t_{j_k})$

else $\phi(t_{j_{k+1}}) := \phi(t_{j_k})$.

3) Go to 2 until $t_{j_k} = t_{s_j}$.
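The three steps above can be sketched as a small loop over sampled cost values. This is a minimal sketch, assuming the costs $J_i^*$ of all candidates are already available at each sample as a list indexed by controller number; the names `hysteresis_switch` and `run_switching` are hypothetical.

```python
def hysteresis_switch(active, costs, eps):
    """One update of the epsilon-hysteresis rule.

    Switch to the cheapest candidate only if the active controller is worse
    than the best one by at least eps; otherwise keep the active controller.
    """
    best = min(range(len(costs)), key=lambda i: costs[i])
    if costs[active] >= costs[best] + eps:
        return best
    return active


def run_switching(cost_history, initial, eps):
    """Apply the rule over a window; returns the switching signal phi."""
    phi = [initial]
    for costs in cost_history:
        phi.append(hysteresis_switch(phi[-1], costs, eps))
    return phi
```

The hysteresis constant prevents chattering: a candidate that is better by less than `eps` does not trigger a switch.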

M. Evolutionary Algorithm: EA

In this paper, the EA is implemented in the specific

form of an evolution strategy (ES) where each individual

is represented by a vector of controller parameters and by

a vector of strategy parameters that control the mutation

strengths. We use the ES as an optimizer in the context of the

unfalsified control theory. The ES is used for the adaptation

of the set of controllers because it manipulates a population

of candidate controllers and can handle non-convex cost

functions and is able to escape from local minima.

N. Evolution Strategy

The idea of the ES was first introduced in [8] and further

developed in [9]. It is an optimization algorithm for a fitness

function f (ci) where ci ∈K and f ∈ F:

c∗ = argmin f (ci). (16)

ci denotes an n-dimensional object parameter vector (e.g.

a controller parameter vector) in an object space K (e.g. a

search space of candidate controllers) and c∗ denotes the

minimizer (e.g. an optimal controller parameter vector).

1) Representation of Individuals: The ES works with a population $P$ of size $\mu + \lambda$, where $\mu$ denotes the number of parent individuals and $\lambda$ denotes the number of offspring individuals. An individual $a_i$ consists of an object parameter vector $c_i$, an internal (self-adaptation) vector $s_i \in \mathcal{S}$, and its fitness value $f(c_i)$:

$$a_i \triangleq (c_i, s_i, f(c_i)). \tag{17}$$

$s_i$ denotes an $n$-dimensional strategy parameter vector. $s_i$ is not involved in the computation of the fitness of the individual but it determines the generation of the offspring. The vector space of individuals where the evolution of the population occurs is

$$\mathcal{A} \triangleq \mathcal{K} \times \mathcal{S} \times \mathcal{F}.$$

The individuals $a_i$ form a population, i.e. $\mu$ parent individuals $a_i$, $i = 1, 2, \ldots, \mu$ and $\lambda$ offspring individuals $a_k$, $k = 1, 2, \ldots, \lambda$. At generation $q$, the populations of the parent individuals and the offspring individuals are

$$P_\mu(q) \triangleq \{a_1(q), a_2(q), \ldots, a_\mu(q)\}, \tag{18}$$

$$P_\lambda(q) \triangleq \{a_1(q), a_2(q), \ldots, a_\lambda(q)\}. \tag{19}$$

2) Recombination: The basic recombination in the ES uses two parent individuals to create one child individual. To obtain $\lambda$ child individuals, the recombination is performed $\lambda$ times. There are two recombination variants: discrete recombination and intermediate recombination. Two parent vectors $x$ and $y$ are chosen uniformly at random from $P_\mu(q)$ to produce a child vector $z'$:

$$z'_j = \begin{cases} x_j \text{ or } y_j \text{ with probability } 0.5 \text{ each} & : \text{discrete} \\ (x_j + y_j)/2 & : \text{intermediate} \end{cases} \tag{20}$$

where $j = 1, 2, \ldots, n$. The former mechanism is used for the object parameter vectors and the latter is used for the strategy parameter vectors.


3) Mutation: Mutation is very important for the ES because it is the source of the genetic variations. After the recombination, each child individual is mutated to an offspring individual. Mathematically, each object parameter vector $c'$ is mutated using a normal (Gaussian) distribution $\mathcal{N}(0, s_j)$:

$$c_j = c'_j + s_j \cdot \mathcal{N}(0, 1) \tag{21}$$

where $j = 1, 2, \ldots, n$. According to the self-adaptation mechanism of the ES, each strategy parameter $s'_j$ is modified log-normally [10]:

$$s_j = s'_j \cdot \exp(\delta \cdot \mathcal{N}(0, 1)) \tag{22}$$

where $\delta$ is the learning rate, which is inversely proportional to the square root of the problem size ($\delta \propto 1/\sqrt{n}$) [11]. An offspring individual is defined as

$$a_k \triangleq (c_k, s_k, f(c_k)), \quad k = 1, 2, \ldots, \lambda. \tag{23}$$
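The recombination rule (20) and the mutation rules (21) and (22) can be sketched as follows. This is an illustrative sketch only. It assumes that the two parents are drawn without replacement, that the proportionality constant in $\delta \propto 1/\sqrt{n}$ is 1, and that the strategy parameters are mutated first so that the new $s_j$ is used in (21). None of these details is stated in the text, and the names `recombine` and `mutate` are hypothetical.

```python
import math
import random


def recombine(parents, rng):
    """Create one child from two randomly chosen parents.

    parents: list of (c, s) pairs, c = object parameters, s = strategy
    parameters. Discrete recombination for c, intermediate for s.
    """
    (cx, sx), (cy, sy) = rng.sample(parents, 2)  # assumption: distinct parents
    c_child = [xj if rng.random() < 0.5 else yj for xj, yj in zip(cx, cy)]
    s_child = [(xj + yj) / 2.0 for xj, yj in zip(sx, sy)]
    return c_child, s_child


def mutate(c_child, s_child, rng, delta=None):
    """Log-normal update of the step sizes, then Gaussian mutation of c."""
    if delta is None:
        delta = 1.0 / math.sqrt(len(c_child))  # assumption: constant of 1
    s_new = [sj * math.exp(delta * rng.gauss(0.0, 1.0)) for sj in s_child]
    c_new = [cj + sj * rng.gauss(0.0, 1.0) for cj, sj in zip(c_child, s_new)]
    return c_new, s_new
```

Because the step sizes are multiplied by an exponential factor, they stay positive without any explicit bound.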

4) Selection: There exist two different selection mechanisms in evolution strategies, $(\mu, \lambda)$- and $(\mu + \lambda)$-selection. The difference between the two is the set of individuals involved in the selection. The former selects the $\mu$ best individuals out of the offspring, while the latter selects the $\mu$ best individuals out of the union of parents and offspring to form the next population.

5) Termination: The ES is used to deal with the non-convexity of the cost function. From the population of $\mu$ parents with controllers $c_i,\ \forall i = 1, \ldots, \mu$, let $f_q^1$ be the best fitness value

$$f_q^1 = \min_i \{ f(c_i^q),\ \forall i = 1, \ldots, \mu \} \tag{24}$$

and $f_q^\mu$ be the worst fitness value

$$f_q^\mu = \max_i \{ f(c_i^q),\ \forall i = 1, \ldots, \mu \}. \tag{25}$$

Then the search is terminated at generation $q$ if

$$| f_q^1 - f_q^\mu | < \iota \tag{26}$$

where $\iota$ is a small positive constant.
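The two selection variants and the termination test (26) can be sketched as follows. Individuals are represented as tuples `(c, s, fitness)` in the spirit of (17); the function names `select` and `has_converged` are hypothetical, and lower fitness is taken to be better because the fitness is a cost.

```python
def select(parents, offspring, mu, plus=True):
    """Return the mu best individuals.

    plus=True  : (mu + lambda)-selection, parents compete with offspring.
    plus=False : (mu, lambda)-selection, only offspring are considered.
    """
    pool = parents + offspring if plus else list(offspring)
    return sorted(pool, key=lambda individual: individual[2])[:mu]


def has_converged(parents, iota):
    """Stop when best and worst parent fitness differ by less than iota."""
    fitness = [individual[2] for individual in parents]
    return abs(min(fitness) - max(fitness)) < iota
```

With $(\mu + \lambda)$-selection the best parent is never lost, so the best fitness value cannot get worse from one generation to the next.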

O. Adaptive Controller Set

A key point of this work is to show how the current set of candidate controllers can be adapted when a new operating point is commanded. $\mathcal{K}(t)$ denotes a controller set that varies with time. In the algorithm, four different sets of candidate controllers are defined.

The set of initial candidate controllers

$$\mathcal{K}_0 \triangleq \mathcal{K}(t = 0)$$

is predefined for the start-up of the unknown plant $P$. Thus $m$ candidate controllers are chosen freely, based upon prior knowledge, at the beginning. By $\mathcal{K}_j = \mathcal{K}(t_j < t < t_j^*)$, we denote the set of controllers at the current operating point $p_j$.

After convergence or termination of the ES, a set of usually almost identical candidate controllers at $p_j$ is obtained:

$$\mathcal{K}_j^* \triangleq \mathcal{K}_{opt}(t = t_j^*). \tag{27}$$

$\mathcal{K}_j^*$ is the last population of the ES, which used the observed data vector $d_{\tau_j = [t_j, t_j^*]}(t)$ and the new cost function $J_i^*(t)$ as the fitness function.

Fig. 3. Unfalsified adaptive control algorithm

After convergence of the ES at $t_j^*$, the controllers in the set have all converged to the best controller, so it does not make sense to use this as the set of controllers for the current operating point. We need to diversify the set to maintain variety in the set of candidate controllers. The set of diversified candidate controllers at $p_j$ is

$$\mathcal{K}_j^{\diamond} = \mathcal{K}(t_j^* < t < t_{j+1}) \tag{28}$$

where $\diamond$ is a diversity operator. The optimal solution $c_j^* \in \mathbb{R}^n$ is used as a center to generate the new set $\mathcal{K}_j^{\diamond}$, i.e. the candidate controllers are distributed around $c_j^*$. We define

$$\mathcal{K}_j^{\diamond} \triangleq \{ c_i \mid \|c_i - c_j^*\| \leq \diamond,\ i = 1, 2, \ldots, m-1,\ \forall c_i \in \mathbb{R}^n \}. \tag{29}$$

Therefore, at the next operating point $p_{j+1}$,

$$\mathcal{K}_{j+1} \triangleq \mathcal{K}_j^{\diamond}. \tag{30}$$

P. Central Data Processing Unit

In Fig. 3, we illustrate the overall algorithm by a block diagram. There are two main loops, the control loop with the active controller and the intelligent loop (the dashed box) that computes a new switching signal and a new set of controllers. There are three tasks that the central data processing unit performs:

• To detect a change of the current set-point, to provide time-window data, and to compute the initial states of $\Lambda_i(s)$ for all candidate controllers that are evaluated in the cost monitoring and in the EA.

• To send a command to switch the cost monitoring on and off via the signal $O_{CM}(k)$ and to activate the EA via the signal $O_{EA}(k)$ for processing the controller adaptation.

• To assign the current active controller from the current set of candidate controllers to the ε-hysteresis switching algorithm to activate the next active controller, and to compute the initial state of the next active controller at the current switching time.


This scheme performs two nested controller adaptations:

• Switching between the candidate controllers in the set of controllers, which can vary with time.

• Online recomputation of the set of controllers by the ES and the diversity operator.

This combination leads to a new method for real-time controller tuning without using an explicit plant model.

III. APPLICATION TO A NONLINEAR SYSTEM

As a benchmark problem for nonlinear control design, we consider the production of cyclopentenol (B) from cyclopentadiene (A) as presented in [13] and [14]. Due to the strong reactivity of the raw material and the product, dicyclopentadiene (D) is produced as a side product, and cyclopentanediol (C) as a consecutive product by addition of another water molecule. The reaction scheme is

$$A \xrightarrow{k_1} B \xrightarrow{k_2} C, \qquad 2A \xrightarrow{k_3} D. \tag{31}$$

We assume that the reactor temperature is fixed at the operating point $\vartheta_s = 134.14\,^{\circ}\mathrm{C}$ for the entire operation. Using this assumption, a SISO nonlinear model results from mass balances for the components A and B:

$$\begin{aligned}
\dot{x}_1 &= -k_1 x_1 - k_3 x_1^2 + (x_{1,in} - x_1)\, u \\
\dot{x}_2 &= k_1 x_1 - k_2 x_2 - x_2 u \\
y &= x_2
\end{aligned} \tag{32}$$

where $x_1$ is the concentration of the reactant A ($c_A$) and $x_2$ is the concentration of the desired product B ($c_B$). The parameter values are $k_1 = 50.6\,\mathrm{h^{-1}}$, $k_2 = 50.6\,\mathrm{h^{-1}}$, $k_3 = 6.74\,\mathrm{l/(mol \cdot h)}$, and $x_{1,in} = c_{A,in}$. Control of the product concentration $c_B$ is specified such that any value in the range $0.85\,\mathrm{mol/l} \leq c_B \leq 0.95\,\mathrm{mol/l}$ can be achieved without a steady state error for the unmeasured inlet concentration $c_{A,in} = 5.1\,\mathrm{mol/l}$. $u$ is the manipulated variable subject to the following constraint:

$$5\,\mathrm{h^{-1}} \leq u \leq 35\,\mathrm{h^{-1}}.$$

The steady state behavior of the CSTR is plotted in Fig. 4. Note that the box indicates the desired operating range ($0.85\,\mathrm{mol/l} \leq c_B \leq 0.95\,\mathrm{mol/l}$) and the circle is the main operating point $c_B = 0.9\,\mathrm{mol/l}$ for the start-up.
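The steady state curve mentioned above can be reproduced from (32) by setting both derivatives to zero. The sketch below is an illustration under the parameter values given in the text; the function names `rhs` and `steady_state` are hypothetical, and the closed-form root is derived here rather than taken from the paper. Setting $\dot{x}_1 = 0$ gives the quadratic $k_3 x_1^2 + (k_1 + u) x_1 - x_{1,in} u = 0$, whose positive root is the physically meaningful one, and $\dot{x}_2 = 0$ gives $x_2 = k_1 x_1 / (k_2 + u)$.

```python
import math

K1, K2, K3 = 50.6, 50.6, 6.74   # 1/h, 1/h, l/(mol h), values from the text
X1_IN = 5.1                     # inlet concentration c_A,in in mol/l


def rhs(x1, x2, u):
    """Right-hand side of the mass balances for A and B."""
    dx1 = -K1 * x1 - K3 * x1 ** 2 + (X1_IN - x1) * u
    dx2 = K1 * x1 - K2 * x2 - x2 * u
    return dx1, dx2


def steady_state(u):
    """Steady state (x1, x2) for a constant input u > 0."""
    b = K1 + u
    x1 = (-b + math.sqrt(b * b + 4.0 * K3 * X1_IN * u)) / (2.0 * K3)
    x2 = K1 * x1 / (K2 + u)
    return x1, x2
```

Evaluating `steady_state(u)[1]` over the admissible input range gives the nonlinear relation between the input and the product concentration that a single fixed controller has to cope with.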

A. Initialization of the Algorithm

The simulations are carried out under the following assumptions:

1) The CSTR model is used as the unknown plant $P$ with a finite time delay of 0.02 h due to an analytic instrument for the measurement of the product concentration.

2) Operation scheme:

$$r(t) = \begin{cases}
0\,\mathrm{mol/l} & : 0 \leq t < 0.15\,\mathrm{h}; \\
0.9\,\mathrm{mol/l} & : 0.15\,\mathrm{h} \leq t < 4.15\,\mathrm{h}; \\
0.95\,\mathrm{mol/l} & : 4.15\,\mathrm{h} \leq t < 6.15\,\mathrm{h}; \\
0.85\,\mathrm{mol/l} & : 6.15\,\mathrm{h} \leq t < 8.15\,\mathrm{h}; \\
0.92\,\mathrm{mol/l} & : 8.15\,\mathrm{h} \leq t < 11.15\,\mathrm{h}; \\
0.88\,\mathrm{mol/l} & : 11.15\,\mathrm{h} \leq t < 13\,\mathrm{h}.
\end{cases}$$

Note that we first move the process to the main operating point $c_{Bs} = 0.9\,\mathrm{mol/l}$ from the origin (start-up). Then we move to the second operating point after $t \geq 4.15\,\mathrm{h}$, etc.

Fig. 4. Nonlinear behavior (plot title: Steady state of the product concentration; axes: $u_s$, $c_{Bs}$)

Fig. 5. ES running at $t_1^*$ using $J_i$

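3) The PI controller structure is set up as follows:

a) The initial set of PI candidate controllers consists of 9 candidate controllers:

$$\mathcal{K}_0 = \{ c_i(t=0) \mid c_i = [k_{p_i}, T_{n_i}]^T,\ k_{p_i} \in \{10, 50, 100\},\ T_{n_i} \in \{0.1, 0.5, 1\} \}$$

where the first active controller is $c_{\phi(0)} = [10, 0.1]^T$.

b) The search space for PI candidate controllers is

$$\mathcal{K}^{PI}_{EA} = K_p \times T_n = [-100, 100] \times [0.01, 1].$$

c) We define the diversity operator as

$$\mathcal{K}_j^{\diamond}(t) \triangleq \{ c_i(t) \mid c_i = [k_{p_i}, T_{n_i}]^T,\ k_{p_i} \in \{ \tfrac{1}{\diamond} k^*_{p_i}, k^*_{p_i}, \diamond\, k^*_{p_i} \},\ T_{n_i} \in \{ \tfrac{1}{\diamond} T^*_{n_i}, T^*_{n_i}, \diamond\, T^*_{n_i} \} \}$$

where $\diamond = 2$ is chosen.

4) $\varepsilon = 0.001$ for the switching of an active controller and $\gamma = 10^{-9}$ in $J_i^*$.

5) The controller tuning points are $t_j^* = 0.45\,\mathrm{h}$, $4.45\,\mathrm{h}$, $6.45\,\mathrm{h}$, $8.45\,\mathrm{h}$, $11.45\,\mathrm{h}$.

6) The $(\mu + \lambda)$-selection is used.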
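The set-up listed above can be written down compactly. The sketch below encodes the operation scheme, the initial controller set, and the diversification of the set around an optimal PI controller with the factor 2 chosen in item 3c. It is an illustration only; the names `reference`, `K0` and `diversify` are hypothetical, and controllers are represented as `(kp, Tn)` tuples.

```python
from itertools import product

# (end time in h, set-point in mol/l) for each segment of the operation scheme
SCHEDULE = [(0.15, 0.0), (4.15, 0.9), (6.15, 0.95),
            (8.15, 0.85), (11.15, 0.92), (13.0, 0.88)]


def reference(t):
    """Piecewise constant set-point r(t) for 0 <= t < 13 h."""
    if t < 0.0:
        raise ValueError("t must be non-negative")
    for t_end, value in SCHEDULE:
        if t < t_end:
            return value
    raise ValueError("t lies outside the simulated horizon")


# Initial set of 9 PI candidates: all combinations of the listed kp and Tn.
K0 = list(product((10, 50, 100), (0.1, 0.5, 1)))


def diversify(c_star, factor=2.0):
    """Spread 9 candidates around c_star = (kp*, Tn*) by dividing and
    multiplying each parameter by the diversity factor."""
    kp, tn = c_star
    return list(product((kp / factor, kp, kp * factor),
                        (tn / factor, tn, tn * factor)))
```

For a center of `(17.28, 0.055)`, the diversified set spans $k_p$ from 8.64 to 34.56 and $T_n$ from 0.0275 to 0.11.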

B. Simulation Results

If the controller is fixed to the first active controller, a poor performance results as shown in Fig. 13, because one fixed controller cannot handle the nonlinear behavior of the CSTR model due to changes of the set-points. For this reason, the unfalsified adaptive control algorithm is applied to the CSTR model. The initial set $\mathcal{K}_1 \triangleq \mathcal{K}_0$ is used at the first operating point $p_1$. The switching of candidate controllers in $\mathcal{K}_1$ is performed during the interval $0.15\,\mathrm{h} \leq t < 0.45\,\mathrm{h}$ and the EA is activated at $t = t_1^* = 0.45\,\mathrm{h}$ as the first tuning point. If we assume that the original cost function $J_i$ is used at this tuning point, then the ES returns a destabilizing controller as the best one, as shown in Fig. 5. Using the new cost function $J_i^*$, the ES returns a stabilizing controller as the best one, as shown in Fig. 6. Since $c_{\phi(0)}$ stabilizes $P$ and the other candidate controllers in $\mathcal{K}_1$ are not better than this controller, it is kept in the loop up to $t = t_1^*$ as shown in Fig. 12. After the termination of the ES, the optimized set of controllers at $p_1$ results as shown in Fig. 7. The optimal controller $c_1^* = [17.28, 0.055]^T$ is in the loop until $p_2$ is selected at $t = t_2 = 4.15\,\mathrm{h}$, and it is assigned as the new initial controller for $p_2$ as shown in Fig. 12. According to the diversity operator, at the first tuning point, the new controller set $\mathcal{K}_2$ is used for $p_2$ as shown in Fig. 7. Note that the other candidate controllers are distributed around $c_1^*$ according to the chosen diversity parameter value of 2.

Fig. 6. ES running at $t_1^*$ using $J_i^*$

Fig. 7. Adaptive set $\mathcal{K}_2$ at $t_1^*$ (panels: loss of diversity after ES termination at $p_1$, diversity at $p_1$; axes: $k_p$, $T_n$; legend: other controllers, best controller $C^*$)

Fig. 8. Adaptive set $\mathcal{K}_3$ at $t_2^*$ (panels: loss of diversity after ES termination at $p_2$, diversity at $p_2$; axes: $k_p$, $T_n$; legend: other controllers, best controller $C^*$)

Fig. 9. Adaptive set $\mathcal{K}_4$ at $t_3^*$ (panels: loss of diversity after ES termination at $p_3$, diversity at $p_3$; axes: $k_p$, $T_n$; legend: other controllers, best controller $C^*$)

The same procedure is applied at the next operating points $p_2, p_3, p_4, p_5$, which results in the new controller sets $\mathcal{K}_3, \mathcal{K}_4, \mathcal{K}_5, \mathcal{K}_6$ as shown in Figs. 8, 9, 10, and 11. The optimal controllers are switched on at each tuning point. This algorithm thus leads to the real-time PI controller tuning for the nonlinear CSTR model shown in Fig. 12. The regulation performance is shown in Fig. 13. Note that a good performance can be achieved using the proposed algorithm.

Fig. 10. Adaptive set $\mathcal{K}_5$ at $t_4^*$ (panels: loss of diversity after ES termination at $p_4$, diversity at $p_4$; axes: $k_p$, $T_n$; legend: other controllers, best controller $C^*$)

Fig. 11. Adaptive set $\mathcal{K}_6$ at $t_5^*$ (panels: loss of diversity after ES termination at $p_5$, diversity at $p_5$; axes: $k_p$, $T_n$; legend: other controllers, best controller $C^*$)

Fig. 12. PI controller tuning (panels: trajectory of $k_p$ in $(1/\mathrm{h})/(\mathrm{mol \cdot l^{-1}})$, trajectory of $T_n$ in h; horizontal axis: time in h; legend: fixed controller, real-time PI tuning)

Fig. 13. Output response (concentration of component B, $c_B(t)$ in $\mathrm{mol \cdot l^{-1}}$, over time in h; legend: $r$ set-points, $c_B$ (real-time PI tuning), $c_B$ (fixed controller))

IV. CONCLUSIONS AND FUTURE WORKS

In this paper we presented a new real-time adaptive controller tuning technique which is purely based on measured data. The only prerequisite is the knowledge of at least one stabilizing controller within the original set of controllers. In order to be able to adapt the controller parameters online, rather than just switching between predefined controllers, the key element of unfalsified control had to be modified, introducing a new fictitious error signal. In this paper, we applied the new adaptive control algorithm to a nonlinear continuous stirred tank reactor (CSTR) model which is nonminimum phase. As the set of candidate controllers is adapted to the nonlinearity of the CSTR model, the adaptive controller can handle significant changes of set-points well.

In the future, this work will be continued on the following

issues:

1) Dealing with the effect of sensor noise

2) Theoretical analysis of the effect of disturbances on

the adaptation

3) Extension to multivariable problems

4) Testing at a real laboratory plant

REFERENCES

[1] M.G. Safonov and T.C. Tsao, The unfalsified control concept and learning. IEEE Transactions on Automatic Control, 42(6):843-847, 1997.

[2] R. Wang, A. Paul, M. Stefanovic and M.G. Safonov, Cost-detectability and Stability of Adaptive Control Systems. In Proc. IEEE CDC-ECC Conf., Seville, 2005.

[3] S. Engell, T. Tometzki and T. Wonghong, A New Approach to Adaptive Unfalsified Control. In Proc. European Control Conf., Kos, 2007.

[4] T. Wonghong and S. Engell, Application of a New Scheme for Adaptive Unfalsified Control to a CSTR. In Proc. 17th IFAC World Congress, Seoul, 2008.

[5] T. Wonghong and S. Engell, Application of a New Scheme for Adaptive Unfalsified Control to a CSTR with Noisy Measurements. In Proc. International Symposium on Advanced Control of Chemical Processes (ADCHEM), Istanbul, 2009.

[6] T. Wonghong, Adaptation by Evolutionary Algorithms in Unfalsified Control. Dr.-Ing. Dissertation, Department of Biochemical and Chemical Engineering, TU Dortmund, 2010.

[7] A.S. Morse, D.Q. Mayne and G.C. Goodwin, Application of Hysteresis Switching in Parameter Adaptive Control. IEEE Transactions on Automatic Control, 37:1343-1354, 1992.

[8] I. Rechenberg, Cybernetics Solution Path of an Experimental Problem. Royal Aircraft Establishment Library Translation, 1965.

[9] H. Schwefel, Evolutionsstrategie und Numerische Optimierung. Dissertation, TU Berlin, 1975.

[10] H. Schwefel and G. Rudolph, Contemporary Evolution Strategies. In Proc. 3rd CEAL Advances in Artificial Life, Springer: Berlin, 893-907, 1995.

[11] A. Eiben and J. Smith, Introduction to Evolutionary Computing. Springer-Verlag, Berlin, 2003.

[12] H. Schwefel, Collective Phenomena in Evolutionary Systems. Preprints of the 31st Annual Meeting of the International Society for General System Research, Budapest, 1987.

[13] S. Engell and K.U. Klatt, Nonlinear Control of a Non Minimum-Phase CSTR. In Proc. American Control Conf., San Francisco, 1993.

[14] K.U. Klatt and S. Engell, Gain-scheduling trajectory control of a continuous stirred tank reactor. Computers & Chemical Engineering, 22:491-502, 1998.
