Heteroskedasticity & Dependence - Faculty of Arts

Heteroskedasticity & Dependence

Paul Schrimpf

Introduction

Consequences of heteroskedasticity

Var(β) with heteroskedasticity
Calculating in R

Examples

Detecting heteroskedasticity

Heteroskedasticity and efficiency

Standard errors for dependent data
Clustering

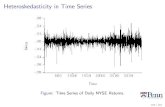

Autocorrelation

References

Heteroskedasticity & Dependence

Paul Schrimpf

UBC Economics 326

March 6, 2018

1 Introduction

2 Consequences of heteroskedasticity

3 Var(β) with heteroskedasticity
   Calculating in R

4 Examples

5 Detecting heteroskedasticity

6 Heteroskedasticity and efficiency

7 Standard errors for dependent data
   Clustering
   Autocorrelation

References

• Wooldridge (2013) chapter 8

• Stock and Watson (2009) chapter 10.5

• Angrist and Pischke (2014) chapter 5

Section 1

Introduction

Introduction

MLR.5 (homoskedasticity), $\mathrm{Var}(\varepsilon_i \mid X) = \sigma_\varepsilon^2$, is often an implausible assumption

[Figure: two scatter plots of y against x, one panel titled “Homoskedastic” and one titled “Heteroskedastic”]

• Heteroskedasticity is when $\mathrm{Var}(\varepsilon \mid X)$ varies with $X$
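
As a rough illustration of this definition, the sketch below simulates one sample whose error variance is constant and one whose error standard deviation grows with x, then compares the error variance for small and large x. Everything in it is an assumption made for illustration: the line y = 1 + 0.5x, the error scales, the split at x = 2.5, and the function names `simulate` and `error_variance_by_half`. It is not the code behind the slides' figures.

```python
import random
import statistics

def simulate(n, hetero, seed=0):
    """Draw (x, y) with y = 1 + 0.5*x + e; sd(e|x) is 0.5, or 0.25*x if hetero."""
    rng = random.Random(seed)
    data = []
    for _ in range(n):
        x = rng.uniform(0.0, 5.0)
        sd = 0.25 * x if hetero else 0.5
        data.append((x, 1.0 + 0.5 * x + rng.gauss(0.0, sd)))
    return data

def error_variance_by_half(data):
    """Variance of the true errors y - (1 + 0.5*x) for x < 2.5 and for x >= 2.5."""
    low = [y - (1.0 + 0.5 * x) for x, y in data if x < 2.5]
    high = [y - (1.0 + 0.5 * x) for x, y in data if x >= 2.5]
    return statistics.pvariance(low), statistics.pvariance(high)

homo_low, homo_high = error_variance_by_half(simulate(5000, hetero=False))
het_low, het_high = error_variance_by_half(simulate(5000, hetero=True))
print(f"homoskedastic:   low-x var = {homo_low:.3f}, high-x var = {homo_high:.3f}")
print(f"heteroskedastic: low-x var = {het_low:.3f}, high-x var = {het_high:.3f}")
```

In the homoskedastic sample the two variances should be close to each other, while in the heteroskedastic sample the high-x variance should be several times the low-x variance.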

Introduction

• Role of homoskedasticity:
  • Was not needed to show OLS is unbiased and consistent
  • Was needed to calculate $\mathrm{Var}(\hat{\beta} \mid X)$

• If errors are heteroskedastic, we still have $E[\hat{\beta}_j] = \beta_j$ and $\hat{\beta}_j \overset{p}{\to} \beta_j$ (assuming MLR.1-4), but the usual standard errors are invalid

• If there is heteroskedasticity, the variance of $\hat{\beta}_1$ is different, but we can fix it by using corrected standard errors

• The corrected standard errors are called “heteroskedasticity robust standard errors”, “heteroscedasticity consistent standard errors”, or “Eicker–Huber–White standard errors”
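
To make the contrast between the usual and the robust standard error concrete, here is a minimal sketch for a simple regression of y on x. It uses the basic Eicker-Huber-White (HC0) form, in which the estimated variance of the slope is $\sum_i (x_i - \bar{x})^2 \hat{\varepsilon}_i^2 / \left(\sum_i (x_i - \bar{x})^2\right)^2$, without any small-sample adjustment. The data are simulated with an error standard deviation that grows with x, and the function name `ols_slope_with_ses`, the seed, and all parameter values are assumptions chosen for illustration.

```python
import math
import random

def ols_slope_with_ses(xs, ys):
    """Return (slope, usual SE, HC0 robust SE) for y = b0 + b1*x + e."""
    n = len(xs)
    xbar = sum(xs) / n
    ybar = sum(ys) / n
    sxx = sum((x - xbar) ** 2 for x in xs)
    b1 = sum((x - xbar) * (y - ybar) for x, y in zip(xs, ys)) / sxx
    b0 = ybar - b1 * xbar
    resid = [y - b0 - b1 * x for x, y in zip(xs, ys)]
    # Usual formula: valid only under homoskedasticity (MLR.5).
    sigma2 = sum(e ** 2 for e in resid) / (n - 2)
    se_usual = math.sqrt(sigma2 / sxx)
    # HC0: weight each squared residual by (x_i - xbar)^2.
    meat = sum(((x - xbar) ** 2) * e ** 2 for x, e in zip(xs, resid))
    se_robust = math.sqrt(meat) / sxx
    return b1, se_usual, se_robust

rng = random.Random(326)
xs = [rng.uniform(0.0, 5.0) for _ in range(2000)]
# Synthetic heteroskedastic errors: sd(e|x) = 0.25 * x^2.
ys = [1.0 + 0.5 * x + rng.gauss(0.0, 0.25 * x ** 2) for x in xs]
b1, se_usual, se_robust = ols_slope_with_ses(xs, ys)
print(f"slope = {b1:.3f}, usual SE = {se_usual:.4f}, robust SE = {se_robust:.4f}")
```

In this design the error variance is largest where x is far from its mean, so the usual formula tends to understate the sampling variability of the slope and the robust standard error comes out larger. With other variance patterns the usual standard error can instead be too large.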

Example: Engel curves

• An Engel curve describes how a consumer’s purchases of a good (like food) vary with the consumer’s total resources (income or total spending)

• Named after Engel (1857), who looked at the food expenditures and income of Belgian families

• See Chai and Moneta (2010) for a description of Engel’s work and Lewbel (2008) for a brief summary of modern research on Engel curves

Example: Engel (1857) curve

$\text{foodexp} = \beta_0 + \beta_1 \, \text{income} + \beta_2 \, \text{income}^2 + \varepsilon$

[Figure: two panels plotted against income. “Engel curve”: foodexp against income; “Residuals”: residuals against income]

• Income and food expenditure measured in Belgian francs
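
The quadratic specification on this slide can be estimated by OLS and its residuals inspected for changing spread. The sketch below does this on synthetic data, since Engel's data are not reproduced here: the income range, the coefficients, and the error scale are all made up, and `fit_quadratic` is an illustrative helper that solves the normal equations directly.

```python
import random
import statistics

def fit_quadratic(xs, ys):
    """OLS of y on (1, x, x^2) via the normal equations; returns [b0, b1, b2]."""
    rows = [[1.0, x, x * x] for x in xs]
    # Augmented matrix [X'X | X'y], 3 rows by 4 columns.
    a = [[sum(r[i] * r[j] for r in rows) for j in range(3)]
         + [sum(r[i] * y for r, y in zip(rows, ys))] for i in range(3)]
    # Gauss-Jordan elimination with partial pivoting.
    for col in range(3):
        pivot = max(range(col, 3), key=lambda k: abs(a[k][col]))
        a[col], a[pivot] = a[pivot], a[col]
        for k in range(3):
            if k != col:
                factor = a[k][col] / a[col][col]
                a[k] = [v - factor * p for v, p in zip(a[k], a[col])]
    return [a[i][3] / a[i][i] for i in range(3)]

rng = random.Random(1857)
# Synthetic incomes in thousands of francs; error sd proportional to income.
income = [rng.uniform(0.4, 5.0) for _ in range(1000)]
foodexp = [0.1 + 0.6 * m - 0.04 * m * m + rng.gauss(0.0, 0.08 * m) for m in income]

b0, b1, b2 = fit_quadratic(income, foodexp)
resid = [y - (b0 + b1 * m + b2 * m * m) for m, y in zip(income, foodexp)]

# Compare residual spread below and above median income.
cut = statistics.median(income)
sd_low = statistics.pstdev([e for m, e in zip(income, resid) if m < cut])
sd_high = statistics.pstdev([e for m, e in zip(income, resid) if m >= cut])
print(f"b0 = {b0:.3f}, b1 = {b1:.3f}, b2 = {b2:.3f}")
print(f"residual sd: low income = {sd_low:.3f}, high income = {sd_high:.3f}")
```

A residual spread that is clearly larger in the high-income half is the numerical counterpart of a residual plot that fans out as income rises.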

Example: Engel (1857) curve in shares

$\dfrac{\text{foodexp}}{\text{income}} = \text{foodshare} = \beta_0 + \beta_1 \, \text{income} + \beta_2 \, \text{income}^2 + \varepsilon$

[Figure: two panels plotted against income. “Engel curve”: foodshare against income; “Residuals”: residuals against income]

Engel curves with modern data

• There are still papers about estimating Engel curves
  • Banks, Blundell, and Lewbel (1997), Blundell, Duncan, and Pendakur (1998), Blundell, Browning, and Crawford (2003)

• The next slides use data from the British Family Expenditure Surveys (FES) for 1980-1982 (same data as Blundell, Duncan, and Pendakur (1998))

Engel curves with modern data: food

[Figure: two panels plotted against income. “Engel curve”: food against income; “Residuals”: residuals against income]

Engel curves with modern data: alcohol

[Figure: two panels plotted against income. “Engel curve”: alc against income; “Residuals”: residuals against income]

Engel curves with modern data: clothing

[Figure: two panels, “Engel curve” and “Residuals”]