ARMA Autocorrelation Functions

• For a moving average process, MA(q):

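$$x_t = w_t + \theta_1 w_{t-1} + \theta_2 w_{t-2} + \cdots + \theta_q w_{t-q}.$$

• So (with $\theta_0 = 1$)

$$\gamma(h) = \operatorname{cov}(x_{t+h}, x_t) = E\left[\left(\sum_{j=0}^{q} \theta_j w_{t+h-j}\right)\left(\sum_{k=0}^{q} \theta_k w_{t-k}\right)\right] = \begin{cases} \sigma_w^2 \sum_{j=0}^{q-h} \theta_j \theta_{j+h}, & 0 \le h \le q \\ 0, & h > q. \end{cases}$$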

• So the ACF is

$$\rho(h) = \begin{cases} \dfrac{\sum_{j=0}^{q-h} \theta_j \theta_{j+h}}{\sum_{j=0}^{q} \theta_j^2}, & 0 \le h \le q \\ 0, & h > q. \end{cases}$$

• Notes:

– In these expressions, $\theta_0 = 1$ for convenience.

– $\gamma(q) \ne 0$ but $\gamma(h) = 0$ for $h > q$. This characterizes MA(q).
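The MA(q) formula for $\rho(h)$ can be evaluated directly. The following is a minimal sketch in plain Python; the function name `ma_acf` and the coefficient values are illustrative assumptions, not part of the original slides.

```python
def ma_acf(theta, max_lag):
    """Return [rho(0), ..., rho(max_lag)] for an MA(q) with coefficients theta_1..theta_q."""
    coeffs = [1.0] + list(theta)            # prepend theta_0 = 1
    q = len(coeffs) - 1
    denom = sum(c * c for c in coeffs)      # sum_{j=0}^{q} theta_j^2
    rho = []
    for h in range(max_lag + 1):
        if h <= q:
            # sum_{j=0}^{q-h} theta_j * theta_{j+h}
            num = sum(coeffs[j] * coeffs[j + h] for j in range(q - h + 1))
            rho.append(num / denom)
        else:
            rho.append(0.0)                 # the ACF cuts off after lag q
    return rho


# Hypothetical MA(2) with theta_1 = 0.5, theta_2 = -0.4
rho = ma_acf([0.5, -0.4], max_lag=4)
print(rho)
```

For this MA(2), the values at lags 3 and 4 are exactly zero while the lag-2 value is not, which is the cut-off property noted above.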


• For an autoregressive process, AR(p):

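$$x_t = \phi_1 x_{t-1} + \phi_2 x_{t-2} + \cdots + \phi_p x_{t-p} + w_t.$$

• So

$$\gamma(h) = \operatorname{cov}(x_{t+h}, x_t) = E\left[\left(\sum_{j=1}^{p} \phi_j x_{t+h-j} + w_{t+h}\right) x_t\right] = \sum_{j=1}^{p} \phi_j \gamma(h - j) + \operatorname{cov}(w_{t+h}, x_t).$$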

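• Because $x_t$ is causal, $x_t$ is $w_t$ plus a linear combination of $w_{t-1}, w_{t-2}, \ldots$.

• So

$$\operatorname{cov}(w_{t+h}, x_t) = \begin{cases} \sigma_w^2, & h = 0 \\ 0, & h > 0. \end{cases}$$

• Hence

$$\gamma(h) = \sum_{j=1}^{p} \phi_j \gamma(h - j), \quad h > 0$$

and

$$\gamma(0) = \sum_{j=1}^{p} \phi_j \gamma(-j) + \sigma_w^2.$$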

• If we know the parameters $\phi_1, \phi_2, \ldots, \phi_p$ and $\sigma_w^2$, these equations for $h = 0$ and $h = 1, 2, \ldots, p$ form $p + 1$ linear equations in the $p + 1$ unknowns $\gamma(0), \gamma(1), \ldots, \gamma(p)$.

• The other autocovariances can then be found recursively from the equation for $h > p$.

• Alternatively, if we know (or have estimated) $\gamma(0), \gamma(1), \ldots, \gamma(p)$, they form $p + 1$ linear equations in the $p + 1$ parameters $\phi_1, \phi_2, \ldots, \phi_p$ and $\sigma_w^2$.

• These are the Yule-Walker equations.
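Both uses of the Yule-Walker equations can be sketched numerically. The code below is an illustration in plain Python under stated assumptions: the helper names (`solve_linear`, `ar_autocov`, `yule_walker`) and the AR(2) coefficients are hypothetical, it uses $\gamma(-j) = \gamma(j)$, and it solves the linear systems by simple Gaussian elimination rather than any particular library routine.

```python
def solve_linear(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]    # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):                      # back substitution
        s = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - s) / m[i][i]
    return x


def ar_autocov(phi, sigma2, max_lag):
    """gamma(0..max_lag) of a causal AR(p), given phi_1..phi_p and sigma_w^2."""
    p = len(phi)
    a = [[0.0] * (p + 1) for _ in range(p + 1)]
    b = [sigma2] + [0.0] * p                          # sigma_w^2 appears only at h = 0
    for h in range(p + 1):
        a[h][h] += 1.0
        for j in range(1, p + 1):
            a[h][abs(h - j)] -= phi[j - 1]            # uses gamma(-k) = gamma(k)
    gamma = solve_linear(a, b)
    for h in range(p + 1, max_lag + 1):               # recursion for h > p
        gamma.append(sum(phi[j - 1] * gamma[h - j] for j in range(1, p + 1)))
    return gamma[:max_lag + 1]


def yule_walker(gamma):
    """Recover (phi_1..phi_p, sigma_w^2) from gamma(0..p)."""
    p = len(gamma) - 1
    a = [[gamma[abs(h - j)] for j in range(1, p + 1)] for h in range(1, p + 1)]
    phi = solve_linear(a, gamma[1:])
    sigma2 = gamma[0] - sum(phi[j - 1] * gamma[j] for j in range(1, p + 1))
    return phi, sigma2


# Hypothetical causal AR(2): phi = (1.5, -0.75), sigma_w^2 = 2
gamma = ar_autocov([1.5, -0.75], 2.0, max_lag=6)
phi_hat, sigma2_hat = yule_walker(gamma[:3])
print(gamma)
print(phi_hat, sigma2_hat)
```

Feeding the computed $\gamma(0), \gamma(1), \gamma(2)$ back through `yule_walker` returns the original parameters up to rounding, which is a convenient consistency check on both directions.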


• For the ARMA(p, q) model with p > 0 and q > 0:

$$x_t = \phi_1 x_{t-1} + \phi_2 x_{t-2} + \cdots + \phi_p x_{t-p} + w_t + \theta_1 w_{t-1} + \theta_2 w_{t-2} + \cdots + \theta_q w_{t-q},$$

a generalized set of Yule-Walker equations must be used.

• The moving average models ARMA(0, q) = MA(q) are the

only ones with a closed form expression for γ(h).

• For AR(p) and ARMA(p, q) with p > 0, the recursive equation means that for $h > \max(p, q + 1)$, $\gamma(h)$ is a sum of geometrically decaying terms, possibly damped oscillations.

• The recursive equation is

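$$\gamma(h) = \sum_{j=1}^{p} \phi_j \gamma(h - j), \quad h > q.$$

• What kinds of sequences satisfy an equation like this?

– Try $\gamma(h) = z^{-h}$ for some constant $z$.

– The equation becomes

$$0 = z^{-h} - \sum_{j=1}^{p} \phi_j z^{-(h-j)} = z^{-h}\left(1 - \sum_{j=1}^{p} \phi_j z^{j}\right) = z^{-h} \phi(z).$$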

• So if $\phi(z) = 0$, then $\gamma(h) = z^{-h}$ satisfies the equation.

• Since $\phi(z)$ is a polynomial of degree $p$, there are $p$ solutions, say $z_1, z_2, \ldots, z_p$.

• So a more general solution is

$$\gamma(h) = \sum_{l=1}^{p} c_l z_l^{-h},$$

for any constants $c_1, c_2, \ldots, c_p$.

• If $z_1, z_2, \ldots, z_p$ are distinct, this is the most general solution; if some roots are repeated, the general form is a little more complicated.

• If all $z_1, z_2, \ldots, z_p$ are real, this is a sum of geometrically decaying terms (causality requires $|z_l| > 1$, so each $z_l^{-h}$ decays).

• If any root is complex, its complex conjugate must also be a root, and these two terms may be combined into geometrically decaying sine-cosine terms.

• The constants $c_1, c_2, \ldots, c_p$ are determined by initial conditions; in the ARMA case, these are the Yule-Walker equations.

• Note that the various rates of decay are determined by the zeros of $\phi(z)$, the autoregressive operator, and do not depend on $\theta(z)$, the moving average operator.
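To make the role of the zeros of $\phi(z)$ concrete, here is a small sketch for an AR(2) polynomial. The coefficients are illustrative assumptions; the code finds the two zeros with the quadratic formula and checks that $z^{-h}$ satisfies the recursion $\gamma(h) = \phi_1 \gamma(h-1) + \phi_2 \gamma(h-2)$.

```python
import cmath

phi1, phi2 = 1.5, -0.75    # hypothetical AR(2) coefficients

# phi(z) = 1 - phi1*z - phi2*z^2, so solve (-phi2) z^2 + (-phi1) z + 1 = 0.
a, b, c = -phi2, -phi1, 1.0
disc = cmath.sqrt(b * b - 4 * a * c)
roots = [(-b + disc) / (2 * a), (-b - disc) / (2 * a)]


def residual(z, h):
    """z^{-h} - phi1 * z^{-(h-1)} - phi2 * z^{-(h-2)}; zero when phi(z) = 0."""
    return z ** (-h) - phi1 * z ** (-(h - 1)) - phi2 * z ** (-(h - 2))


for z in roots:
    # modulus > 1 means z^{-h} decays geometrically at rate 1/|z|
    print(z, abs(z), abs(residual(z, 5)))
```

With these coefficients the zeros form a complex-conjugate pair with modulus greater than 1, so the corresponding autocovariances behave like a damped oscillation.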


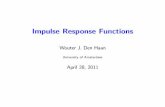

• Example: ARMA(1,1)

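$$x_t = \phi x_{t-1} + \theta w_{t-1} + w_t.$$

• The recursion is

$$\gamma(h) = \phi \gamma(h - 1), \quad h = 2, 3, \ldots$$

• So, dividing by $\gamma(0)$, the ACF is $\rho(h) = c \phi^h$ for $h = 1, 2, \ldots$, but $c \ne 1$.

• Graphically, the ACF decays geometrically, but with a different value at $h = 0$: $\rho(0) = 1$ does not lie on the curve $c \phi^h$.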
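The ARMA(1,1) behaviour can be checked numerically. This sketch relies on two standard facts that are not derived in these slides: a causal ARMA(1,1) can be written as $x_t = \sum_{j \ge 0} \psi_j w_{t-j}$ with $\psi_0 = 1$ and $\psi_j = (\phi + \theta)\phi^{j-1}$ for $j \ge 1$, and then $\gamma(h) = \sigma_w^2 \sum_j \psi_j \psi_{j+h}$. The function name, the truncation length `n_terms`, and the choice $\phi = 0.9$, $\theta = -0.5$ are assumptions for illustration.

```python
def arma11_acf(phi, theta, max_lag, n_terms=2000):
    """Approximate rho(0..max_lag) of a causal ARMA(1,1) from truncated psi-weights."""
    psi = [1.0] + [(phi + theta) * phi ** (j - 1) for j in range(1, n_terms)]

    def gamma(h):
        # gamma(h) / sigma_w^2, truncated to the first n_terms psi-weights
        return sum(psi[j] * psi[j + h] for j in range(n_terms - h))

    g0 = gamma(0)
    return [gamma(h) / g0 for h in range(max_lag + 1)]


rho = arma11_acf(0.9, -0.5, max_lag=24)
c = rho[1] / 0.9           # constant in rho(h) = c * phi^h for h >= 1
print(rho[:4], c)
```

Successive ratios $\rho(h+1)/\rho(h)$ equal $\phi$ from lag 1 onward, while the constant `c` differs from 1, so the lag-0 value sits off the geometric curve.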


[Figure: plot of the ACF with vertical-axis label acf(ar = 0.9, ma = −0.5, 24), values from 0.2 to 1.0; horizontal axis "Index", 5 to 25; legend entries AR and MA.]

The Partial Autocorrelation Function

• An MA(q) can be identified from its ACF: non-zero at lag q, and zero afterwards.

• We need a similar tool for AR(p).

• The partial autocorrelation function (PACF) fills that role.


• Recall: for multivariate random variables X, Y, Z, the partial correlations of X and Y given Z are the correlations of:

– the residuals of X from its regression on Z; and

– the residuals of Y from its regression on Z.

• Here “regression” means conditional expectation, or best linear prediction, based on population distributions, not a sample calculation.

• In a time series, the partial autocorrelations are defined as

$$\phi_{h,h} = \text{partial correlation of } x_{t+h} \text{ and } x_t \text{ given } x_{t+h-1}, x_{t+h-2}, \ldots, x_{t+1}.$$

• For an autoregressive process, AR(p):

$$x_t = \phi_1 x_{t-1} + \phi_2 x_{t-2} + \cdots + \phi_p x_{t-p} + w_t.$$

• If $h > p$, the regression of $x_{t+h}$ on $x_{t+h-1}, x_{t+h-2}, \ldots, x_{t+1}$ is

$$\phi_1 x_{t+h-1} + \phi_2 x_{t+h-2} + \cdots + \phi_p x_{t+h-p}.$$

• So the residual is just $w_{t+h}$, which is uncorrelated with $x_{t+h-1}, x_{t+h-2}, \ldots, x_{t+1}$ and $x_t$.

• So the partial autocorrelation is zero for $h > p$:

$$\phi_{h,h} = 0, \quad h > p.$$

• We can also show that $\phi_{p,p} = \phi_p$, which is non-zero by assumption.

• So $\phi_{p,p} \ne 0$ but $\phi_{h,h} = 0$ for $h > p$. This characterizes AR(p).
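The cut-off of the PACF can be illustrated numerically. The slides do not say how to compute $\phi_{h,h}$; the sketch below uses the Durbin-Levinson recursion, a standard method swapped in here for illustration, applied to the theoretical ACF of a hypothetical AR(2). The function name and coefficients are assumptions.

```python
def pacf_from_acf(rho):
    """Durbin-Levinson recursion: map rho(0..H) to [1, phi_11, phi_22, ..., phi_HH]."""
    pacf = [1.0]
    prev = []                                  # phi_{n-1,1}, ..., phi_{n-1,n-1}
    for n in range(1, len(rho)):
        num = rho[n] - sum(prev[k - 1] * rho[n - k] for k in range(1, n))
        den = 1.0 - sum(prev[k - 1] * rho[k] for k in range(1, n))
        phi_nn = num / den
        # phi_{n,k} = phi_{n-1,k} - phi_nn * phi_{n-1,n-k}
        prev = [prev[k - 1] - phi_nn * prev[n - k - 1] for k in range(1, n)] + [phi_nn]
        pacf.append(phi_nn)
    return pacf


# Theoretical ACF of a hypothetical AR(2) with phi = (1.5, -0.75):
# rho(1) = phi1 / (1 - phi2), then rho(h) = phi1 rho(h-1) + phi2 rho(h-2).
phi1, phi2 = 1.5, -0.75
rho = [1.0, phi1 / (1.0 - phi2)]
for h in range(2, 7):
    rho.append(phi1 * rho[h - 1] + phi2 * rho[h - 2])

pacf = pacf_from_acf(rho)
print(pacf)
```

In this example the lag-2 partial autocorrelation equals $\phi_2$ and the values at lags 3 and beyond vanish up to rounding error, matching the characterization of AR(p).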


The Inverse Autocorrelation Function

• SAS’s proc arima also shows the Inverse Autocorrelation Function (IACF).

• The IACF of the ARMA(p, q) model

$$\phi(B) x_t = \theta(B) w_t$$

is defined to be the ACF of the inverse (or dual) process

$$\theta(B) x_t^{(\text{inverse})} = \phi(B) w_t.$$

• The IACF has the same property as the PACF: AR(p) is characterized by an IACF that is nonzero at lag p but zero for larger lags.
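For a pure AR(p), $\theta(B) = 1$, so the dual process is $x_t^{(\text{inverse})} = \phi(B) w_t = w_t - \phi_1 w_{t-1} - \cdots - \phi_p w_{t-p}$, an MA(p) with coefficients $-\phi_j$. The sketch below uses that observation to compute the IACF of a hypothetical AR(1); the function names and the value $\phi = 0.6$ are illustrative assumptions.

```python
def ma_acf(theta, max_lag):
    """ACF of an MA(q) with coefficients theta_1..theta_q (theta_0 = 1)."""
    coeffs = [1.0] + list(theta)
    q = len(coeffs) - 1
    denom = sum(c * c for c in coeffs)
    return [
        sum(coeffs[j] * coeffs[j + h] for j in range(q - h + 1)) / denom if h <= q else 0.0
        for h in range(max_lag + 1)
    ]


def ar_iacf(phi, max_lag):
    """IACF of an AR(p): the ACF of the dual MA(p) with theta_j = -phi_j."""
    return ma_acf([-c for c in phi], max_lag)


iacf = ar_iacf([0.6], max_lag=4)   # hypothetical AR(1) with phi = 0.6
print(iacf)
```

The result is nonzero at lag 1 and exactly zero afterwards, the same cut-off pattern the PACF shows for an AR(1).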


Summary: Identification of ARMA processes

• AR(p) is characterized by a PACF or IACF that is:

– nonzero at lag p;

– zero for lags larger than p.

• MA(q) is characterized by an ACF that is:

– nonzero at lag q;

– zero for lags larger than q.

• For anything else, try ARMA(p, q) with p > 0 and q > 0.


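For p > 0 and q > 0:

|      | AR(p)                | MA(q)                | ARMA(p, q) |
|------|----------------------|----------------------|------------|
| ACF  | Tails off            | Cuts off after lag q | Tails off  |
| PACF | Cuts off after lag p | Tails off            | Tails off  |
| IACF | Cuts off after lag p | Tails off            | Tails off  |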

• Note: these characteristics are used to guide the initial choice

of a model; estimation and model-checking will often lead to

a different model.


Other ARMA Identification Techniques

• SAS’s proc arima offers the MINIC option on the identify

statement, which produces a table of SBC criteria for various

values of p and q.

• The identify statement has two other options: ESACF and

SCAN.

• Both produce tables in which the pattern of zero and nonzero values characterizes p and q.

• See Section 3.4.10 in Brocklebank and Dickey.


options linesize = 80;
ods html file = 'varve3.html';

/* Read the varve series and take logs. */
data varve;
  infile '../data/varve.dat';
  input varve;
  lv = log(varve);
run;

/* Identify on the first difference of lv, then fit candidate models by maximum likelihood. */
proc arima data = varve;
  title 'Use identify options to identify a good model';
  identify var = lv(1) minic esacf scan;
  estimate q = 1 method = ml;
  estimate q = 2 method = ml;
  estimate p = 1 q = 1 method = ml;