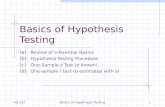

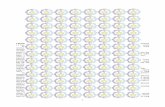

Hypothesis test flow chart

description

Transcript of Hypothesis test flow chart

Hypothesis test flow chart

frequencydata

Measurement scale

number of variables

1

basic χ2 test(19.5)

Table I

χ2 test for independence(19.9) Table I

2correlation (r)number of correlations

1Test H0: r=0(17.2)

Table G

2

Test H0: r1= r2

(17.4) Tables H and A

number of means

Mea

ns

Do you know s?

1Yesz -test(13.1) Table A

t -test(13.14)Table D

2

independentsamples?

Yes

Test H0: m1= m2

(15.6) Table D

No

Test H0: D=0(16.4)

Table D

More than 2 number of factors

1

1-way ANOVACh 20

Table E

2 2-way ANOVACh 21

Table E

No

START HERE

Chapter 18: Testing for difference among three or more groups: One way Analysis of Variance (ANOVA)

A B C

81 72 75

Means

87 61 75

81 68 67

76 87 67

79 69 84

68 81 74

92 71 80

76 74 79

93 62 83

78 62 79

84 85 62 Suppose you wanted to compare the results of three tests (A, B and C) to see if there was any differences difficulty. To test this, you randomly sample these ten scores from each of the three populations of test scores.

How would you test to see if there was any difference across the mean scores for these three tests?

The first thing is obvious – calculate the mean for each of the three samples of 10 scores.

But then what? You could run an two-sample t-test on each of the pairs (A vs. B, A vs. C and B vs. C).

Note: we’ll be skipping sections 18.10, 18.12, 18.13, 18.14, 1.15, 18.16, 18.17 18.18, and 18.19 from the book

A B C

81 72 75

Means

87 61 75

81 68 67

76 87 67

79 69 84

68 81 74

92 71 80

76 74 79

93 62 83

78 62 79

84 85 62 You could run an two-sample t-test on each of the pairs (A vs. B, A vs. C and B vs. C).

There are two problems with this:

1) The three tests wouldn’t be truly independent of each other, since they contain common values, and

2) We run into the problem of making multiple comparisons: If we use an a value of .05, the probability of obtaining at least one significant comparison by chance is 1-(1-.05)3, or about .14

So how do we test the null hypothesis: H0: mA = mB = mC ?

A B C

81 72 75

Means

87 61 75

81 68 67

76 87 67

79 69 84

68 81 74

92 71 80

76 74 79

93 62 83

78 62 79

84 85 62

In the 1920’s Sir Ronald Fisher developed a method called ‘Analysis of Variance’ or ANOVA to test hypotheses like this.

The trick is to look at the amount of variability between the means.

So far in this class, we’ve usually talked about variability in terms of standard deviations. ANOVA’s focus on variances instead, which (of course) is the square of the standard deviation. The intuition is the same.

The variance of these three mean scores (81, 72 and 75) is 22.5

Intuitively, you can see that if the variance of the means scores is ‘large’, then we should reject H0.

But what do we compare this number 22.5 to?

So how do we test the null hypothesis: H0: mA = mB = mC ?

A B C

81 72 75

Means

87 61 75

81 68 67

76 87 67

79 69 84

68 81 74

92 71 80

76 74 79

93 62 83

78 62 79

84 85 62

The variance of these three mean scores (81, 72 and 75) is 22.5

How ‘large’ is 22.5?

Suppose we knew the standard deviation of the population of scores (s).

If the null hypothesis is true, then all scores across all three columns are drawn from a population with standard deviation s.

It follows that the mean of n scores should be drawn from a population with standard deviation:

This means multiplying the variance of the means by n gives us an estimate of the variance of the population.

So how do we test the null hypothesis: H0: mA = mB = mC ?

nsX

s 22

XsnsWith a little algebra:

A B C

81 72 75

Means

87 61 75

81 68 67

76 87 67

79 69 84

68 81 74

92 71 80

76 74 79

93 62 83

78 62 79

84 85 62

The variance of these three mean scores (81, 72 and 75) is 22.5

Multiplying the variance of the means by n gives us an estimate of the variance of the population.

For our example, 225)5.22)(10(2 Xsn

We typically don’t know what s2 is. But like we do for t-tests, we can use the variance within our samples to estimate it. The variance of the 10 numbers in each column (61, 94, and 55) should each provide an estimate of s2.

We can combine these three estimates of s2 by taking their average, which is 70.

61 94 55

Variances Mean of variances

70

n x Variance of means

225

A B C

81 72 75

Means

87 61 75

81 68 67

76 87 67

79 69 84

68 81 74

92 71 80

76 74 79

93 62 83

78 62 79

84 85 62

If H0: mA = mB = mC is true, we now have two separate estimates of the variance of the population (s2).

One is n times the variance of the means of each column.The other is the mean of the variances of each column.

If H0 is true, then these two numbers should be, on average, the same, since they’re both estimates of the same thing (s2).

For our example, these two numbers (225 and 70) seem quite different.

Remember our intuition that a large variance of the means should be evidence against H0. Now we have something to compare it to. 225 seems large compared to 70.

61 94 55

Variances Mean of variances

70

n x Variance of means

225

A B C

81 72 75

Means

87 61 75

81 68 67

76 87 67

79 69 84

68 81 74

92 71 80

76 74 79

93 62 83

78 62 79

84 85 62

If H0 is true, then the value of F should be around 1. If H0 is not true, then F should be significantly greater than 1.

We determine how large F should be for rejecting H0 by looking up Fcrit in Table E. F distributions depend on two separate degrees of freedom – one for the numerator and one for the denominator.

df for the numerator is k-1, where k is the number of columns or ‘treatments’. For our example, df is 3-1 =2.

df for the denominator is N-k, where N is the total number of scores. In our case, df is 30-3 = 27.

61 94 55

Variances Mean of variances

70

n x Variance of means

225 Ratio (F)

3.23

Fcrit for a = .05 and df’s of 2 and 27 is 3.35.

Since Fobs = 3.23 is less than Fcrit, we fail to reject H0. We cannot conclude that the exam scores come from populations with different means.

When conducting an ANOVA, we compute the ratio of these two estimates of s2. This ratio is called the ‘F statistic’. For our example, 225/70 = 3.23.

Fcrit for a = .05 and df’s of 2 and 27 is 3.35.

Since Fobs = 3.23 is less than Fcrit, we fail to reject H0. We cannot conclude that the exam scores come from populations with different means.

Instead of finding Fcrit in Table E, we could have calculated the p-value using our F-calculator. Reporting p-values is standard.

Our p-value for F=3.23 with 2 and 27 degrees of freedom is p=.0552

Since our p-value is greater then .05, we fail to reject H0

Example: Consider the following n=12 samples drawn from k=5 groups. Use an ANOVA to test the hypothesis that the means of the populations that these 5 groups were drawn from are different.

Answer: The 5 means and variances are calculated below, along with n x variance of means, and the mean of variances.

Our resulting F statistic is 15.32.

Our two dfs are k-1=4 (numerator) and 60-5 = 55(denominator). Table E shows that Fcrit for 4 and 55 is 2.54.

Fobs > Fcrit so we reject H0.

78

96

74

78

100

97

76

46

75

149

Means

Variances

A B C D E

87 87 99 78 91

81 69 105 70 51

76 81 104 75 64

79 71 108 91 86

68 74 104 83 81

92 62 87 78 72

76 62 104 66 79

93 85 111 74 65

78 75 107 76 65

84 67 76 69 78

61 67 97 72 90

68 84 97 79 82

Mean of variances

93

n x Variance of means

1429 Ratio (F)

15.32

What does the probability distribution F(dfb,dfw) look like?

0 1 2 3 4 5

F(2,5)

0 1 2 3 4 5

F(2,10)

0 1 2 3 4 5

F(2,50)

0 1 2 3 4 5

F(2,100)

0 1 2 3 4 5

F(10,5)

0 1 2 3 4 5

F(10,10)

0 1 2 3 4 5

F(10,50)

0 1 2 3 4 5

F(10,100)

0 1 2 3 4 5

F(50,5)

0 1 2 3 4 5

F(50,10)

0 1 2 3 4 5

F(50,50)

0 1 2 3 4 5

F(50,100)

For a typical ANOVA, the number of samples in each group may be different, but the intuition is the same - compute F which is the ratio of the variance of the means over the mean of the variances.

Formally, the variance is divided up the following way:

Given a table of k groups, each containing ni scores (i= 1,2, …, k), we can represent the deviation of a given score, X from the mean of all scores, called the grand mean as:

)()( XXXXXX

Deviation of X from the grand mean

Deviation of X from the mean of the group

Deviation of the mean of the group from the grand mean

k

ii

scoresall

scoresall

XXnXXXX1

222 )()()(

Total sum of squares:SStotal

Within-groups sum of squares:SSwithin

Between-groups sum of squares:SSbetween

The total sums of squares can be partitioned into two numbers:

SSbetween (or SSbet) is a measure of the variability between groups. It is used as the numerator in our F-tests

The variance between groups is calculated by dividing SSbet by its degrees of freedom dfbet = k-1

s2bet=SSbet/dfbet and is another estimate of s2 if H0 is true. This is essentially n times

the variance of the means.

If H0 is not true, then s2bet is an estimate of s2 plus any ‘treatment effect’ that would

add to a difference between the means.

.

k

ii

scoresall

scoresall

XXnXXXX1

222 )()()(

Total sum of squares:SStotal

Within-groups sum of squares:SSwithin

Between-groups sum of squares:SSbetween

The total sums of squares can be partitioned into two numbers:

SSwithin (or SSw) is a measure of the variability within each group. It is used as the denominator in all F-tests.

The variance within each group is calculated by dividing SSwithin by its degrees of freedom dfw = ntotal – k

s2w=SSw/dfw This is an estimate of s2 This is essentially the mean of the variances

within each group. (It is exactly the mean of variances if our sample sizes are all the same.)

SStotal = SSwithin + SSbetween

SStotal

SSwithin SSbetween

scoresall

XX 2)(

scoresall

XX 2)(

k

ii XXn

1

2)(2

2

within

between

ssF

dftotal =ntotal-1

dfwithin =ntotal-k dfbetween k-1

dftotal = dfwithin + dfbetween

s2between=SSbetween/dfbetween

s2within=SSwithin/dfwithin

The F ratio is calculated by dividing up the sums of squares and df into ‘between’ and ‘within’

Variances are then calculated by dividing SS by df

F is the ratio of variances between and within

2

2

w

bet

ssF

Finally, the F ratio is the ratio of s2bet and s2

bet

We can write all these calculated values in a summary table like this:

Source SS df s2 F

Between k-1 s2bet=SSbet/dfbet

Within ntotal-k s2w=SSw/dfw

Total ntotal-1

k

ii XXn

1

2)(

scoresall

XX 2)(

scoresall

XX 2)(

2

2

w

bet

ss

Source SS df s2 F

Between

Within

Total 10847 59

78

96

74

78

100

97

76

46

75

149

Means

Variances

A B C D E

87 87 99 78 91

81 69 105 70 51

76 81 104 75 64

79 71 108 91 86

68 74 104 83 81

92 62 87 78 72

76 62 104 66 79

93 85 111 74 65

78 75 107 76 65

84 67 76 69 78

61 67 97 72 90

68 84 97 79 82

Mean of variances

93

n x Variance of means

1429 Ratio (F)

15.32

10847)7.8091(

...)7.8061()7.8068()(

2

22

1

2

k

itotal XXSS

7.80604839

Xgrand mean:

Calculating SStotal

Source SS df s2 F

Between 5717 5-1=4 1429

Within

Total 10847 59

78

96

74

78

100

97

76

46

75

149

Means

Variances

A B C D E

87 87 99 78 91

81 69 105 70 51

76 81 104 75 64

79 71 108 91 86

68 74 104 83 81

92 62 87 78 72

76 62 104 66 79

93 85 111 74 65

78 75 107 76 65

84 67 76 69 78

61 67 97 72 90

68 84 97 79 82

Mean of variances

93

n x Variance of means

1429 Ratio (F)

15.32

5717)7.8075)(12(

...)7.8074)(12()7.8078)(12()(

2

22

1

2

k

iibet XXnSS

7.80604839

X

1429457172

bet

betbet df

SSs

Calculating SSbet and s2bet

Source SS df s2 F

Between 5717 5-1=4 1429

Within 5130 12x5-5=55 93

Total 10847 59

78

96

74

78

100

97

76

46

75

149

Means

Variances

A B C D E

87 87 99 78 91

81 69 105 70 51

76 81 104 75 64

79 71 108 91 86

68 74 104 83 81

92 62 87 78 72

76 62 104 66 79

93 85 111 74 65

78 75 107 76 65

84 67 76 69 78

61 67 97 72 90

68 84 97 79 82

Mean of variances

93

n x Variance of means

1429 Ratio (F)

15.32

5130)7591(...)7467()7484(

...)7861()7868()(

222

22

1

2

k

iw XXSS

935551302

w

ww df

SSs

Calculating SSw and s2w

Source SS df s2 F

Between 5717 5-1=4 1429 15.32

Within 5130 12x5-5=55 93

Total 10847 59

78

96

74

78

100

97

76

46

75

149

Means

Variances

A B C D E

87 87 99 78 91

81 69 105 70 51

76 81 104 75 64

79 71 108 91 86

68 74 104 83 81

92 62 87 78 72

76 62 104 66 79

93 85 111 74 65

78 75 107 76 65

84 67 76 69 78

61 67 97 72 90

68 84 97 79 82

Mean of variances

93

n x Variance of means

1429 Ratio (F)

15.32

Fcrit with dfs of 4 and 55 and a = .05 is 2.54

Our decision is to reject H0 since 15.32 > 2.54

32.15931429

2

2

w

bet

ssF

Calculating F

Example: Female students in this class were asked how much they exercised, given the choices:

A. Just a littleB. A fair amountC. Very much Is there a significant difference in the heights of students across these four

categories? (Use a = .05)

In other words: H0: mA = mB mC

Summary statistics are:

A B C Total

n 23 37 9 69

Mean 64.17 64.32 66.89 64.61

SS 139.3 416.11 58.89 668.43

Means and standard errors for ‘1-way’ ANOVAs can be plotted as bar graphs with error bars indicating ±1 standard error of the mean.

A B C Total

n 23 37 9 69

Mean 64.17 64.32 66.89 64.61

SS 139.3 416.11 58.89 668.43

Just a little A fair amount Very much60

62

64

66

68

70

Amount of exercise

Hei

ght (

in)

Filling in the table for the ANOVA: SSW and s2w

Source SS df S2 F

Between

Within 614.3 69-3=66 9.3

Total 668.43 69-1=68

SSw = 139.3+416.11+58.89 = 614.3

3.9663.6142

w

ww df

SSs

A B C Total

n 23 37 9 69

Mean 64.17 64.32 66.89 64.61

SS 139.3 416.11 58.89 668.43

Filling in the table for the ANOVA: There are two ways of calculating SSbet

13.54)61.6489.66)(9(

)61.6432.64)(37()61.6417.64)(23()(

2

22

1

2

k

iibet XXnSS

Source SS df S2 F

Between 54.13 3-1=2

Within 614.3 69-3=66 9.3

Total 668.43 69-1=68

A B C Total

n 23 37 9 69

Mean 64.17 64.32 66.89 64.61

SS 139.3 416.11 58.89 668.43

Filling in the table for the ANOVA: There are two ways of calculating SSbet

1.27213.542

bet

betbet df

SSs

Or, use the fact that SStotal = SSwithin + SSbetween

or SSbetween = SStotal - SSwithin = 668.43-614.3= 54.13

Source SS df S2 F

Between 54.13 3-1=2 27.1

Within 614.3 69-3=66 9.3

Total 668.43 69-1=68

A B C Total

n 23 37 9 69

Mean 64.17 64.32 66.89 64.61

SS 139.3 416.11 58.89 668.43

Filling in the table for the ANOVA: F

91.23.91.27

2

2

within

between

ssF

Fcrit for dfs of 2 and 66 and a = .05 is 3.14

Since Fcrit is greater than our observed value of F, we fail to reject H0 and conclude that the female student’s average height does not significantly differ across the amount of exercise they get.Source SS df S2 F

Between 54.13 3-1=2 27.1 2.91

Within 614.3 69-3=66 9.3

Total 668.43 69-1=68

A B C Total

n 23 37 9 69

Mean 64.17 64.32 66.89 64.61

SS 139.3 416.11 58.89 668.43