Bootstrap Methods for Time Series: A Selective Overview...

Transcript of Bootstrap Methods for Time Series: A Selective Overview...

Bootstrap Methods for Time Series:A Selective Overview

Dimitris N. PolitisUniversity of California, San Diego

2

DATA: X1, . . . , Xn from time series {Xt, t ∈ Z}

Different resampling set-ups:

1. Parametric (?) E.g. {Xt} is a Gaussian time serieswith mean µ = EXt and stationary autocovarianceγ(k) = Cov(Xt,Xt+k); cf. Ramos (1988).

2. Semi-parametric.E.g. {Xt} satisfies the ARMA(p, q) equation:

Xt−φ1Xt−1−· · ·−φpXt−p = Zt+θ1Zt−1+· · ·+θqZt−q

or the AR(∞) linear time series model:

Xt =

∞∑k=1

φkXt−k + Zt

where Zt ∼ i.i.d. F (unknown) with EZt = 0.Freedman, Efron/Tibshirani, Kreiss, Paparoditis,Swanepoel/VanWyk, Buhlmann

3

3. Non-parametric.

• Model-based. E.g. nonparametric AR(1)Xt = g(Xt−1) + Zt, where Zt ∼ i.i.d. (0, σ2).

• Model-free. Blocking methods, Markovmethods, Frequency-Domain, etc.

A different classification:

• Frequency-Domain Bootstrap.Hurvich/Zeger (1987), Franke/Hardle (1992),Theiler et al. (1994), Dahlhaus/Janas (1996),Braun/Kulperger (1997), Paparoditis/Politis (1999),Kreiss/Paparoditis (2003), Paparoditis (2003)

• Time-Domain Bootstrap.

– Markov: Rajarshi (1990), Horowitz (2002).

– Local bootstrap: Paparoditis/Politis (2000-2002)

– AR(∞) ‘sieve’ bootstrap: Kreiss (1988, 1992),Paparoditis (1992), Buhlmann (1997, 2002),Buhlmann/Wyner (1999)

– Blocking methods ���

4

The sample mean

Data: X1, . . . , Xn from stationary series {Xt, t ∈ Z}with unknown mean µ = EXt and (equally unknown)autocovariance γ(k) = Cov(Xt,Xt+k).

Xn = 1n

∑ni=1 Xi : consistent & asymptotically efficient

σ2n = Var (

√nXn) =

∑ns=−n(1 − |s|

n)γ(s).

• Under regularity:

σ2∞ := lim

n→∞σ2

n =∞∑

s=−∞γ(s) = 2πf(0)

where f(w) = (2π)−1∑∞

s=−∞ eiwsγ(s) forw ∈ [−π, π], is the spectral density function.

• Standard error estimation is nontrivial underdependence.

5

Standard error estimation

Var (Xn) � σ2∞n

where σ2∞ =

∑∞s=−∞ γ(s)

• γ(s) = n−1∑n−|s|

t=1 (Xt − Xn)(Xt+|s| − Xn)

• Naive plug-in estimator σ2∞,naive =

∑|s|<n γ(s)

• But σ2∞,naive = 2πT (0), where

T (w) =1

2πn|

n∑s=1

eiws(Xs − Xn)|2

• The periodogram T (w) is inconsistent for f(w).

� ET (w) = f(w) + O(1/n) for w �= 0.

� Var T (w) � f 2(w)(1 + 1{w/π∈Z}) �→ 0.

• Furthermore, T (0) ≡ 0!

6

Blocking schemes

Basic assumption: b → ∞ but b/n → 0 as n → ∞.

• Fully overlapping—number of blocks q = n − b + 1

B3︷ ︸︸ ︷B1︷ ︸︸ ︷

X1, X2, X3, · · · , Xb, Xb+1, Xb+2, · · · ,

Bq︷ ︸︸ ︷Xn−b+1, · · · , Xn︸ ︷︷ ︸

B2

• Non-overlapping—number of blocks Q = [n/b]

B1︷ ︸︸ ︷X1, · · · , Xb,

B2︷ ︸︸ ︷Xb+1, · · · , X2b,

B3︷ ︸︸ ︷X2b+1, · · · , X3b, · · · · · ·Xn

• Non-overlapping with ‘buffer’—number ofblocks [n/(2b)]

B1︷ ︸︸ ︷X1, · · · , Xb,

buffer︷ ︸︸ ︷Xb+1, · · · , X2b,

B2︷ ︸︸ ︷X2b+1, · · · , X3b, · · · · · ·Xn

7

Bartlett’s spectral estimation scheme

• Consider one of the blocking schemes—for simplicity:non-overlapping

• Let Ti(w) be the periodogram calculated from Bi

– ETi(w) = f(w) + O(1/b)

– Var Ti(w) � cw

where cw = f 2(w)(1 + 1{w/π∈Z}).

• Define T (w) = Q−1∑Q

i=1 Ti(w).

– ET (w) = f(w) + O(1/b)

– Var T (w) � cw/Q = cw[b/n]

• If b → ∞ but b/n → 0, then T (w)P−→ f(w).

◦ Same argument for overlapping scheme—just cw isdifferent: 33% smaller.

8

Welch’s tapered periodograms

• Lag-window interpretation:

T (w) ≈ fB(w) =1

2π

b∑s=−b

λB(s/b)eiwsγ(s)

where λB(x) = 1 − |x| is Bartlett’s kernel.

◦ Let Tνi (w) be the periodogram calculated from block

Bi tapered, i.e., multiplied, by taper ν : [0, 1] → R+

◦ Define T ν(w) = Q−1∑Q

i=1 Tνi (w). Then:

T ν(w) ≈ 1

2π

b∑s=−b

ν2(s/b)eiwsγ(s)

where ν2 = ν ∗ ν is self-convolution of ν.

• If ν(0) = 0, ν is continuous, increasing on [0, 1/2]and symmetric about 1/2, then ν2 is twicecontinuously differentiable at the origin, and:

– ET ν(w) = f(w) + O(1/b2)

– Var T ν(w) � cνw[b/n]

9

t

w(t)

0.0 0.2 0.4 0.6 0.8 1.0

0.00.2

0.40.6

0.81.0

w(t) TRAP ; c=0.43

t

w*w(

t)

-1.0 -0.5 0.0 0.5 1.0

0.00.2

0.40.6

0.81.0

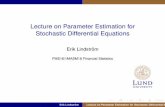

Figure 1: (a) Graph of window wTRAP0.43 ; (b) graph of the self-convolution wTRAP

0.43 ∗ wTRAP0.43 .

t

w(t)

0.0 0.2 0.4 0.6 0.8 1.0

0.00.2

0.40.6

0.81.0

w(t) SMOOTH ; a=1.3

t

w*w(

t)

-1.0 -0.5 0.0 0.5 1.0

0.00.2

0.40.6

0.81.0

Figure 2: (a) Graph of window wSMOOTH1.3 ; (b) graph of the self-convolution wSMOOTH

1.3 ∗wSMOOTH

1.3 (this is like 1 − |x|a).

10

Subsampling standard errors—Carlstein

• Consider all available blocks B1, B2, . . . from one ofthe blocking schemes, e.g., the full-overlap scheme.1

• General statistic of interest θn = θn(X1, . . . , Xn)that is

√n–consistent for some parameter θ.

• Typically,√

n(θn − θ)L⇒ N(0, σ2

θ) as n → ∞.

• Re-compute the statistic θb over the blocksB1, . . . Bq, i.e., let θb,i = θb(Bi).

◦ Let Vb(θn) = q−1∑q

i=1(θb,i − θn)2 denote the sample

variance of the subsample statistics θb,1, . . . , θb,q.

◦ Under some moment, mixing and uniform integrability

conditions, bVb(θn)P−→ σ2

θ as b−1 + b/n → 0.

1Carlstein used non-overlapping blocking scheme for convenience.

11

Subsampling distributions—Politis/Romano

• Assume τn(θn − θ)L⇒ some law J as n → ∞.

• As before, re-compute the statistic θb over the blocksB1, . . . Bq, i.e., let θb,i = θb(Bi).

• Define the subsampling distribution Jb,n as theempirical distribution of the (centered and scaled)subsample statistics θb,1, . . . , θb,q, i.e., let

Jb,n(x) =1

q

q∑i=1

1{τb(θb,i − θn) ≤ x}

� If {Xt} is strong mixing, and b−1 + b/n → 0, then

Jb,n(x)P−→ J(x) for all points of continuity of J .

◦ Confidence intervals and tests under minimalassumptions— not set-up specific!

12

Block Bootstrap: Hall, Kunsch, Liu/Singh

• Consider all available blocks B1, B2, . . . from one ofthe blocking schemes.

• Draw Q = [n/b] blocks B∗1 , . . . , B∗

Q randomly (withreplacement) from the given set of blocks B1, B2, . . .

• Concatenate the elements of the Q bootstrap blocksB∗

1 , . . . , B∗Q to create pseudo-realization X∗

1 , · · · , X∗n

where n = bQ = b[n/b] � n.

B∗1︷ ︸︸ ︷

X∗1 , · · · , X∗

b ,

B∗2︷ ︸︸ ︷

X∗b+1, · · · , X∗

2b, X∗2b+1, · · ·

B∗Q︷ ︸︸ ︷

· · · , X∗n−1, X

∗n

13

Approximately linear statistics

◦ Let θ be a parameter associated with L(X1), andθn = θn(X1, . . . , Xn) an (approximately) linear

statistic satisfying:√

n(θn − θ)L⇒ N(0, σ2

θ).

◦ For example, θn = 1n

∑nt=1 g(Xt) + oP ( 1√

n).

◦ Re-compute θn over the pseudo-realizationX∗

1 , · · · , X∗n, i.e., let θ∗n = θn(X

∗1 , . . . , X∗

n).

� Then, under some moment, mixing and regularityconditions, as b−1 + b/n → 0, BB ‘works’, i.e.

supx |P ∗(√

n(θ∗n− θn) ≤ x)−P (√

n(θn−θ) ≤ x)| P−→ 0

• Can equally handle continuous functions ofapproximately linear statistics.

◦ Bootstrap distribution may require explicit centeringas E∗θ∗n �= θn in general due to edge effects—use acircular scheme.

14

Parameters of joint distributions

◦ Let θ be a parameter associated with the joint lawL(X1, X2, . . . , Xp)

◦ Let g : Rp → R

d and θn = θn(X1, . . . , Xn) be anapproximately linear statistic of the type:

θn =1

n − p + 1

n−p+1∑t=1

g(Xt, Xt+1, . . . , Xt+p−1)+oP (1√n

)

• For example, assume µ = 0 and let p = 2, d = 2 andg(x1, x2) = (x2

1, x1x2)′. Then, θn = (γ(0), γ(1))′.

◦ How to bootstrap θn?

◦ How to bootstrap the sample autocorrelationρ(1) = γ(1)/γ(0); it is a smooth function of θn.

15

Blocks-of-blocks bootstrap

◦ Naive BB scheme:

– BB on X1, . . . , Xn yields X∗1 , . . . , X∗

n

– Re-compute θn over X∗1 , . . . , X∗

n to get θ∗n.

◦ Blocks-of-blocks bootstrap:

– Define Yt = (Xt, Xt+1, . . . , Xt+p−1) fort = 1, . . . , N where N = n − p + 1

– Then, θn = N−1∑N

t=1 g(Yt) + oP ( 1√n)

– Perform BB on Y1, . . . , YN to get Y �1 , . . . , Y �

N

– Re-compute θn over Y �1 , . . . , Y �

N to get θ�n.

• Both schemes work asymptotically when p is finitebut naive scheme has bias due to edge effects.

• Naive scheme fails if p = ∞, e.g., θ =∑∞

k=−∞ γ(k)but blocks-of-blocks scheme still works—Politis, 1990.

16

Circular blocking schemes

◦ Data periodically extended ‘modulo’ n

X1, X2, X3, · · · , Xb,Xb+1, · · · , Xn, X1, X2, X3, · · ·

◦ No edge effects! Bootstrap distribution isautomatically centered correctly.

• Circular Block Bootstrap: Fixed block size b andnumber of blocks = n

B1︷ ︸︸ ︷X1, X2, X3, · · · , Xb, Xb+1, · · · ,

Bn︷ ︸︸ ︷Xn, X1, X2, X3, · · · , Xb−1︸ ︷︷ ︸

B2

• Stationary Bootstrap: Random block size withexpected value b; blocks of all sizes are available.

◦ If the block sizes are drawn from a geometricdistribution, then SB sample paths are stationary.

17

The sample mean revisited

• Subsampling for the sample mean works in greatgenerality; all that is required is strong mixing and

τn(θn − θ)L⇒ some law J as n → ∞.

• J can be heavy-tailed α-stable with τn = n1−1/αL(n).

� BB/CB/SB bootstrap work only when a CLT holdsfor the sample mean, i.e. J is normal and τn = n1/2.

• Why? Let θ∗n denote the BB sample mean.

◦ θ∗n= n−1∑Q

i=1 X∗i = (bQ)−1

∑Qk=1

∑bj=1 X∗

(k−1)b+j

= Q−1∑Q

k=1 θb,i where n = bQ = b[n/b] � n.

◦ θ∗n is the average of Q subsample sample means θb,i.

� The BB distribution of θ∗n is a Q–fold convolution ofthe subsampling distribution Jb,n with itself.

� Q = [n/b] → ∞; hence the BB distribution tends tonormal regardless of the shape of Jb,n (and of J).

18

Higher-order accuracy

� Under some strong moment and mixing conditions,the studentized BB/CB/SB distributions all havehigher-order accuracy—cf. Lahiri, Gotze/Kunsch; i.e.,for some δ > 1/2 (but unfortunately < 1):

supx |P ∗(√

n(θ∗n−θn)σ∗n

≤ x) − P (√

n(θn−θ)σn

≤ x)| = OP (n−δ)

whereas supx |Φ(x) − P (√

n(θn−θ)σn

≤ x)| = OP (n−1/2)

� Proper studentization for BB/CB/SB is cumbersome.

◦ Extrapolation techniques can make subsamplinghigher-order accurate as well; cf. Bertail/Politis.

◦ Extrapolated/interpolated subsampling rate is slightlyslower than BB/CB/SB in the sample mean case.

◦ But for the sample median and other quantilestatistics, subsampling has faster rate than bootstrapin the i.i.d. seting—Arcones, Bickel/Sakov.

19

Standard error estimation

• Let σ2b,BB, σ2

b,CB ,σ2b,SB and σ2

b,SUB be the

BB/CB/SB and subsampling2 estimators ofσ2∞ = limn Var (

√nX).

• Then, σ2b,BB ≈ σ2

b,CB ≈ σ2b,SUB ≈ 2πfB(0), the

Bartlett estimator—they all have bias O(1/b) and

variance approximately 4σ4∞3

bn.

• σ2b,SB is approximately a linear combination of σ2

bi,CB

with bi close to b; still Bias(σ2b,BB) = O(1/b) but has

variance ∼ cSB(b/n) for cSB > (4/3)σ4∞.

• SB is less sensitive to block size mispecification.

2All using full-overlap scheme

20

Block size considerations

◦ Can choose b to minimize the MSE of σ2b :

bias/variance trade-off yields bopt ∼ cn1/3; but theproportionality constant c involves f(0) and f ′(0).

◦ Pretending f(0) and f ′(0) are known, we cancompute bopt for all methods, MSEs and AREs.

MSEopt ≈ C∗ n−2/3 for BB/CB/SUB

MSEopt,SB ≈ CSB

n−2/3 for SB

◦ Can show: 1/3 < ARE(SB/BB) < 1/2.

• But f(0) and f ′(0) are unknown.

• Let bopt be optimal block size estimator based onestimated f(0) and f ′(0).3

• Define Finite-sample Attainable Relative Efficiency(FARE) as a ratio of MSEs based on estimated bopt.

• FARE (SB/BB) close to one for small samples;4

3More on this later...4SB is less sensitive to block size.

21

MSE rates

◦ The rate MSEopt = O(n−2/3) for BB/CB/SB/SUB

and the Bartlett 2πfB(0) is suboptimal.

◦ Can achieve O(n−4/5) with nonnegative/quadraticspectral estimators, e.g. Parzen window, Daniel,etc.—but also with Welch’s scheme.

◦ Welch’s tapering idea is applicable to BB.

• Can actually further reduce the MSE to close toO(1/n) by use of higher-order kernels—but notnecessarily nonnegative estimation.

22

Tapered block bootstrap—Paparoditis/Politis

• Assume Xt is centered5 and consider all availableblocks B1, B2, . . . from one of the blocking schemes.

• Draw Q = [n/b] blocks B∗1 , . . . , B∗

Q randomly (withreplacement) from the given set of blocks B1, B2, . . .

• Taper the data from each block using the taper ν.Let B�

1, . . . , B�Q denote the tapered blocks.

• Concatenate the elements of the Q tapered blocksB�

1, . . . , B�Q to create pseudo-realization X�

1 , · · · , X�n

B�1︷ ︸︸ ︷

X�1 , · · · , X�

b ,

B�2︷ ︸︸ ︷

X�b+1, · · · , X�

2b, X�2b+1, · · ·

B�Q︷ ︸︸ ︷

· · · , X�n−1, X

�n

5centered at the sample mean will do.

23

TBB/BB comparisons

BB/CB or SUB with fully overlapping blocks:

• Bias(σ2b,BB) ≈ −2π“f ′”(0)/b

• Var (σ2b,BB) ≈ 8π2f 2(0)||λB||2 · (b/n)

• bopt,BB ∼ cBB

n1/3 and MSEopt,BB = O(n−2/3)

where “f ′”(w) = (2π)−1∑∞

k=−∞ |k|γ(k)eiwk.

For TBB:

◦ Bias(σ2b,TBB) ≈ −πf ′′(0)/b2

◦ Var (σ2b,TBB) ≈ 8π2f 2(0)||ν ∗ ν||2 · (b/n)

◦ bopt,TBB ∼ cTBB

n1/5 and MSEopt,TBB = O(n−4/5)

—Optimal block sizes depend on f and its derivatives.

—Need accurate estimation of f (and its derivatives).

24

Higher-order kernels in spectral estimation

◦ General lag-window spectral density estimator:

f (w) =1

2π

b∑s=−b

λ(s/b)eiwsγ(s)

◦ Note: f (w) can be equivalently defined as akernel-smoothed periodogram with kernel

Λ(w) =1

2π

∞∑s=−∞

λ(s)eiws

◦ Λ is said to be of order q if∫

wkΛ(w) = 0 fork = 1, . . . , q − 1, and

∫wqΛ(w) �= 0.6

◦ If f has r continuous derivatives and ζ = min(r, q):

– Bias(f(w)) ≈ −(ζ !)−1f (ζ)(w)/bζ

– Var (f(w)) ≈ f 2(w)||λ||2 · (1 + 1{w/π∈Z})(b/n)

◦ bopt,f ∼ cλ,w

n1/(2ζ+1) and MSEopt,f = O(n−2/(2ζ+1))

6Bartlett kernel is ‘almost’ order one:∫ m

−m wΛ(w) → 0 as m → ∞ (Cauchy principal value integral).

25

Flat-top lag-windows in spectral estimation

◦ To get Bias(f(w)) = O(1/br) we need q ≥ r, i.e.,order of kernel ≥ number of continuous derivatives.

◦ But r is unknown; so use a kernel with q = ∞.

◦ Simplest infinite-order kernel: flat-top lag-window(Politis/Romano) λT (x) = min(1, 2(1 − |x|)+).

� Flat-top lag-window spectral density estimator:

fT (w) =

[1

2π

b∑s=−b

λT (s/b)eiwsγ(s)

]+

� Not only MSEopt,ft= O(n−2/(2r+1))—best possible

but choosing the bandwidth b for ft is very intuitivebased on a correlogram inspection.

• Empirical Rule: if γ(s) � 0 for all s ≥ s0, let b = 2s0.

26

lag window

0.0 0.2 0.4 0.6 0.8 1.0

0.0

0.2

0.4

0.6

0.8

1.0

1(a)

Fejer kernel

0 5 10 15 20

0.0

0.0

20

.06

0.1

0

1(b)

lag window

0.0 0.2 0.4 0.6 0.8 1.0

0.0

0.2

0.4

0.6

0.8

1.0

1(c)

Dirichlet kernel

0 5 10 15 20

0.0

0.1

0.2

0.3

1(d)

lag window

0.0 0.2 0.4 0.6 0.8 1.0

0.0

0.2

0.4

0.6

0.8

1.0

1(e)

flat-top kernel, c=0.5

0 5 10 15 20

0.0

0.0

50

.10

0.1

5

1(f)

1(g) 1(h)

27

MSE—optimal standard error estimation

� Can get flat-top by linear combination of two Bartlettwindows: λT (x) = 2λB(x) − λB(2x).

◦ Recall: σ2b,BB ≈ σ2

b,CB ≈ σ2b,SUB ≈ 2πfB(0).

� Let σ2b = 2σ2

b,BB − σ2b/2,BB.

� Then MSEopt,σ2b

= O(n−2/(2r+1))—best possible.

� But σ2b is not necessarily nonnegative.

– Define σ2b,+ = max(ε, σ2

b ) for small ε ≥ 0.

– There is no bootstrap scheme P� such thatVar �(

√nX�) = σ2

b or σ2b,+

28

Bandwidth/block choice for fT and σ2b

◦ Empirical Rule: if γ(s) � 0 for all s ≥ s0, let b = 2s0.

◦ γ(s) � 0 for s ≥ s0 is an implied test of significance.

◦ Focus on sample autocorrelation ρ(k) = γ(k)/γ(0).

� Formal Rule: Let b = 2s0 where s0 be the smallestpositive integer such that

|ρ(s0 + k)| < c

√log

10n

n for all k = 1, . . . ,Kn.

• Practical choice: c = 2 with Kn = max(5,√

log10 n).

◦ If 100< n <1000, then 1.41 <√

log10

n < 1.73.

� Practical Rule: If ρ(k) is in the band ±3/√

n for fiveconsecutive points k-points, then ρ(k) � 0.

� Interpretation: If Kn ≈ 5, the above bandscorrespond to 95% simultaneous intervals forρ(s0 + 1), ρ(s0 + 2), . . . , ρ(s0 + 5) by Bonferroni.

29

Lag

AC

F

0 5 10 15 20

-0.2

0.2

0.6

1.0

Series : AR1 (a)

w

f

0.0 0.5 1.0 1.5 2.0 2.5 3.0

0.0

0.2

0.4

0.6

hat m =1 (b)

w

f

0.0 0.5 1.0 1.5 2.0 2.5 3.0

0.0

0.2

0.4

0.6

hat m =2 (c)

w

f

0.0 0.5 1.0 1.5 2.0 2.5 3.0

0.0

0.2

0.4

0.6

hat m =3 (d)

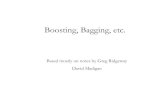

Figure 3: Gaussian AR(1) acf and different bandwidth choices for flat-top lag-window spec-tral density estimation; n = 200. Superimposed are the naive ±2/

√n bands.

30

lag

ACF

0 5 10 15 20

0.00.2

0.40.6

0.81.0

Figure 4: A ‘problematic’ correlogram from an AR(1) model with ρ = 0.3 and n = 500.Superimposed are the correct bands ±c

√log10 n/n with c = 2 recommended in connection

with Kn = max(5,√

log10 n).

A problematic correlogram

◦ The values c = 2 and Kn = max(5,√

log10 n) arerecommendations—not absolute requirements.

◦ Faced with a problematic correlogram, thepractitioner must make an informed decision.

◦ When in doubt, choose the smallest b.

– Flat-tops perform best with small bs.

– “Okham’s razor” favors the simplest model.

31

Block size choice for BB/CB/SUB and TBB

BB/CB or SUB with fully overlapping blocks:

• Bias(σ2b,BB) ≈ −2π“f ′”(0)/b

• Var (σ2b,BB) ≈ 8π2f 2(0)||λB||2 · (b/n)

• bopt,BB ∼ cBB

n1/3 and MSEopt,BB = O(n−2/3)

where “f ′”(w) = (2π)−1∑∞

k=−∞ |k|γ(k)eiwk.

For TBB:

◦ Bias(σ2b,TBB) ≈ −πf ′′(0)/b2

◦ Var (σ2b,TBB) ≈ 8π2f 2(0)||ν ∗ ν||2 · (b/n)

◦ bopt,TBB ∼ cTBB

n1/5 and MSEopt,TBB = O(n−4/5)

—Optimal block sizes depend on f and its derivatives.

—Estimate f via flat-top kernel and plug-in!

32

Locally Stationary Series–Dahlhaus

◦ Stationarity assumption is often unrealistic for verylong time series.

◦ More realistic model: assume a slowly-changingstochastic structure, i.e. (for fixed k) the jointprobability law of (Xt+1, . . . , Xt+k) changessmoothly (and slowly) with the time index t.

• Local Block Bootstrap—Paparoditis/Politis (2002).

• LBB resamples blocks that are close to each other,i.e., a block that starts at time t, can only be replacedwith a block whose starting point is close to t.

– An LBB bootstrap pseudo-series is constructed bya concatenation of Q blocks of size b.

– The jth block of the resampled series is chosenrandomly from a distribution (say, uniform) on allthe size-b blocks whose time indices are ‘close’ tothose in the original jth block.

33

year

1880 1920 1960

12

34

5Figure 3a: annual S&P 500 data

year

1880 1920 1960

12

34

5

Figure 3b: BB realization

year

1880 1920 1960

23

4

Figure 3c: CBB realization

Integrated Series, Unit Roots and Random Walks

e.g.: S&P 500 (figure), stock prices, foreign exchange...

DEFINITION: {Xt} is I(1), i.e., integrated of order one,if {Xt} is not stationary but its first difference series{Yt} is stationary, where Yt = Xt − Xt−1.

• I(1) sample-paths are “continuous”.

• BB destroys the sample-path continuity; see figure.

• Continuous-Path BB—Paparoditis/Politis (2001)

• CBB IDEA: Adjust (shift) the BB blocks to ensurecontinuity of the bootstrap sample paths.

◦ Can employ CBB to test for unit root or cointegration.

34

![Lecture 12 Heteroscedasticity · • Now, we have the CLM regression with hetero-(different) scedastic (variance) disturbances. (A1) DGP: y = X + is correctly specified. (A2) E[ |X]](https://static.fdocument.org/doc/165x107/6106a6b3fb4f960ead0036bd/lecture-12-h-a-now-we-have-the-clm-regression-with-hetero-different-scedastic.jpg)