
The Golden Ratio in Communication...

Blackwell’s Trapdoor Channel

Task Assignment

Paul Cuff (Cover, Permuter, Weissman, Van Roy)

Stanford University

November 24, 2008

[Figure: diagram with labels 1, φ, and φ²]

Paul Cuff (Stanford University) φ 2008 November 24, 2008 1 / 25

Outline - Markov Chain

$\phi = \frac{\sqrt{5}+1}{2}$

[Figure: network with source X ∼ p0(x), nodes Y and Z, and rates R1 and R2]

The Trapdoor Channel

Introduced by David Blackwell in 1961. [Ash 65], [Ahlswede & Kaspi 87], [Ahlswede 98], [Kobayashi 02 & 03].

A “simple two-state channel.” - Blackwell

The Trapdoor Channel

[Figure: input balls labeled 0 and 1 enter the channel, which holds one ball, and leave as output]

$s_t = s_{t-1} + x_t - y_t$

$s_0 = 0$

$x_1 = 1,\ s_1 = 1,\ y_1 = 0,$

$x_2 = 0,\ s_2 = 1,\ y_2 = 0,$

$x_3 = 1,\ s_3 = 1,\ y_3 = 1.$
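The state recursion above can be sketched as a small simulation. This is an illustrative sketch, not code from the talk: the names `trapdoor_step` and `simulate` are hypothetical, and it assumes the usual reading of the trapdoor channel, in which the ball already in the channel and the newly inserted ball each exit with probability 1/2.

```python
import random

def trapdoor_step(state, x, rng):
    """One channel use. Assumption: the new ball x and the ball in the
    channel (state) each exit with probability 1/2."""
    y = x if rng.random() < 0.5 else state
    new_state = state + x - y  # s_t = s_{t-1} + x_t - y_t
    return new_state, y

def simulate(xs, s0=0, seed=0):
    """Feed the inputs xs through the channel; return (states, outputs)."""
    rng = random.Random(seed)
    s, states, ys = s0, [], []
    for x in xs:
        s, y = trapdoor_step(s, x, rng)
        states.append(s)
        ys.append(y)
    return states, ys

# Same inputs as the slide; the outputs depend on the random draws.
states, ys = simulate([1, 0, 1])
print("states:", states, "outputs:", ys)
```

Because a ball is never created or destroyed, the number of ones entering the channel always equals the number of ones that have left plus the one possibly still inside.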

Communication without Feedback

Repeat each bit three times: R = 1/3 bit.

Repeat each bit twice: R = 1/2 bit. [Ahlswede & Kaspi 87]

C ≈ 0.572 bits per channel use. [Kobayashi & Morita 03]

$C = \lim_{n\to\infty} \max_{p(x_1,\dots,x_n)} \frac{1}{n} I(X^n; Y^n).$

[Figure: plot of $\frac{1}{n} I(X^n; Y^n)$ against n = 1, ..., 8, with the level 0.572 marked]
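For small n, the quantity $\frac{1}{n} \max I(X^n; Y^n)$ can be approximated numerically. The sketch below is an assumption-laden illustration, not the method of the cited papers: it builds the block transition matrix $p(y^n \mid x^n)$ for the initial state $s_0 = 0$ and runs the standard Blahut-Arimoto iteration on it. The function names are hypothetical.

```python
import itertools
import numpy as np

def trapdoor_block_channel(n, s0=0):
    """Return W[x, y] = p(y^n | x^n) for the trapdoor channel started in s0."""
    seqs = list(itertools.product([0, 1], repeat=n))
    index = {seq: i for i, seq in enumerate(seqs)}
    W = np.zeros((len(seqs), len(seqs)))
    for xi, xs in enumerate(seqs):
        paths = {(s0, ()): 1.0}  # (state, outputs so far) -> probability
        for x in xs:
            nxt = {}
            for (s, ys), p in paths.items():
                for y in {x, s}:  # the exiting ball is either x or s
                    q = p if x == s else p / 2
                    key = (s + x - y, ys + (y,))
                    nxt[key] = nxt.get(key, 0.0) + q
            paths = nxt
        for (_, ys), p in paths.items():
            W[xi, index[ys]] += p
    return W

def blahut_arimoto(W, iters=2000):
    """Approximate max_p I(X; Y) in bits for the channel matrix W."""
    p = np.full(W.shape[0], 1.0 / W.shape[0])

    def divergences(p):
        q = p @ W  # output distribution
        ratio = np.divide(W, q, out=np.ones_like(W), where=W > 0)
        return (W * np.log2(ratio)).sum(axis=1)  # D(W(.|x) || q) per input

    for _ in range(iters):
        p = p * np.exp2(divergences(p))
        p /= p.sum()
    return float(p @ divergences(p))

for n in range(1, 5):
    rate = blahut_arimoto(trapdoor_block_channel(n)) / n
    print(n, round(rate, 4))
```

For n = 1 with $s_0 = 0$ the block channel is a Z-channel with crossover probability 1/2, whose capacity $\log_2 5 - 2 \approx 0.322$ bits gives a quick sanity check.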

Communication Setting (with feedback)

Figure: Communication with feedback. The message $m$ enters the encoder, which emits $x_t(m, y^{t-1})$. The chemical channel $p(y_t \mid x^t, y^{t-1})$ produces $y_t$. The decoder outputs the estimated message $\hat{m}(y^N)$. The output $y_t$ returns to the encoder through a unit delay as feedback.

Feedback Capacity of Unifilar, Strongly Connected, FSC

Capacity of the Trapdoor Channel

$C = \lim_{n\to\infty} \frac{1}{n} \max_{p(x_1,\dots,x_n)} I(X^n; Y^n)$

Feedback capacity of the Trapdoor Channel

$C_{FB} = \lim_{n\to\infty} \frac{1}{n} \max_{\{p(x_i \mid x^{i-1}, y^{i-1})\}_{i=1}^{n}} I(X^n \to Y^n)$

[Permuter, Weissman & Goldsmith ISIT06]

Directed Information

Mutual Information

$I(X^n; Y^n) = \sum_{i=1}^{n} I(X^n; Y_i \mid Y^{i-1})$

Directed Information was defined by Massey in 1990.

$I(X^n \to Y^n) \triangleq \sum_{i=1}^{n} I(X^i; Y_i \mid Y^{i-1})$

Intuition [Massey 05]:

$I(X^n; Y^n) = I(X^n \to Y^n) + I(Y^{n-1} \to X^n)$
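The decomposition of mutual information into two directed terms can be checked numerically for n = 2. The sketch below is only an illustration on a random joint distribution. It assumes that $I(Y^{n-1} \to X^n)$ stands for $\sum_i I(Y^{i-1}; X_i \mid X^{i-1})$, which for n = 2 reduces to $I(Y_1; X_2 \mid X_1)$. The helper names are hypothetical.

```python
import numpy as np

def entropy(p, keep):
    """Entropy in bits of the marginal of the joint array p on the axes in keep."""
    drop = tuple(a for a in range(p.ndim) if a not in keep)
    m = np.asarray(p.sum(axis=drop)).ravel()
    m = m[m > 0]
    return float(-(m * np.log2(m)).sum())

def cond_mi(p, a, b, c):
    """I(A; B | C), where a, b, c are tuples of axis indices."""
    return (entropy(p, a + c) + entropy(p, b + c)
            - entropy(p, a + b + c) - entropy(p, c))

# Axes: 0 = X1, 1 = X2, 2 = Y1, 3 = Y2 (arbitrary random joint distribution).
rng = np.random.default_rng(0)
p = rng.random((2, 2, 2, 2))
p /= p.sum()

mutual = cond_mi(p, (0, 1), (2, 3), ())
directed_xy = cond_mi(p, (0,), (2,), ()) + cond_mi(p, (0, 1), (3,), (2,))
directed_yx = cond_mi(p, (2,), (1,), (0,))  # I(Y1; X2 | X1)

print(mutual, directed_xy + directed_yx)
```

The two printed numbers agree up to floating-point error, and the directed term never exceeds the mutual information.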

Feedback Capacity of Unifilar, Strongly Connected, FSC

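$C_{FB} = \lim_{N\to\infty} \frac{1}{N} \max_{\{p(x_t \mid x^{t-1}, y^{t-1})\}_{t=1}^{N}} I(X^N \to Y^N)$

$C_{FB} = \lim_{N\to\infty} \frac{1}{N} \max_{\{p(x_t \mid x^{t-1}, y^{t-1})\}_{t=1}^{N}} \sum_{t=1}^{N} I(X^t; Y_t \mid Y^{t-1})$

$C_{FB} = \lim_{N\to\infty} \frac{1}{N} \max_{\{p(x_t \mid s_{t-1}, y^{t-1})\}_{t=1}^{N}} \sum_{t=1}^{N} I(X_t, S_{t-1}; Y_t \mid Y^{t-1})$

$C_{FB} = \sup_{\{p(x_t \mid s_{t-1}, y^{t-1})\}_{t \ge 1}} \liminf_{N\to\infty} \frac{1}{N} \sum_{t=1}^{N} I(X_t, S_{t-1}; Y_t \mid Y^{t-1})$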

Dynamic Programming (infinite horizon, average reward)

Variable Assignments

State: $\beta_t = p(s_t \mid y^t)$

Action: $u_t = p(x_t \mid s_{t-1})$

Disturbance: $w_t = y_{t-1}$

Dynamic Programming Requirements

State evolution:

$\beta_t = F(\beta_{t-1}, u_t, w_t)$

Reward function per unit time:

$g(\beta_{t-1}, u_t) = I(X_t, S_{t-1}; Y_t \mid \beta_{t-1})$

Similar dynamic programming approaches:

Yang, Kavcic & Tatikonda - 2005

Chen & Berger - 2005

Mitter & Tatikonda - 2006

Dynamic Programming (infinite horizon, average reward)

Dynamic Programming Operator T

The dynamic programming operator T is given by

$T \circ J(\beta) = \sup_{u \in U} \left( g(\beta, u) + \int P_w(dw \mid \beta, u)\, J(F(\beta, u, w)) \right).$

Bellman Equation

If there exist a function J(β) and constant ρ that satisfy

$J(\beta) = T \circ J(\beta) - \rho$

then ρ is the optimal infinite horizon average reward.
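To make the Bellman equation concrete, here is a minimal sketch of relative value iteration on a toy finite problem. It is not the trapdoor-channel dynamic program, whose state β is continuous. The two-state, two-action transition probabilities `P` and rewards `g` are made-up numbers chosen only so that the iteration converges.

```python
import numpy as np

# Hypothetical toy problem: P[a, s, s2] = Pr(next state s2 | state s, action a),
# g[s, a] = reward per unit time. These numbers are illustrative only.
P = np.array([[[0.9, 0.1], [0.4, 0.6]],
              [[0.2, 0.8], [0.7, 0.3]]])
g = np.array([[1.0, 0.0],
              [0.5, 2.0]])

def bellman_operator(J):
    """(T J)(s) = max_a [ g(s, a) + sum_s2 P(s2 | s, a) J(s2) ]."""
    return (g + np.einsum("ast,t->sa", P, J)).max(axis=1)

# Relative value iteration: subtract the value at a reference state (state 0)
# after each application of T so that the iterates stay bounded.
J = np.zeros(2)
for _ in range(500):
    TJ = bellman_operator(J)
    J = TJ - TJ[0]

rho = bellman_operator(J)[0]  # average reward, since J[0] = 0
residual = np.max(np.abs(bellman_operator(J) - rho - J))
print("rho =", rho, "Bellman residual =", residual)
```

A residual near zero means the pair (J, ρ) satisfies $J = T \circ J - \rho$ for this toy problem, which is the same guess-and-verify logic the talk applies to the trapdoor channel.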

Trapdoor Channel—20th Value iteration

[Figure: four panels plotted against β ∈ [0, 1] after 20 value iterations: J20, δ, γ, and a histogram of β (relative frequency)]

Calculation and Coincidence

Trapdoor channel: CFB ≈ 0.694 bits

Paul Cuff (Stanford University) φ 2008 November 24, 2008 14 / 25

Calculation and Coincidence

Trapdoor channel: CFB ≈ 0.694 bits

Homework Question

Entropy rate. Find the maximum entropy rate of the following two-stateMarkov chain:

BA

1− pp

1

Paul Cuff (Stanford University) φ 2008 November 24, 2008 14 / 25

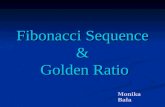

Calculation and Coincidence

Trapdoor channel: CFB ≈ 0.694 bits

Homework Question

Entropy rate. Find the maximum entropy rate of the following two-stateMarkov chain:

BA

1− pp

1

Solution: (Golden Ratio: φ =√

5+12 )

p⋆ = φ− 1 = 1φ

H(X ) = log φ = 0.6942... bits

Paul Cuff (Stanford University) φ 2008 November 24, 2008 14 / 25

Calculation and Coincidence

Trapdoor channel: CFB ≈ 0.694 bits

Homework Question

Entropy rate. Find the maximum entropy rate of the following two-stateMarkov chain:

BA

1− pp

1

Solution: (Golden Ratio: φ =√

5+12 )

p⋆ = φ− 1 = 1φ

H(X ) = log φ = 0.6942... bits

Paul Cuff (Stanford University) φ 2008 November 24, 2008 14 / 25
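The homework answer can be reproduced with a short numerical search. The sketch below makes one labeling assumption that the extracted diagram does not settle: `q` is the probability of leaving state A for state B, and B returns to A with probability 1. The stationary weight of A is then 1/(1 + q) and the entropy rate is H(q)/(1 + q).

```python
import math

def binary_entropy(q):
    """Binary entropy in bits."""
    if q in (0.0, 1.0):
        return 0.0
    return -q * math.log2(q) - (1 - q) * math.log2(1 - q)

def entropy_rate(q):
    """Entropy rate when A moves to B with probability q (assumed labeling)
    and B always returns to A."""
    return binary_entropy(q) / (1 + q)

phi = (math.sqrt(5) + 1) / 2

# Grid search over the leaving probability q.
grid = [i / 100000 for i in range(1, 100000)]
q_best = max(grid, key=entropy_rate)

print("leaving probability:", q_best)      # close to 1/phi^2
print("staying probability:", 1 - q_best)  # close to 1/phi
print("max entropy rate   :", entropy_rate(q_best), "vs log2(phi) =", math.log2(phi))
```

Under this labeling the optimal probability of staying in A is about 0.618 = 1/φ, which agrees with the slide's $p^\star$ if p labels the self-loop.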

Equivalent Channel Formulation

Rename channel input: $\tilde{x}_t = x_t \oplus s_{t-1}$.

Rename channel output: $\tilde{y}_t = y_t \oplus y_{t-1}$.

Case 1: $\tilde{x}_t = 0$

$\tilde{x}_t = 0 \Rightarrow \tilde{y}_t = \tilde{x}_{t-1}$.

Proof: $\tilde{x}_t = 0$ means $x_t = s_{t-1}$, so $x_t = s_{t-1} = y_t = s_t$. The state update at time $t-1$ then gives

$x_{t-1} \oplus s_{t-2} = y_{t-1} \oplus s_{t-1}$

$x_{t-1} \oplus s_{t-2} = y_{t-1} \oplus y_t$

that is, $\tilde{x}_{t-1} = \tilde{y}_t$.

Case 2: $\tilde{x}_t = 1$

$\tilde{x}_t = 1 \Rightarrow \tilde{y}_t \sim \mathrm{Bern}(1/2)$, independent of the past.
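Both cases of the renamed channel can be checked by simulation. This is a hedged sketch: it assumes the XOR renaming of input and output described above, and it assumes the new ball and the stored ball each exit with probability 1/2. The function and variable names are hypothetical.

```python
import random

def simulate_trapdoor(xs, rng, s0=0):
    """Return (states_before, ys): the state seen by each input and each output."""
    s, states_before, ys = s0, [], []
    for x in xs:
        states_before.append(s)
        y = x if rng.random() < 0.5 else s  # either ball exits, probability 1/2 each
        s = s + x - y                       # s_t = s_{t-1} + x_t - y_t
        ys.append(y)
    return states_before, ys

rng = random.Random(1)
n = 10000
xs = [rng.randint(0, 1) for _ in range(n)]
states_before, ys = simulate_trapdoor(xs, rng)

# Renamed input and output (the renamed output is defined from the second use on).
x_tilde = [x ^ s for x, s in zip(xs, states_before)]
y_tilde = [None] + [ys[t] ^ ys[t - 1] for t in range(1, n)]

# Case 1: whenever the renamed input is 0, the renamed output repeats the
# previous renamed input.
case1_ok = all(y_tilde[t] == x_tilde[t - 1]
               for t in range(1, n) if x_tilde[t] == 0)

# Case 2: when the renamed input is 1, the renamed output looks like a fair coin.
noisy = [y_tilde[t] for t in range(1, n) if x_tilde[t] == 1]
print(case1_ok, sum(noisy) / len(noisy))
```

Case 1 holds on every sample path, while Case 2 is only checked statistically.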

Equivalent Channel

Two states specify whether the channel is all noise or noise-free.

[Figure: two transition diagrams from $\tilde{X}_{t-1}$ to $\tilde{Y}_t$. For $\tilde{X}_t = 0$ the mapping is noise-free (0 to 0, 1 to 1). For $\tilde{X}_t = 1$ every transition has probability 1/2.]

Use Markov input

[Figure: two-state Markov chain on input values 0 and 1 with transition probabilities 1 − p, p, and 1]

Decoding Example

Transmitter promises to never use $\tilde{x} = 1$ twice in a row.

The number of such sequences of length n follows the Fibonacci sequence.

Decoding rules

$\tilde{x}_t = 0 \Rightarrow \tilde{x}_{t-1} = \tilde{y}_t$

$\tilde{x}_t = 1 \Rightarrow \tilde{x}_{t-1} = 0$

$\tilde{x}^n$: 1 0 1 0 0

$\tilde{y}^n$: 1 1 1 1 0 0 1
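The counting claim and the two decoding rules can be sketched in a few lines. The code is illustrative only. In particular, it assumes the decoder knows the final renamed input (here taken to be 0), which the extracted slide does not state, and the function names are hypothetical.

```python
from itertools import product

def count_no_consecutive_ones(n):
    """Count binary sequences of length n that never contain two 1s in a row."""
    return sum(1 for seq in product([0, 1], repeat=n)
               if all(not (a and b) for a, b in zip(seq, seq[1:])))

def decode(y_tilde, last_input=0):
    """Walk backwards through the block applying the two decoding rules.
    last_input is the final renamed input, assumed known to the decoder."""
    n = len(y_tilde)
    x = [0] * n
    x[-1] = last_input
    for t in range(n - 1, 0, -1):
        # Rule 1: current input 0 -> previous input equals the current output.
        # Rule 2: current input 1 -> previous input must be 0.
        x[t - 1] = y_tilde[t] if x[t] == 0 else 0
    return x

print([count_no_consecutive_ones(n) for n in range(1, 7)])  # [2, 3, 5, 8, 13, 21]
print(decode([1, 1, 1, 1, 0, 0, 1]))                        # [1, 0, 1, 0, 0, 1, 0]
```

With the output sequence from the slide, the first five decoded symbols coincide with the partial input sequence shown there. Since the counts grow like powers of φ, the zero-error rate of this scheme approaches log φ bits per channel use.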

Dynamic Programming 20th Value iteration

[Figure: four panels plotted against β ∈ [0, 1] after 20 value iterations: J20, δ, γ, and a histogram of β (relative frequency)]

Bellman Equation: $J(\beta) = T \circ J(\beta) - \rho$.

Conjectured Solution to the Bellman Equation

[Figure: conjectured solution plotted against β ∈ [0, 1] in three panels, including δ and γ, with breakpoints b1, b2, b3, b4. Annotations on the curves: $\frac{3-\sqrt{5}}{2}$, $\frac{\sqrt{5}-1}{2}\beta$, $\frac{\sqrt{5}-1}{2}\beta + \frac{3-\sqrt{5}}{2}$, $H(\beta) - \rho\beta + c_2$, and $H(\beta) + \rho\beta + c_1$]

Bellman Equation: $J(\beta) = T \circ J(\beta) - \rho$.

Feedback Capacity and Zero-Error Capacity

Bellman Equation is satisfied.

Trapdoor channel feedback capacity:

CFB = log φ = 0.6942... bits.

C ≈ 0.572 bits.

Trapdoor channel zero-error capacity:

CFB = log φ.

C = 0.5 bits.

[Ahlswede & Kaspi 87]

Biological Cells

Biochemical interpretation of the trapdoor channel [Berger 71]

Coordinated Action in a Network

[Figure: network with nodes X1, ..., X5 and messages M1, ..., M4]

Task Assignment

Computation Tasks: T = {0, 1, 2}

Processors: X, Y, and Z

$X^n \sim$ iid, Unif({0, 1, 2})

[Figure: nodes $X^n$, $Y^n$, and $Z^n$ connected by links of rates R1 and R2]

$M_1 \in \{1, \dots, 2^{nR_1}\}$

What are the required rates (R1, R2)?

Task Assignment: Achievable Rates

Idea 1: Describe $X^n$:

R1 = R2 = H(X) = log(3).

A protocol tells Y and Z how to orient around X.

Task Assignment: Achievable Rates

Idea 2: Minimize R1:

$R_1 \ge \min_{p(y|x):\, X \ne Y} I(X;Y) = H(X) - \max_{p(y|x):\, X \ne Y} H(Y \mid X) = \log 3 - \log 2.$

Describe $Z^n$ at full rate: R2 = log 3.

Task Assignment: Achievable Rates

Idea 3: Restrict the support of Y and Z:

Y ∈ {0, 1}, Z ∈ {0, 2}.

By default Y = 1 and Z = 2. The encoder tells them when to move out of the way.

R1 ≥ H(Y) = H(1/3) = log 3 − 2/3 bits,

R2 ≥ H(Z) = H(1/3) = log 3 − 2/3 bits.

Task Assignment: Achievable Rates

Achievable rates for general joint distribution p(x, y, z):

R1 ≥ I(X; Y, U),

R2 ≥ I(X; Z, U),

R1 + R2 ≥ I(X; Y, U) + I(X; Z, U) + I(Y; Z | X, U),

for some U.

[Cover, Permuter 07], [Zhang, Berger 95]

Task Assignment: Achievable Rates

Idea 4: Restrict the support of Y and Z around $\hat{X}$:

First send $\hat{X}$ to both Y and Z, where $\hat{X} = X$ with probability $\frac{\alpha}{\alpha+2}$.

$p(\hat{x} \mid x = 1)$:

$\hat{x} = 0$: $\frac{1}{\alpha+2}$

$\hat{x} = 1$: $\frac{\alpha}{\alpha+2}$

$\hat{x} = 2$: $\frac{1}{\alpha+2}$

$Y \in \{\hat{X}, \hat{X} + 1\}$, $Z \in \{\hat{X}, \hat{X} + 2\}$.

The encoder tells them when to move out of the way (onto $\hat{X}$).

$R_1 \ge I(X; \hat{X}) + H(Y \mid \hat{X})$,

$R_2 \ge I(X; \hat{X}) + H(Z \mid \hat{X})$.

Optimize α (compression rate of $\hat{X}$):

$\alpha^* = \phi = \frac{\sqrt{5}+1}{2}.$

Resulting rates: R1 = R2 = log 3 − log φ.
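The optimization over α can be reproduced numerically. The sketch below rests on assumptions that the slides only imply: arithmetic on task labels is modulo 3, the estimate of X follows the symmetric distribution above, and Y sits at the estimate plus 1 by default, moving onto the estimate exactly when X occupies that default slot. The name `idea4_rate` is hypothetical.

```python
import math

def entropy(probs):
    """Entropy in bits of a finite distribution."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

def idea4_rate(alpha):
    """R1 = I(X; X_hat) + H(Y | X_hat) under the assumptions stated above."""
    a = alpha + 2
    # X is uniform and the test channel is symmetric, so H(X_hat) = log2(3).
    mutual_info = math.log2(3) - entropy([alpha / a, 1 / a, 1 / a])
    # Y leaves its default slot only when X sits there, with probability 1/a.
    h_y_given_xhat = entropy([1 / a, 1 - 1 / a])
    return mutual_info + h_y_given_xhat

phi = (math.sqrt(5) + 1) / 2
grid = [1 + i / 10000 for i in range(20001)]  # alpha in [1, 3]
alpha_best = min(grid, key=idea4_rate)

print("best alpha:", alpha_best, "phi:", phi)
print("rate at phi:", idea4_rate(phi), "log2(3) - log2(phi):", math.log2(3 / phi))
print("Idea 3 rate:", math.log2(3) - 2 / 3)
```

Under these assumptions the minimizing α matches φ to the grid resolution, and the resulting rate of about 0.891 bits is below the log 3 − 2/3 ≈ 0.918 bits of Idea 3.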

Summary

[Figure: diagram with labels 1, φ, and φ²]

1 Blackwell’s Trapdoor Channel
- CFB = log φ.
- Transform to equivalent channel
- Simple zero-error communication scheme
- Guess and check solution to Bellman equation

2 Three-node task assignment
- Achievable symmetric rate: H(X) − log φ
- Two phases of communication