Sequential Human Action Recognitionshushman/cv15_poster.pdf · 2016-12-15 · Sequential Human...


Sequential Human Action Recognition

Shushman Choudhury and Stefanos Nikolaidis

16720 Computer Vision 2015

Problem and Motivation

Results and Challenges

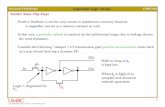

Approaches: Hidden Markov Model

➢ States S: The set of states is the set of action classes C

➢ Transition function T : S → Π(S). Indicates the probability of an action given the previous action

➢ Observation function Z : S → Π(O). As observations, we use the direction of right-hand movement in 3D, disregarding displacements below a predefined threshold

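➢ We estimate the current action by filtering, i.e. by computing the belief over states given the observations up to the current frame.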
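The model above can be sketched in code as follows. This is a minimal illustration, not the poster's implementation: the six-way axis quantization in `quantize_direction`, the threshold value, the two-action toy model, and all probabilities are assumptions. Only the use of thresholded right-hand movement directions as observations, and filtering as the estimation method, come from the text.

```python
import numpy as np


def quantize_direction(prev_pos, cur_pos, threshold=0.05):
    """Map a 3D hand displacement to a discrete direction symbol.

    Returns None when the displacement is shorter than `threshold`
    (such frames are disregarded). Otherwise returns an index 0..5 for
    +x, -x, +y, -y, +z, -z, chosen by the dominant axis. The six-way
    scheme is a hypothetical choice for illustration.
    """
    delta = np.asarray(cur_pos, dtype=float) - np.asarray(prev_pos, dtype=float)
    if np.linalg.norm(delta) < threshold:
        return None
    axis = int(np.argmax(np.abs(delta)))
    return 2 * axis + (0 if delta[axis] > 0 else 1)


def forward_filter(prior, transition, emission, observations):
    """Return the belief P(state_t | o_1..o_t) for every t, one row per step.

    transition[i, j] = P(next state j | current state i)
    emission[i, k]   = P(observation k | state i)
    Assumes every observed symbol has nonzero probability under some state.
    """
    transition = np.asarray(transition, dtype=float)
    emission = np.asarray(emission, dtype=float)
    belief = np.asarray(prior, dtype=float)
    beliefs = []
    for t, obs in enumerate(observations):
        if t > 0:
            belief = belief @ transition    # predict the next state
        belief = belief * emission[:, obs]  # weight by observation likelihood
        belief = belief / belief.sum()      # normalize to a distribution
        beliefs.append(belief)
    return np.array(beliefs)


# Toy example: two actions, six direction symbols (all numbers made up).
positions = [(0, 0, 0), (0.2, 0, 0), (0.21, 0, 0), (0.21, -0.3, 0)]
symbols = [quantize_direction(a, b) for a, b in zip(positions, positions[1:])]
symbols = [s for s in symbols if s is not None]  # drop sub-threshold moves

prior = [0.5, 0.5]
transition = [[0.9, 0.1], [0.1, 0.9]]
emission = [[0.40, 0.40, 0.05, 0.05, 0.05, 0.05],   # action 0: mostly x motion
            [0.05, 0.05, 0.40, 0.40, 0.05, 0.05]]   # action 1: mostly y motion
beliefs = forward_filter(prior, transition, emission, symbols)
estimated_actions = beliefs.argmax(axis=1)  # most probable action per step
```

Taking the argmax of each belief row gives a per-frame action estimate. Because each belief depends on the previous one through the transition matrix, an early wrong estimate can persist across later frames.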

[Figure: test accuracy (axis range 0 to 0.9) on Test1 through Test6 for the Combined, LDA, and HMM methods.]

In human-robot collaboration on physical tasks with a known sequence of subtasks, the robot often needs to accurately identify the actions of its human counterpart. For instance, in a table-carrying scenario, the robot should be able to infer the table rotation intended by its human teammate in order to perform the task collaboratively.

We consider the problem of recognizing human actions that are part of a sequence in an activity. In particular, we want to estimate the action that a human is performing at every frame of the video. Given the current frame t and a set of action classes C, our goal is to find the class that is most probable given the sequence of observations up to time t:
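One way to write this objective, assuming $o_{1:t}$ denotes the observations up to frame $t$ and $s_t \in C$ the action at frame $t$ (this notation is an assumption, not taken from the poster):

$$
\hat{c}_t = \arg\max_{c \in C} P(s_t = c \mid o_{1:t})
$$

Under a hidden Markov model, this posterior satisfies the standard filtering recursion

$$
P(s_t \mid o_{1:t}) \propto P(o_t \mid s_t) \sum_{s_{t-1} \in C} P(s_t \mid s_{t-1}) \, P(s_{t-1} \mid o_{1:t-1}).
$$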

[1] Xia, Lu, Chia-Chih Chen, and J. K. Aggarwal. "View invariant human action recognition using histograms of 3D joints." 2012 IEEE Computer Society Conference on Computer Vision and Pattern Recognition Workshops (CVPRW). IEEE, 2012.

We used a subset of the UTKinect-Action Dataset [1]. The dataset has 20 videos of 10 different human subjects, performing activities with 10 different action types in a sequence. We considered the following 6 action types: Walk, Sit down, Stand up, Pick up, Wave hands, Clap hands.

Challenges

➢ Learning of the transition and observation functions in the HMM

➢ Threshold selection for different subjects

➢ Consistent misclassification due to incorrect state