1 Memory Management Chapter 4 4.1 Basic memory management 4.2 Swapping (εναλλαγή) 4.3...

-

Upload

adela-oconnor -

Category

Documents

-

view

222 -

download

3

Transcript of 1 Memory Management Chapter 4 4.1 Basic memory management 4.2 Swapping (εναλλαγή) 4.3...

1

Memory Management

Chapter 4

4.1 Basic memory management4.2 Swapping (εναλλαγή)4.3 Virtual memory (εικονική/ιδεατή μνήμη)4.4 Page replacement algorithms (αντικατάσταση σελιδών)4.5 Modeling page replacement algorithms4.6 Design issues for paging systems4.7 Implementation issues4.8 Segmentation (κατάτμηση)

2

Memory Management

• “Programs expand to fill the memory available to hold them”

• The part of the OS that manages memory is called memory manager. Tasks:– which parts of memory are in use and which are not– allocate/deallocate memory to processes– manage swapping between main memory and disk

when memory is not large enough to hold all the processes

3

Memory Management

• Ideally programmers want memory that is– large

– fast

– non volatile

• Memory hierarchy – small amount of fast, expensive memory – cache

– some medium-speed, medium price main memory

– gigabytes of slow, cheap disk storage

• Memory manager handles the memory hierarchy

4

Basic Memory ManagementMonoprogramming without Swapping or Paging

Three simple ways of organizing memory- an operating system with one user process

5

Multiprogramming with Fixed Partitions

• Fixed memory partitions– separate input queues for each partition (internal fragment., delays)– single input queue (alternative: favor the largest)

• Simple to understand, implement, run to completion (OS360)

6

Relocation and Protection

• Multiprogramming introduces two problems:– Rellocation: when a program is linked, the linker must

know at what address the program will begin in memory. For example, call to a procedure at relative address 100. One solution: modify during loading.

– Protection: One process can access the address space of another process. One solution (IBM): divide memory into blocks of 2K and assign a 4-bit protection code to each block.

7

Relocation and Protection

• Best solution: use base and limit hardware registers– address locations added to the base value register before sent to

memory (to map to physical address)

– address locations larger than base+limit value is an error

• Additional advantage: processes sometimes move within memory after they have started execution: all that is needed is to change the value of the base register

8

Modeling Multiprogramming

• One model: 20% of the time a process is in memory, it computes => 5 processes 100% CPU utilization (unrealistic).

• Probabilistic viewpoint:– each process spends a fraction p in I/O state– if n processes, Prob(CPU idle) = pn – CPU utilization = 1 – pn – n : degree of multiprogramming

9

Modeling Multiprogramming

CPU utilization as a function of number of processes in memory

Degree of multiprogramming

10

Modeling Multiprogramming

• This model just an approximation: processes are not independent (two processes can not run concurrently)

• This model can also be used for batch systems

11

Analysis of Multiprogramming System Performance

• Arrival and work requirements of 4 jobs• CPU utilization for 1 – 4 jobs with 80% I/O wait• Sequence of events as jobs arrive and finish

– note numbers show amout of CPU time jobs get in each interval

12

Swapping (Εναλλαγή)

• So far, processes remain in main memory until they are done (loaded once). With a large number of processes we need swapping: moving processes from/to main memory to/from disk.

• Fixed partitions could be used, but …

• Therefore, we use variable partitions (size, location and number varies dynamically with number of processes)

13

Swapping

Memory allocation changes as – processes come into memory– leave memory

Shaded regions are unused memory

14

Swapping (Εναλλαγή)

• Variable partitions = flexibility, added complexity

• Memory compaction = moving all processes downwards (special hardware)

• Size of the allocated space for a process is an issue (processes can grow – malloc). If adjacent holes exist => OK, otherwise it has to be moved, or another process has to be moved, or, simply, killed. Allocate some extra memory.

15

Swapping

• Allocating space for growing data segment• Allocating space for growing stack & data segment

16

Memory Management with Bit Maps

• Part of memory with 5 processes, 3 holes (a)–tick marks show allocation units–shaded regions are free

• Corresponding bit map (b)

• The size of the allocation is a design issue.• When a process must be brought in, the bit map must be searched for k consecutive 0 bits

17

Memory Management with Linked Lists

• Part of memory with 5 processes, 3 holes• Linked list of allocated and free memory segments, a

segment is a process or a hole between 2 processes.• This example: segment list is sorted by address;

updating the list is straightforward when in/out.

18

Memory Management with Linked Lists

Four neighbor combinations for the terminating process X

19

Memory Management with Linked Lists

• Processes and holes can be kept in a list sorted by address. Possible algorithms for de/allocation:– First fit– Next fit– Best fit– Worst fit

• Processes and holes can be kept in separate lists to speed up allocation – overhead in deallocation

• The hole list can be kept sorted on size.• Quick fit: separate lists for some common sizes

20

Memory Management with Buddies

• The memory manager maintains a list of free blocks of size 1,2,4,8,16 bytes up to the size of the memory. (1M memory = 21 lists).

• Initially all of memory is free and the 1M list has a single entry containing a single 1M hole.

• As memory requests are coming in, lists are broken down to a power of 2 large enough to grant the request.

21

Memory Management with Buddies

A

A

128

A

512

256

128

128

128

128

128

128

128

128

B

B

B

B 512

64

64

64

64

D

D

256

256 C

C

C

C

C

512

512

512

512

512

1024

1024Initially

Request 60

Request 35

Request 80

Return A

Request 70

Return B

Return D

Return C

• Internal fragmentation• External fragmentation

22

Analysis of Swapping Systems• Allocation of swap space

– Disk swap area: somewhere in swap area/specific place

• Analyze external fragmentation• Average process after the system has come to equilibrium• Half of the operations above it will be process allocations,

half will be process deallocations => half of the time it has another process, half of the time has a hole.

• Averaged over time if n processes=> n/2 holes (50% rule)• Unused memory rule:

– f: fraction of memory occupied by holes– k: k>0 such that if s is the avg process size, ks is the avg hole sizeThen: f = k / k+2 (e.g. if k=1/2, then f = 0.2 – wasted memory)

23

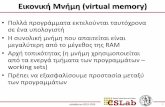

Virtual Memory

• In swapping processes swap in/out because they block for I/O (or for other reasons – scheduling).

• In virtual memory, all processes are virtually in main memory. Physically, only part of them.

• Programs too big to fit in memory => broken down to overlays (stored in disk). OS did the swapping, user did the splitting => virtual memory.

• Example: 1M program can run on a 256K machine by carefully choosing the 256K overlays.

• Virtual memory fits well with multiprogramming (why?)

24

Virtual Memory - Paging

• Memory address space: MOVE REG,1000.• Virtual addresses, virtual address space.• No virtual memory => address requests directly in the bus• Virtual memory => address requests go to MMU

(memory management unit) that maps the virtual address onto a physical memory address.

25

Virtual MemoryPaging

The position and function of the MMU

26

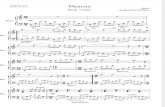

PagingThe relation between

virtual addressesand physical memory addres-ses given bypage table

Example: 16-bit addresses from 0 upTo 64K (virtual addresses). Physical Memory 0 to 32K (15-bit). Page sizeis 4K.

Examples:-MOVE REG,0-MOVE REG,8192-MOVE REG,21500 (20 bytes in page 5)-MOVE REG,32780 (12 bytes in page 8) = page fault

27

Virtual Memory - Paging

• Page fault:– MMU notices that the page is unmapped and cause the CPU to

trap to the Operating System.

– the OS chooses a little-used page frame and puts it in the disk

– the OS marks the corresponding virtual page as unmapped

– replace the cross at page fault virtual page as mapped and

– re-execute the trapped instruction

28

Page Tables

Internal operation of MMU with 16 4 KB pages

29

Virtual Memory - Paging

• Two issues:– The page table can be extremely large

– The mapping must be FAST

• Consider virtual addresses of 32 bits (or even 64!)– 4-KB pages => page table has 1M pages

• Each instruction needs to do 1,2 or even more page table references!

• One solution: an array of registers like in the previous fig.

30

Page Tables

• 32 bit address with 2 page table fields

• Two-level page tables

Second-level page tables

Top-level page table

31

Page Tables

Typical page table entry

32

TLBs – Translation Lookaside Buffers

A TLB to speed up paging

33

Inverted Page Tables

Comparison of a traditional page table with an inverted page table